FAQs

What is NVIDIA Tesla?

NVIDIA Tesla is a product line that NVIDIA introduced after the Quadro professional acceleration cards and the GeForce entertainment graphics cards. It is mainly used for high-performance computing (HPC) across a broad range of scientific research. NVIDIA® Tesla® GPU accelerators speed up the most demanding HPC workloads in ultra-large data centers.

What is computing acceleration?

Accelerated computing refers to the use of hardware accelerators or co-processors to perform floating-point calculations and graphics processing more efficiently than software running on CPUs. Depending on your business scenario, you can choose GPU Computing instances for general computing or GPU Rendering instances for graphics-intensive applications.

What Are the Strengths of GPUs Compared to CPUs?

Compared to CPUs, GPUs possess more Arithmetic Logic Units (ALUs) and support massive multi-threaded parallel computing.

When Should You Choose GPU Instances?

GPU instances are best suited for highly parallel applications, such as workloads using thousands of threads. When graphics processing involves massive computational requirements where each task is relatively small, the execution of a set of operations forms a pipeline. The throughput of this pipeline is more critical than the latency of individual operations. To build applications that fully utilize this parallelism, users need specialized knowledge of GPU devices and understand how to program for various graphics APIs (DirectX, OpenGL) or GPU computing programming models (CUDA, OpenCL).

How to Choose Drivers Based on Instance Types and Scenarios?

NVIDIA GPU instance types include physical passthrough instances (full GPU) and vGPU instances (fractional GPU, e.g., 1/4 GPU).

Physical passthrough GPUs can use Tesla drivers or GRID drivers (a few models do not support GRID drivers) to achieve computational acceleration in different scenarios.

vGPUs can only use specific versions of GRID drivers to achieve computational acceleration.

For driver installation steps related to NVIDIA GPU instances, please refer to Installing NVIDIA Tesla Drivers and Installing NVIDIA GRID Drivers. You can refer to the table below to select the driver type based on the instance type and application scenario:

| Instance Type | Scenario | Driver Type | Recommended Installation Method |
| --- | --- | --- | --- |
| Computing instance - passthrough card | General computing | Tesla driver | Select Install GPU driver automatically on the purchase page, or download and install the driver from the NVIDIA official website. |
| Computing instance - passthrough card | Graphics rendering | GRID driver | Apply for GRID drivers and a license from the NVIDIA website and install them. |
| Rendering instance - vGPU - vDWS/vWS | Graphics rendering | GRID driver | Select a specific image with pre-installed GRID drivers. |

How to Install Drivers for GPU Instances?

Based on your actual needs, you can directly create an instance with GPU drivers installed, or manually install the corresponding GPU driver on an existing instance:

When creating a GPU instance, you can directly use an instance with installed GPU drivers in the following ways:

In the Image section of the purchase page, select a Public Image and check the option Install GPU driver automatically to install the appropriate version of the driver. This method is recommended. Note that it only supports certain Linux public images. For details, see Driver Installation Guide.

Select a vGPU instance with a public image that has the GRID driver pre-installed; there is no need to install the driver separately.

If you did not select automatic GPU driver installation when creating the GPU instance, or if the operating system or version you need is not available in the public images, refer to the NVIDIA Driver Installation Guide and Installing GRID Driver to manually install the corresponding driver so that the GPU instance works normally. To choose the GPU driver type, refer to the following:

For NVIDIA series GPU instances used for general computing, you need to install the Tesla Driver and CUDA. For more details, see Installing Tesla Driver and Installing CUDA Driver.

For NVIDIA series GPU instances used for 3D graphics rendering tasks (such as high-performance graphics processing and video encoding/decoding), you need to install the GRID Driver and configure the License Server. For more details, see Installing NVIDIA GRID Driver Guide.

How Is the Cloud GPU Service Charged?

Cloud GPU Service offers four purchasing methods: yearly/monthly subscription, pay-as-you-go, spot instances, and committed use billing, catering to different user scenarios. For details, see CVM Billing Modes. Note that some GPU models may support only 2-3 of these billing modes, subject to the actual display on the purchase page.

Can Cloud GPU Service Instances be Reconfigured?

Cloud GPU Service instances, including PNV4, GT4, GN10X/GN10Xp, GN6/GN6S, GN7, GN8, GNV4v, GNV4, GN7vw, and GI1, support configuration adjustments within the same instance family. However, GN7 instances do not support changes from passthrough mode (full GPU) to vGPU mode (fractional GPUs such as 1/4 GPU). GI3X does not currently support configuration adjustments.

Note:

For prerequisites, must-knows, and an operation guide on configuration adjustments, see Configuring the Instance Specifications.

For details on configuration adjustment fees, see Configuration Adjustment Billing Guide.

What is Local SSD?

Local SSD is local storage derived from the physical machine where the cloud server resides. This type of storage provides block-level data access capabilities for instances, featuring low latency, high random IOPS, and high I/O throughput. GPU Computing instances equipped with Local SSD do not support hardware upgrades (CPU, Memory), only bandwidth upgrades.

Can GPU Instances Support Access to CVM?

Yes. Cloud GPU Service instances have both private and public IP addresses, so they can interoperate with and access other cloud products such as CVM.

Which GPU Models Support the HARP Network Protocol?

All GPU instance types support the HARP network protocol.

Why Does nvidia-smi Show Less Memory Than the Actual GPU Memory on Cloud GPU Service?

The Tesla series GPUs have Error Correcting Code (ECC) enabled by default, which checks and corrects bit errors that may occur during data transmission and storage. When enabled, it reduces the available memory and may cause some performance loss. To ensure data accuracy, it is recommended to keep ECC enabled.

Why Does the Number of GPUs Shown by nvidia-smi Appear Smaller Than the Actual Number of GPUs When Creating a Multi-GPU Instance with a Custom Image?

Due to the driver being embedded during the creation of a custom image, the number of GPUs on the machine used to create the custom image can affect whether the GPUs in instances created from the image are properly loaded. It is generally recommended to use the same instance specifications or an instance type with more GPUs to create the image, ensuring that all GPUs are automatically recognized and their drivers are properly loaded.
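To confirm whether an instance created from a custom image is affected, you can compare the number of GPUs visible on the PCI bus with the number the driver has loaded. The following is a minimal sketch, assuming lspci (from pciutils) is installed; the helper function names are hypothetical, and 10de is NVIDIA's PCI vendor ID.

```shell
#!/bin/sh
# Count GPUs loaded by the driver, given `nvidia-smi -L` output on stdin.
count_driver_gpus() {
    awk '/^GPU [0-9]+:/ {n++} END {print n+0}'
}

# Count NVIDIA GPUs on the PCI bus, given `lspci -d 10de:` output on stdin.
# Only display-class devices are counted; bridges (e.g. NVSwitch) are skipped.
count_pci_gpus() {
    awk '/3D controller|VGA compatible controller/ {n++} END {print n+0}'
}

if command -v nvidia-smi >/dev/null 2>&1 && command -v lspci >/dev/null 2>&1; then
    loaded=$(nvidia-smi -L | count_driver_gpus)
    present=$(lspci -d 10de: | count_pci_gpus)
    echo "GPUs on PCI bus: $present, GPUs loaded by driver: $loaded"
    if [ "$loaded" -lt "$present" ]; then
        echo "Some GPUs are not loaded; consider rebuilding the image on an instance with at least as many GPUs."
    fi
fi
```

If the two counts differ, the recommendation above about which instance type to use when creating the image applies.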

How to Check the GPU Driver Version?

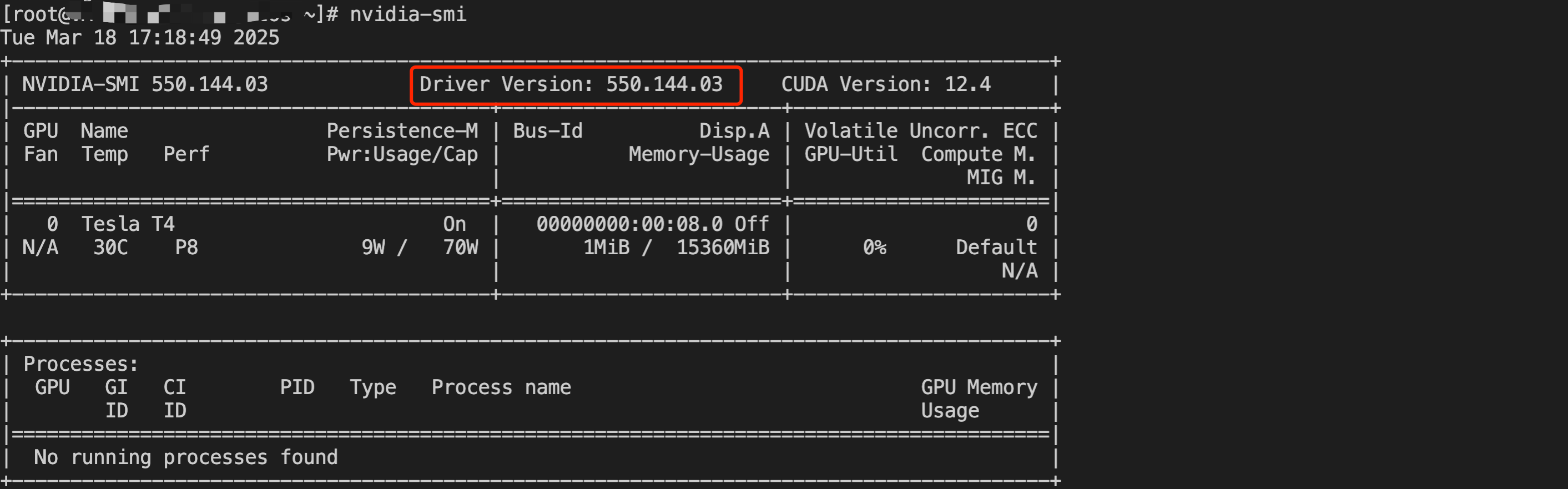

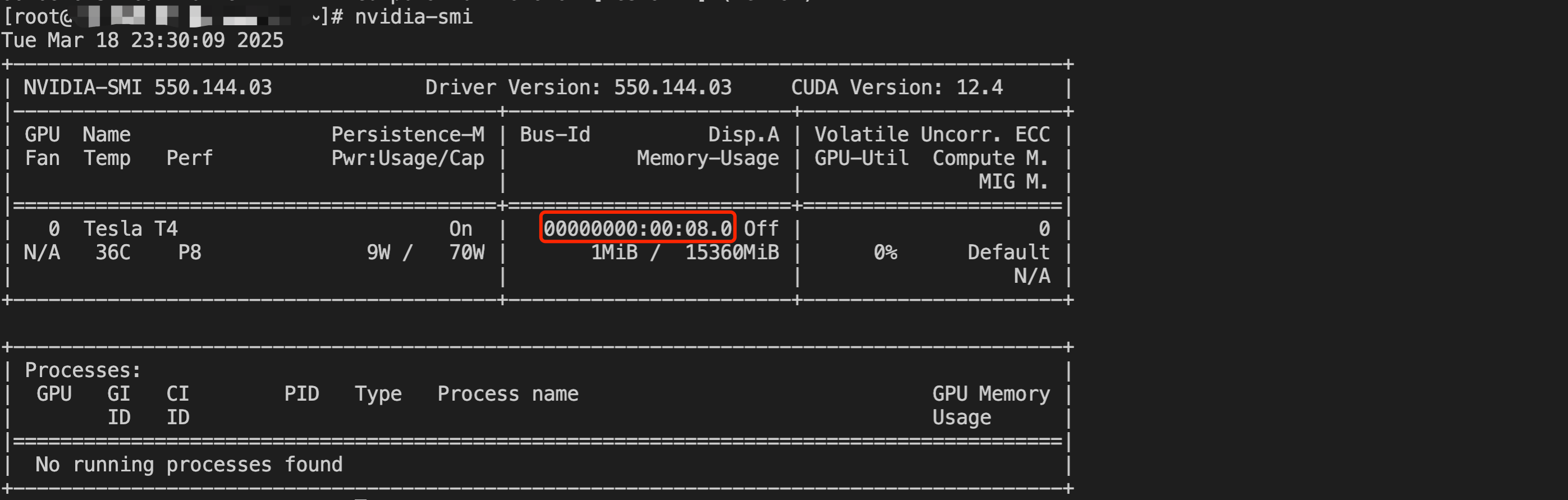

For GPU servers running Linux, you can refer to the NVIDIA Driver Installation Guide. After installing the driver, log in to the server and run the nvidia-smi command to check the GPU driver version. As shown in the red box below, after the nvidia-smi command is run, the driver version displayed is 550.144.03.

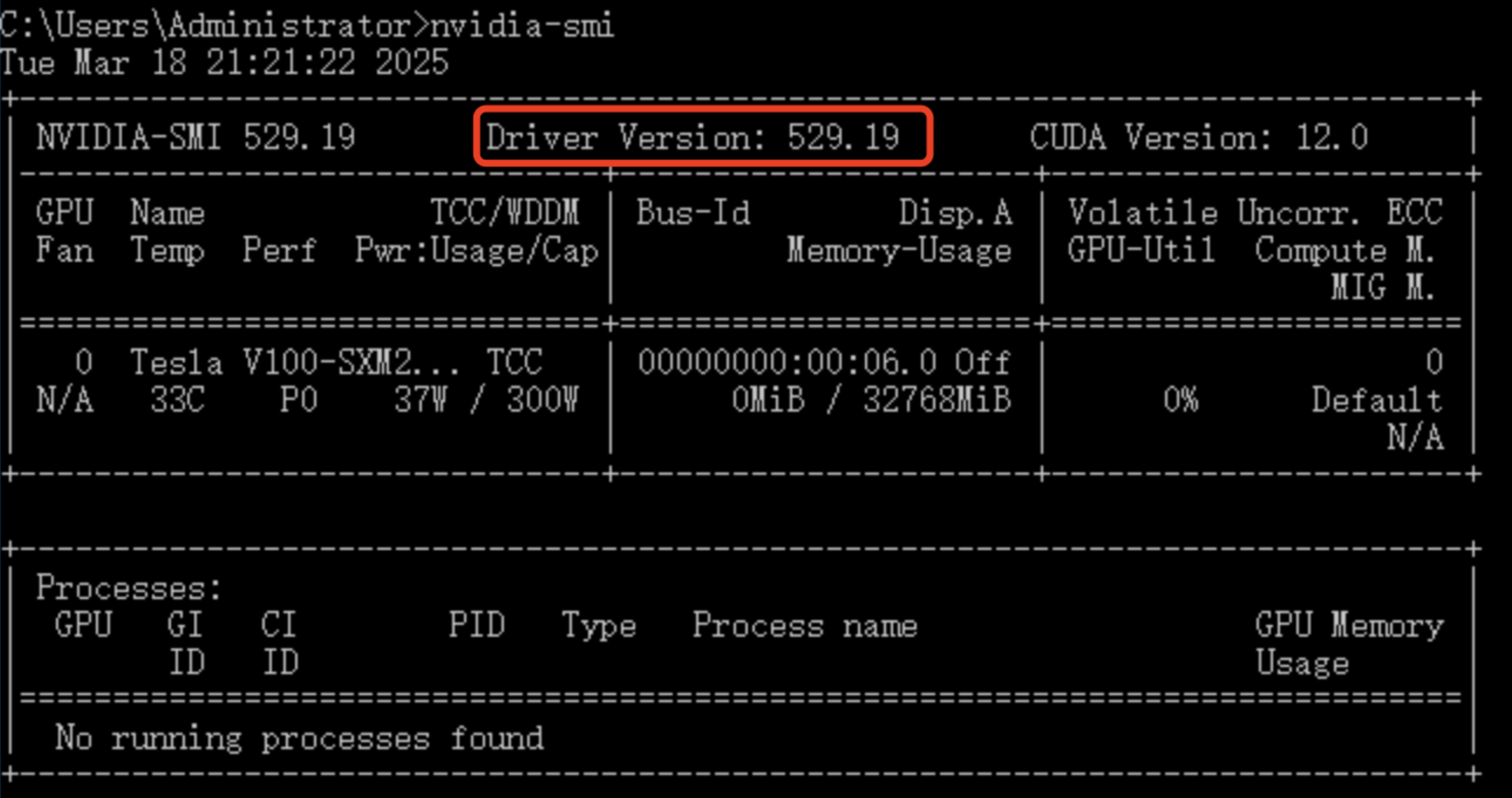

For GPU servers running Windows, you can refer to the NVIDIA Driver Installation Guide. After installing the driver, log in to the server and run the nvidia-smi command in Command Prompt to check the GPU driver version. As shown in the red box below, after the nvidia-smi command is run, the driver version displayed is 529.19.

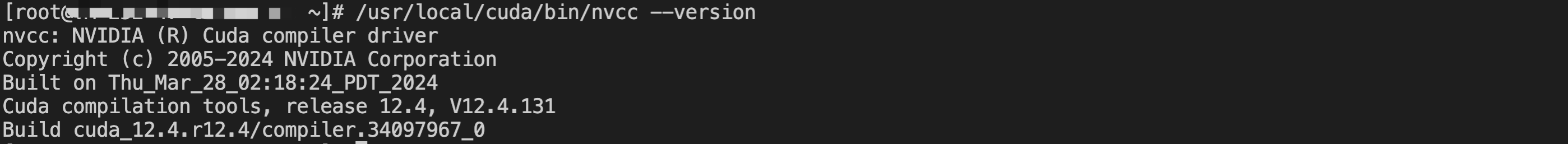
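On Linux, the driver version can also be read without inspecting the banner by eye. The sketch below assumes nvidia-smi is on the PATH; it uses the --query-gpu=driver_version option of nvidia-smi, and the helper function name is hypothetical. The helper extracts the same value from the banner line of plain nvidia-smi output.

```shell
#!/bin/sh
# Extract the driver version from the nvidia-smi banner read on stdin.
driver_version_from_banner() {
    sed -n 's/.*Driver Version: *\([0-9][0-9.]*\).*/\1/p' | head -n 1
}

if command -v nvidia-smi >/dev/null 2>&1; then
    # Direct query: prints one line per GPU, so keep only the first.
    nvidia-smi --query-gpu=driver_version --format=csv,noheader | head -n 1
    # Equivalent result parsed from the banner.
    nvidia-smi | driver_version_from_banner
fi
```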

How To Check the CUDA Version of the GPU?

For Linux servers, after the CUDA driver is installed, run the /usr/local/cuda/bin/nvcc --version command to check the CUDA version. As shown below, the CUDA version is 12.4.

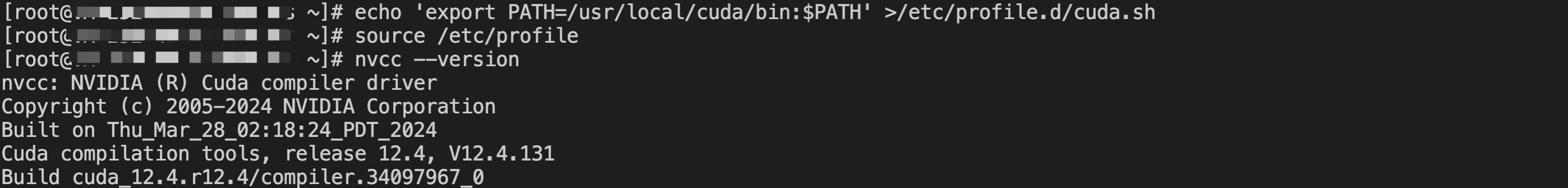

If the root user has run the following commands to configure environment variables, you can run the nvcc --version command directly to obtain CUDA version information.

    echo 'export PATH=/usr/local/cuda/bin:$PATH' >/etc/profile.d/cuda.sh
    source /etc/profile

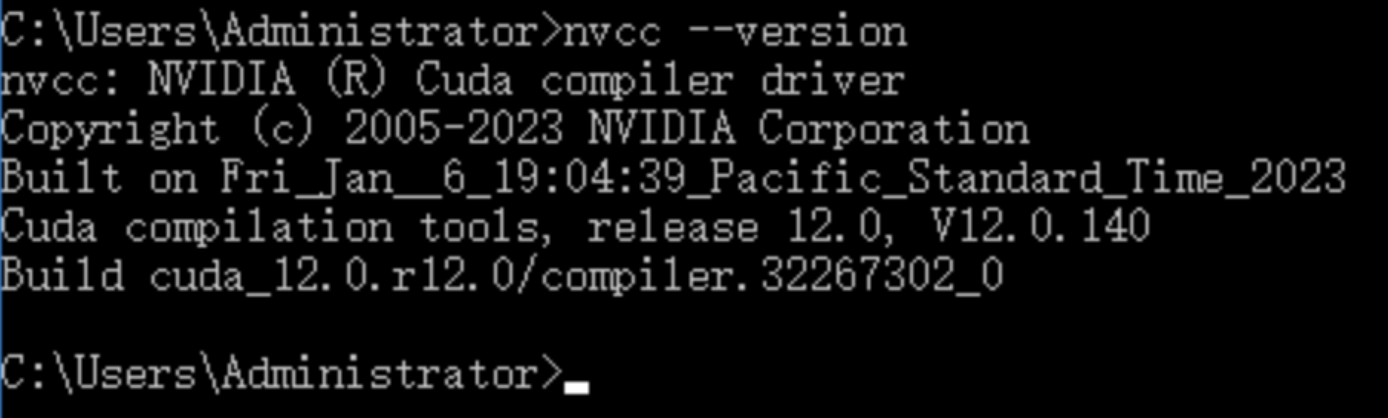

For Windows servers, refer to Installing CUDA Driver. After CUDA is installed, run the nvcc --version command in Command Prompt to check the CUDA version. As shown below, the CUDA version is 12.0.
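On Linux, the two cases above (nvcc on the PATH, or only under /usr/local/cuda/bin) can be handled by one script. This is a minimal sketch; the helper name first_executable is hypothetical, and the script assumes the default installation directory mentioned above.

```shell
#!/bin/sh
# Print the first argument that is an executable file; fail if none is.
first_executable() {
    for p in "$@"; do
        if [ -n "$p" ] && [ -x "$p" ]; then
            echo "$p"
            return 0
        fi
    done
    return 1
}

# Prefer nvcc from PATH, then fall back to the default CUDA location.
nvcc_path=$(first_executable "$(command -v nvcc 2>/dev/null)" /usr/local/cuda/bin/nvcc) || nvcc_path=""
if [ -n "$nvcc_path" ]; then
    # The release line looks like: Cuda compilation tools, release 12.4, V12.4.131
    "$nvcc_path" --version | sed -n 's/.*release \([0-9][0-9.]*\),.*/CUDA Toolkit release: \1/p'
else
    echo "nvcc not found on PATH or in /usr/local/cuda/bin"
fi
```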

How to Install the NVIDIA Fabric Manager Software Package?

NVIDIA Fabric Manager is a critical GPU management tool, particularly essential in high-performance computing and deep learning domains. It ensures efficient data transmission by optimizing communication between GPUs. After installation, the tool configures and manages NVLink connections, performing tasks such as bandwidth allocation, error handling, and load balancing.

For GPU servers running Ubuntu, see the following steps for installation:

1. Log in to the console, select the target GPU instance, click Log in on the right, and choose a connection method to Log in to Instance based on your needs.

2. Copy the following command to the instance terminal.

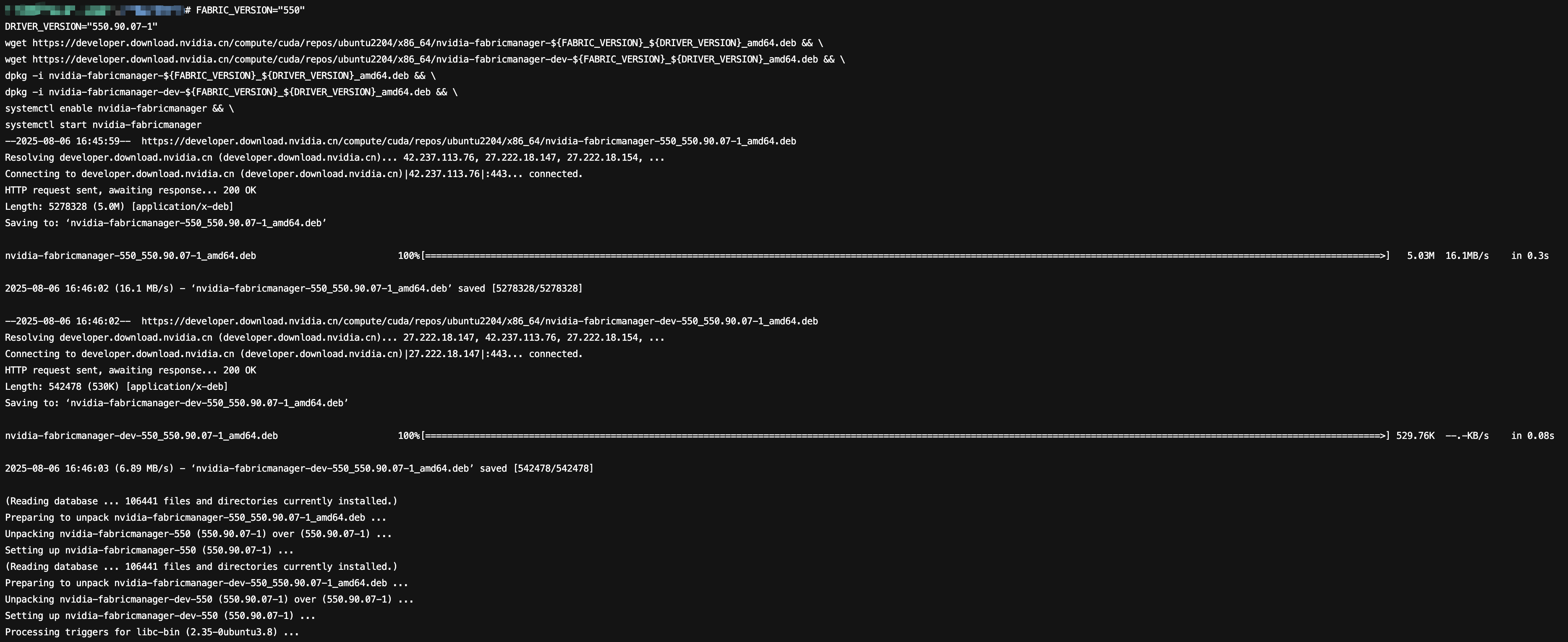

    # Set Major Version Number
    FABRIC_VERSION="550"
    # Set the full driver version number (including minor version and revision number)
    DRIVER_VERSION="550.90.07-1"
    # Download Main Installation Package
    wget https://developer.download.nvidia.cn/compute/cuda/repos/ubuntu2204/x86_64/nvidia-fabricmanager-${FABRIC_VERSION}_${DRIVER_VERSION}_amd64.deb &&
    # Download Development Package
    wget https://developer.download.nvidia.cn/compute/cuda/repos/ubuntu2204/x86_64/nvidia-fabricmanager-dev-${FABRIC_VERSION}_${DRIVER_VERSION}_amd64.deb &&
    # Install Main Package
    dpkg -i nvidia-fabricmanager-${FABRIC_VERSION}_${DRIVER_VERSION}_amd64.deb &&
    # Install Development Package
    dpkg -i nvidia-fabricmanager-dev-${FABRIC_VERSION}_${DRIVER_VERSION}_amd64.deb &&
    # Enable System Service
    systemctl enable nvidia-fabricmanager &&
    # Start Service Immediately
    systemctl start nvidia-fabricmanager

3. As needed, adjust the FABRIC_VERSION and DRIVER_VERSION parameters, then run the command to download and install the NVIDIA Fabric Manager software package. The successful result is shown in the figure below:

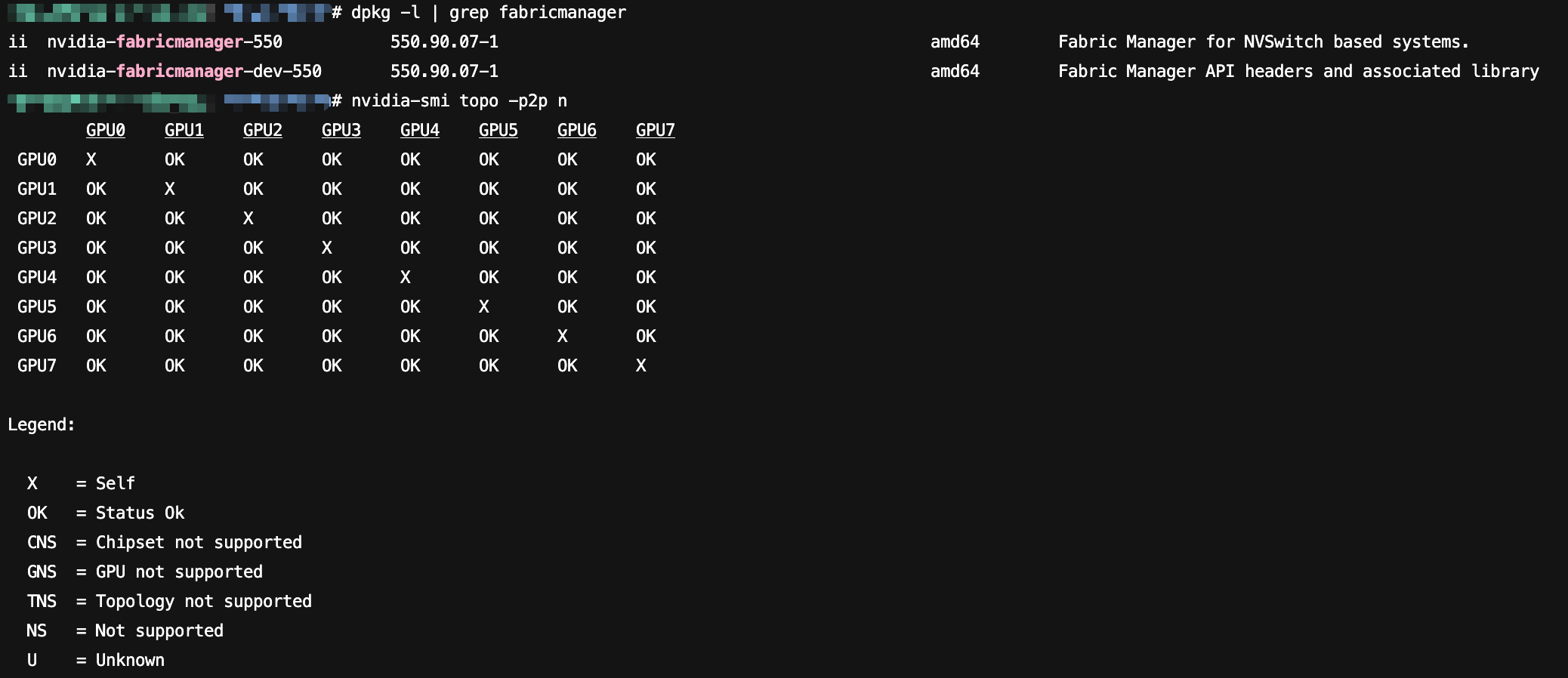

4. After installation, run the following command to check the NVIDIA Fabric Manager version information.

    # Check NVIDIA Fabric Manager Version Information
    dpkg -l | grep fabricmanager
    # Verify the Interconnect Status of Multiple GPUs
    nvidia-smi topo -p2p n

The successful execution result is as shown in the figure below:

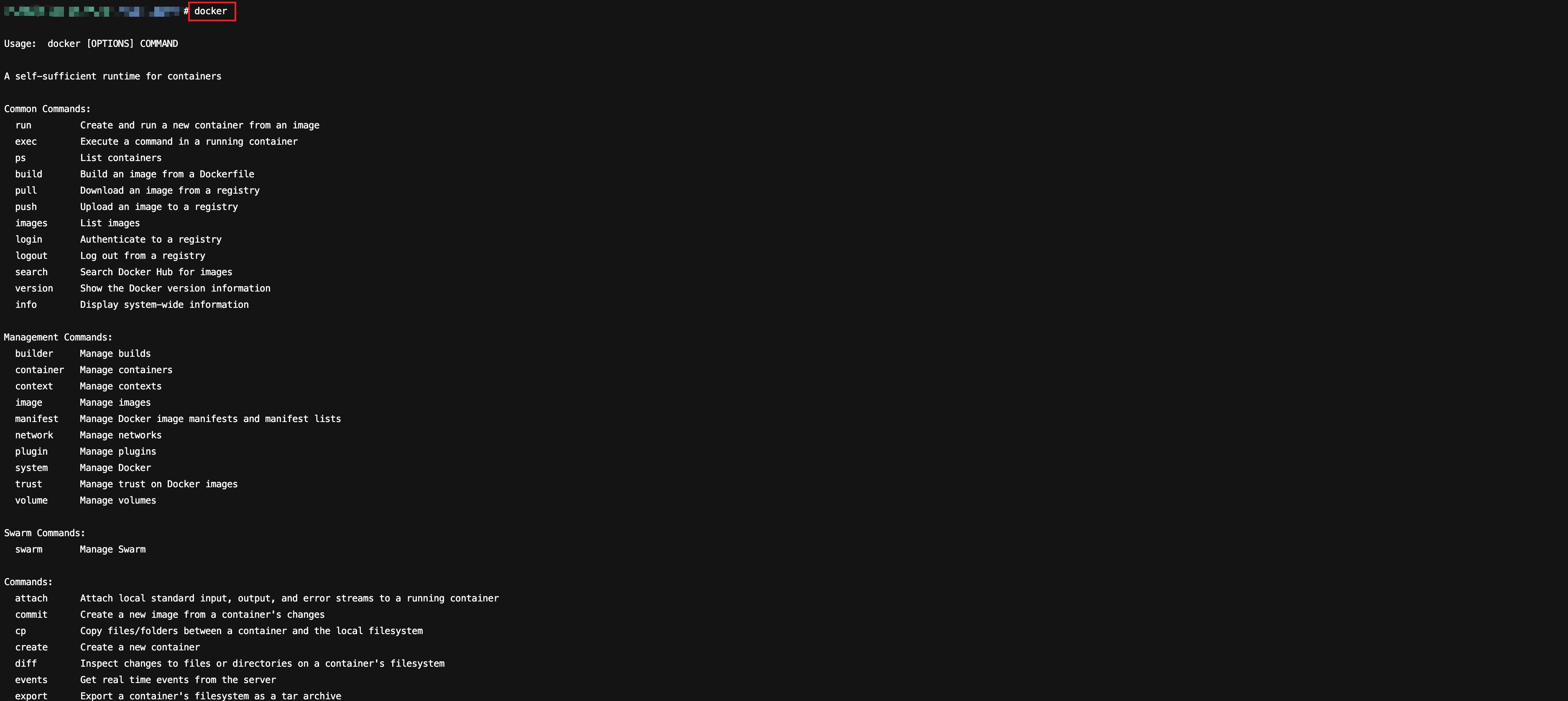
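Fabric Manager is expected to match the installed GPU driver version, so the FABRIC_VERSION and DRIVER_VERSION values used in step 2 can be derived from the driver instead of typed by hand. The sketch below is an illustration only: the function name is hypothetical, and the package revision suffix (1 by default) is an assumption taken from the 550.90.07-1 example above, so check the repository listing before downloading.

```shell
#!/bin/sh
# Build the Fabric Manager .deb file name for a driver version.
# $1: driver version, e.g. 550.90.07
# $2: package revision (assumed to be 1 when omitted)
fabric_deb_name() {
    driver=$1
    rev=${2:-1}
    major=${driver%%.*}    # 550.90.07 -> 550
    echo "nvidia-fabricmanager-${major}_${driver}-${rev}_amd64.deb"
}

if command -v nvidia-smi >/dev/null 2>&1; then
    drv=$(nvidia-smi --query-gpu=driver_version --format=csv,noheader | head -n 1)
    if [ -n "$drv" ]; then
        echo "Installed driver: $drv"
        echo "Matching package file: $(fabric_deb_name "$drv")"
    fi
fi
```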

How To Install the Docker Software Package

Docker is an open-source containerization platform designed to simplify the development, deployment, and operation of applications. It provides a lightweight, portable, and self-contained container environment that enables developers to build, package, and distribute applications in a consistent manner across different computers.

For GPU servers running Ubuntu, see the following steps for installation:

1. Log in to the console, select the target GPU instance, click Log in on the right, and choose a connection method to Log in to Instance based on your needs.

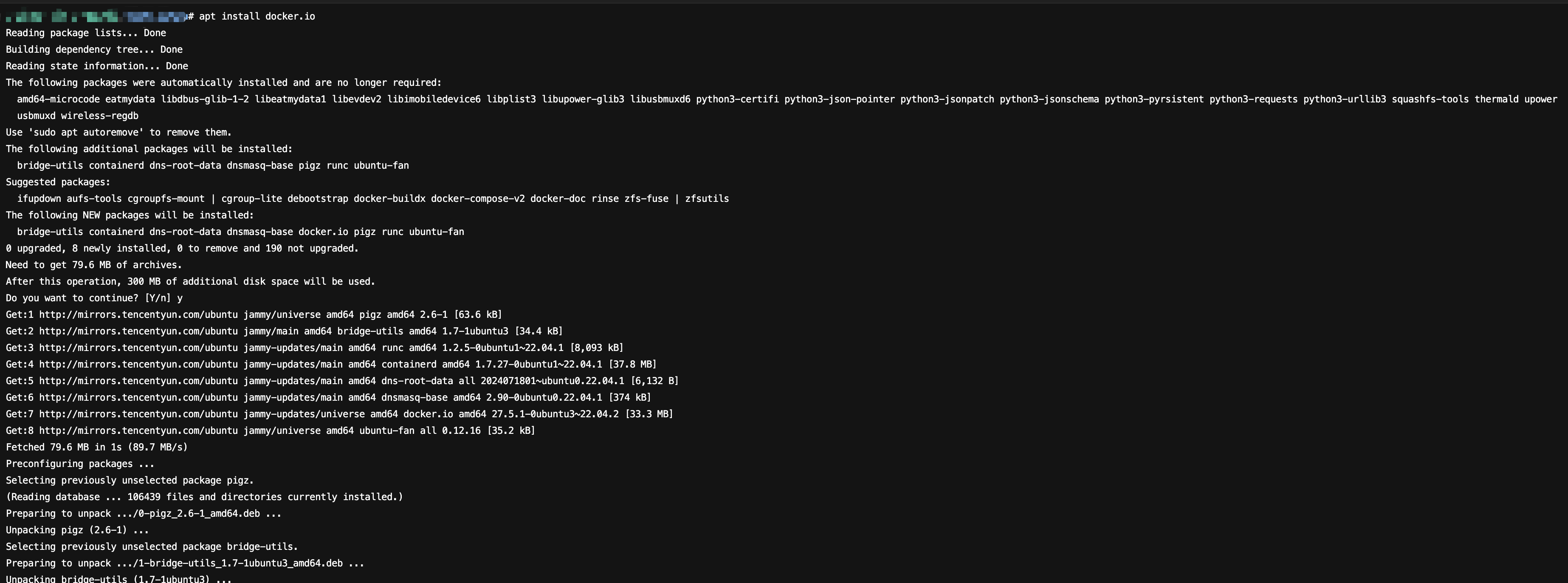

2. Run the apt install docker.io command to install the Docker software package. The successful result is shown in the figure below:

3. After installation, run the command -v docker command to check whether Docker is installed successfully. The successful result is shown in the figure below:

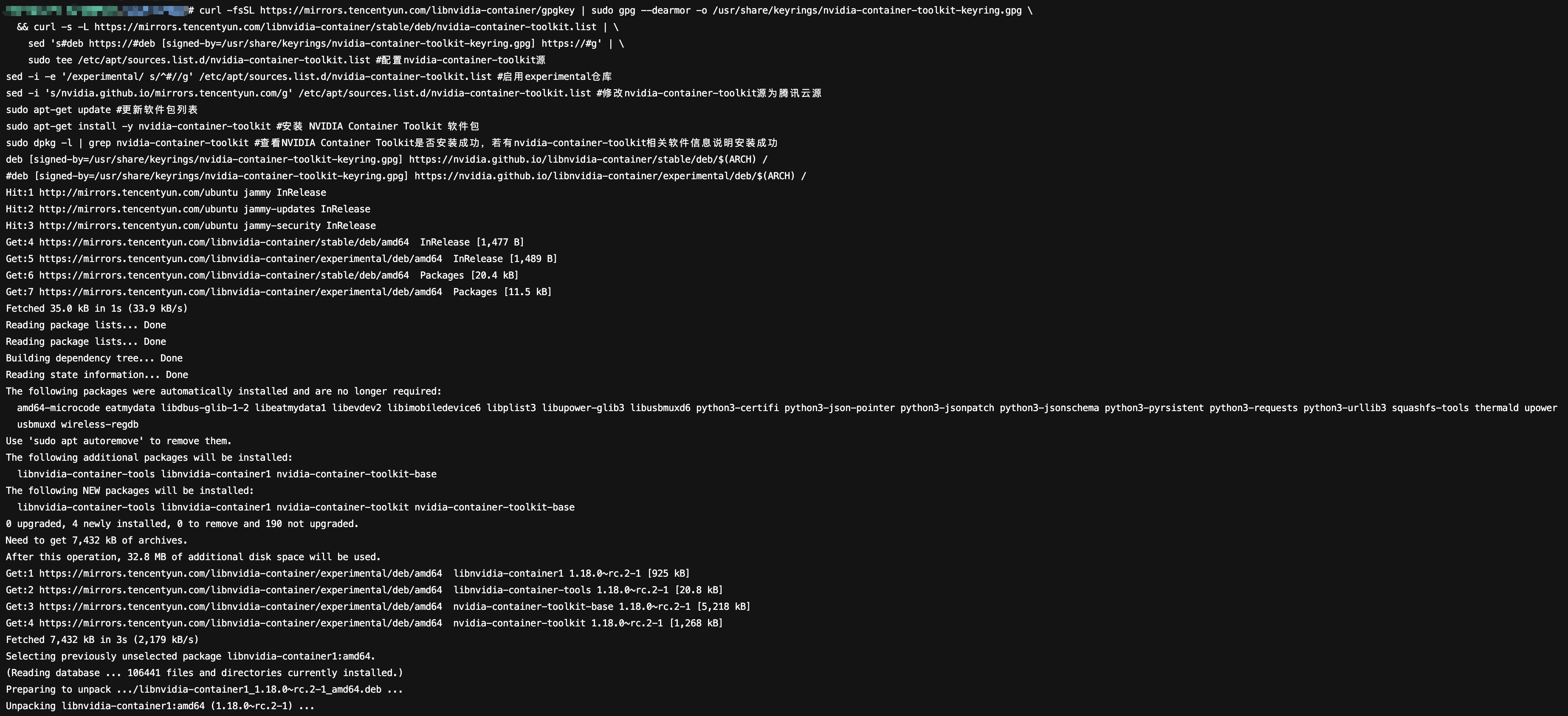

How to Install the NVIDIA Container Toolkit Toolchain?

NVIDIA Container Toolkit is an official toolchain introduced by NVIDIA, designed to address the complexity of GPU resource access in container environments. Through standardized and automated approaches, it seamlessly integrates GPU devices, driver libraries, and computing frameworks into the container ecosystem, serving as the cornerstone for building GPU-accelerated applications.

For GPU servers running Ubuntu, see the following steps for installation:

1. Log in to the console, select the target GPU instance, click Log in on the right, and choose a connection method to Log in to Instance based on your needs.

2. Copy the following command to the instance terminal.

    # Configure the nvidia-container-toolkit source
    curl -fsSL https://mirrors.tencentyun.com/libnvidia-container/gpgkey | sudo gpg --dearmor -o /usr/share/keyrings/nvidia-container-toolkit-keyring.gpg \
      && curl -s -L https://mirrors.tencentyun.com/libnvidia-container/stable/deb/nvidia-container-toolkit.list | \
        sed 's#deb https://#deb [signed-by=/usr/share/keyrings/nvidia-container-toolkit-keyring.gpg] https://#g' | \
        sudo tee /etc/apt/sources.list.d/nvidia-container-toolkit.list
    # Enable the experimental repository
    sed -i -e '/experimental/ s/^#//g' /etc/apt/sources.list.d/nvidia-container-toolkit.list
    # Modify the nvidia-container-toolkit source to Tencent Cloud source
    sed -i 's/nvidia.github.io/mirrors.tencentyun.com/g' /etc/apt/sources.list.d/nvidia-container-toolkit.list
    # Update the package list
    sudo apt-get update
    # Install the NVIDIA Container Toolkit package
    sudo apt-get install -y nvidia-container-toolkit
    # Check whether NVIDIA Container Toolkit is installed successfully. If related software information of nvidia-container-toolkit is displayed, it indicates successful installation.
    sudo dpkg -l | grep nvidia-container-toolkit
    # Restart docker for NVIDIA Container Toolkit to take effect
    sudo systemctl restart docker

3. Run the command to install the NVIDIA Container Toolkit package. The successful result is shown below:

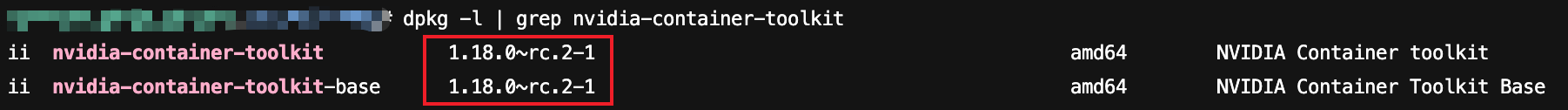

4. After installation, run the dpkg -l | grep nvidia-container-toolkit command to check the NVIDIA Container Toolkit version information. The successful result is shown below:

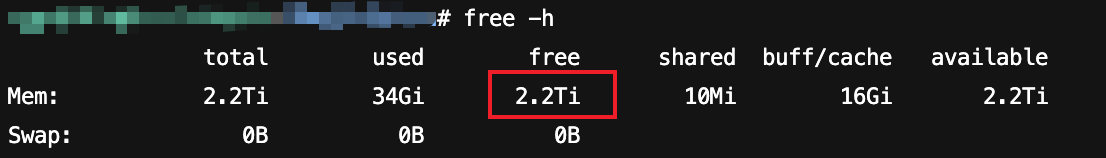

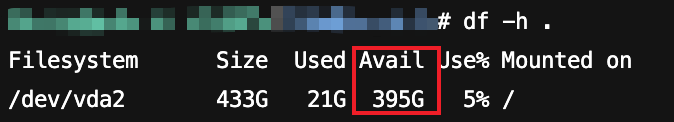
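Beyond checking the package version, a quick way to confirm that containers can reach the GPU is to run nvidia-smi inside a container. The following is a sketch under stated assumptions: the ubuntu image is only an example and must be pullable from your registry, the missing_commands helper is hypothetical, and the RUN_GPU_SMOKE_TEST variable is an invented opt-in switch so that nothing is started by accident.

```shell
#!/bin/sh
# Print each named command that is not found on PATH, one per line.
missing_commands() {
    for c in "$@"; do
        command -v "$c" >/dev/null 2>&1 || echo "$c"
    done
}

# Opt-in switch (hypothetical): set RUN_GPU_SMOKE_TEST=1 to actually run the container.
if [ "${RUN_GPU_SMOKE_TEST:-0}" = "1" ]; then
    missing=$(missing_commands docker nvidia-smi)
    if [ -n "$missing" ]; then
        echo "Missing prerequisites: $missing"
    else
        # --gpus all asks Docker to expose every GPU to the container.
        sudo docker run --rm --gpus all ubuntu nvidia-smi
    fi
fi
```

If the container prints the same GPU table as the host, the toolkit is wired into Docker correctly.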

How to Check the Remaining Space of Memory and Hard Disk?

For GPU servers running Ubuntu, run the free -h command to check available memory and the df -h . command to check available disk space in the current directory. The successful results are shown below:
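When the values are needed in a script, for example to check free space before downloading a driver package, the same information can be read in machine-friendly form. This is a minimal sketch assuming a Linux system with /proc/meminfo; the helper function names are hypothetical.

```shell
#!/bin/sh
# Print MemAvailable in KiB from /proc/meminfo-style input on stdin.
mem_available_kib() {
    awk '/^MemAvailable:/ {print $2}'
}

# Print the "Available" column in KiB from `df -Pk` output on stdin.
disk_available_kib() {
    awk 'NR==2 {print $4}'
}

if [ -r /proc/meminfo ]; then
    echo "Available memory (KiB): $(mem_available_kib < /proc/meminfo)"
fi
echo "Available disk space in current directory (KiB): $(df -Pk . | disk_available_kib)"
```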

Why Is the CUDA Version Reported by the nvidia-smi Command Inconsistent with That Reported by the nvcc --version Command?

The CUDA (Compute Unified Device Architecture) software stack is composed of the driver layer, the runtime layer, and the libraries layer. The APIs involved in the CUDA software stack include the driver layer API (Driver API) and the runtime layer API (Runtime API).

nvidia-smi displays the CUDA Driver API version, which is determined by the installed GPU driver. nvcc --version displays the Runtime API version of the CUDA Toolkit actually installed on the system. The two can be installed independently, so their versions may differ.
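The two versions can be read and compared side by side. The sketch below assumes nvidia-smi and nvcc are both on the PATH and that their output follows the usual banner formats; all three function names are hypothetical.

```shell
#!/bin/sh
# Driver API version: parsed from the nvidia-smi banner on stdin.
driver_cuda_version() {
    sed -n 's/.*CUDA Version: *\([0-9][0-9.]*\).*/\1/p' | head -n 1
}

# Runtime (Toolkit) version: parsed from `nvcc --version` output on stdin.
toolkit_cuda_version() {
    sed -n 's/.*release \([0-9][0-9.]*\),.*/\1/p' | head -n 1
}

# Succeed when major.minor version $1 is less than or equal to $2.
version_le() {
    [ "$(printf '%s\n%s\n' "$1" "$2" | sort -t. -k1,1n -k2,2n | head -n 1)" = "$1" ]
}

if command -v nvidia-smi >/dev/null 2>&1 && command -v nvcc >/dev/null 2>&1; then
    drv=$(nvidia-smi | driver_cuda_version)
    tk=$(nvcc --version | toolkit_cuda_version)
    echo "Driver API version: $drv, Toolkit (Runtime API) version: $tk"
    if version_le "$tk" "$drv"; then
        echo "The Toolkit is not newer than the Driver API version."
    else
        echo "The Toolkit is newer than the Driver API version."
    fi
fi
```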

Why Does the CUDA Driver Layer API Version Become Lower Than the CUDA Runtime API Version After GPU Drivers and CUDA Are Installed Using the "Background Auto-Installation GPU Driver Feature" in the Console on a GPU Cloud Server?

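CUDA supports forward compatibility across minor versions within the same major release. For example, a version 535 GPU driver reports CUDA 12.2 as its Driver API version but is also compatible with CUDA 12.4, so a Driver API version lower than the Runtime API version is expected in this case.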

Why Can Compute-Optimized GPU Cloud Servers Running Windows Display Graphics Card Information Using the nvidia-smi Command in Command Prompt After Driver Installation, but Not in Task Manager?

Since Compute-optimized GPU servers do not have GRID drivers installed by default, the GPU operates in TCC (Tesla Compute Cluster) mode. In TCC mode, the GPU is solely dedicated to computing and does not support graphics display capabilities. The WDDM (Windows Display Driver Model) mode supports both computing and graphics, but switching to WDDM mode is only possible when GRID drivers are installed on the GPU server. Consequently, no GPU-related performance monitoring data appears in Task Manager. After GPU GRID drivers are installed on a Windows OS GPU server, GPU performance monitoring data can be viewed in Task Manager.

How to Disable and Enable ECC Mode on Linux OS GPU Servers?

By default, the GPU on GPU servers has ECC Mode enabled. ECC (Error Correcting Code) is a technology that detects and corrects memory errors. When ECC Mode is enabled via the GPU driver, the driver dedicates a portion of VRAM as parity bits to detect data corruption. This allows it to identify and correct errors in video memory, significantly reducing the risk of data corruption caused by hardware failures or environmental interference and enhancing business stability.

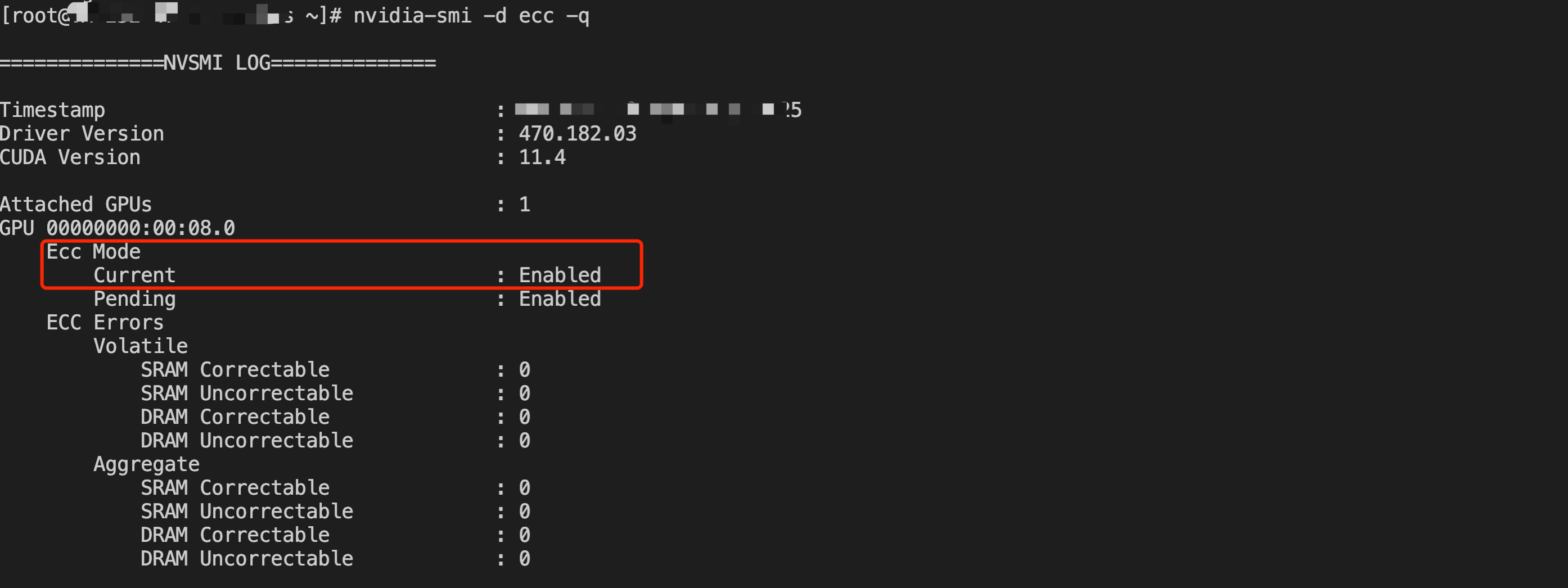

View ECC Mode

Execute the nvidia-smi -d ecc -q command to check whether ECC Mode is enabled. If ECC Mode is enabled, it displays "Current ECC Mode: Enabled"; if not, it displays "Current ECC Mode: Disabled".

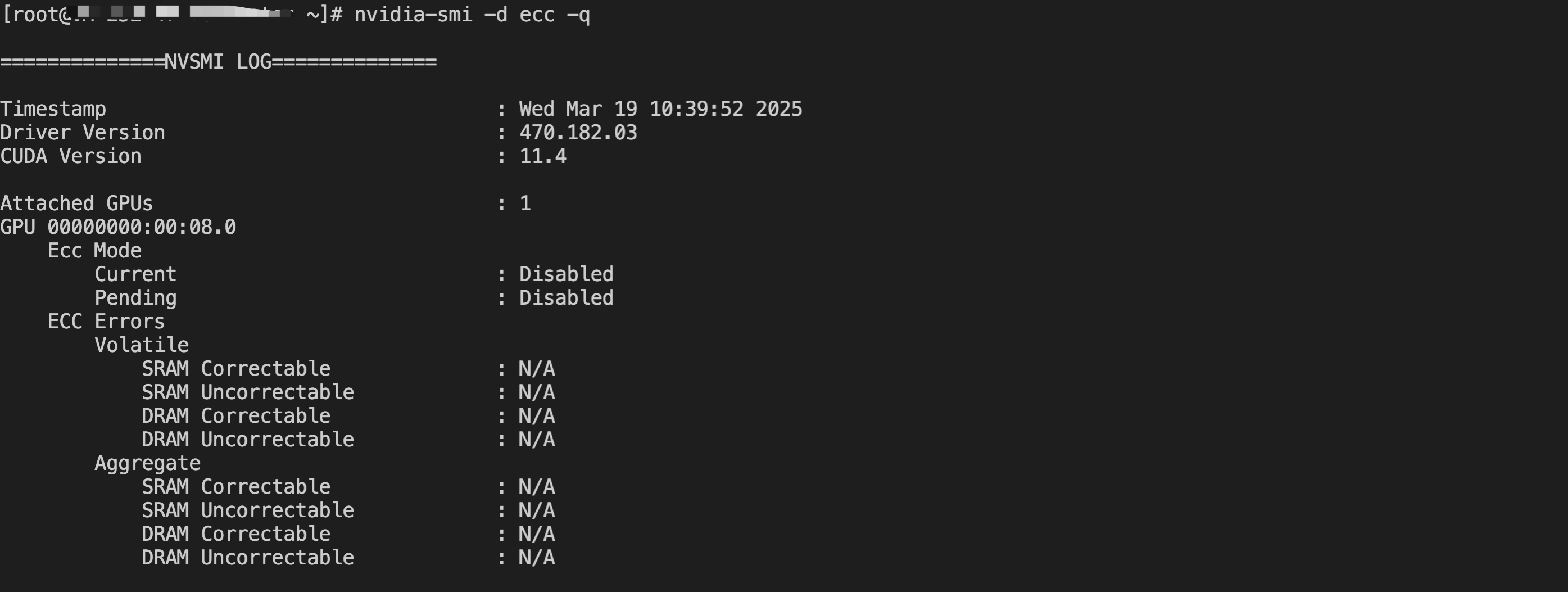

Disable ECC Mode

Warning: Although disabling ECC Mode may free up some GPU memory and improve performance, it could increase the risk of data errors and reduce system stability. Evaluate this operation carefully.

Execute the nvidia-smi -e 0 command to disable ECC Mode, then restart the server for the change to take effect.

Warning: Restarting the server may cause business interruption. Evaluate the impact of the restart on your business carefully.

    nvidia-smi -e 0
    reboot -f

After the restart is complete, log in to the server and execute the nvidia-smi -d ecc -q command to check the ECC Mode. You will see that it is now in the Disabled state.

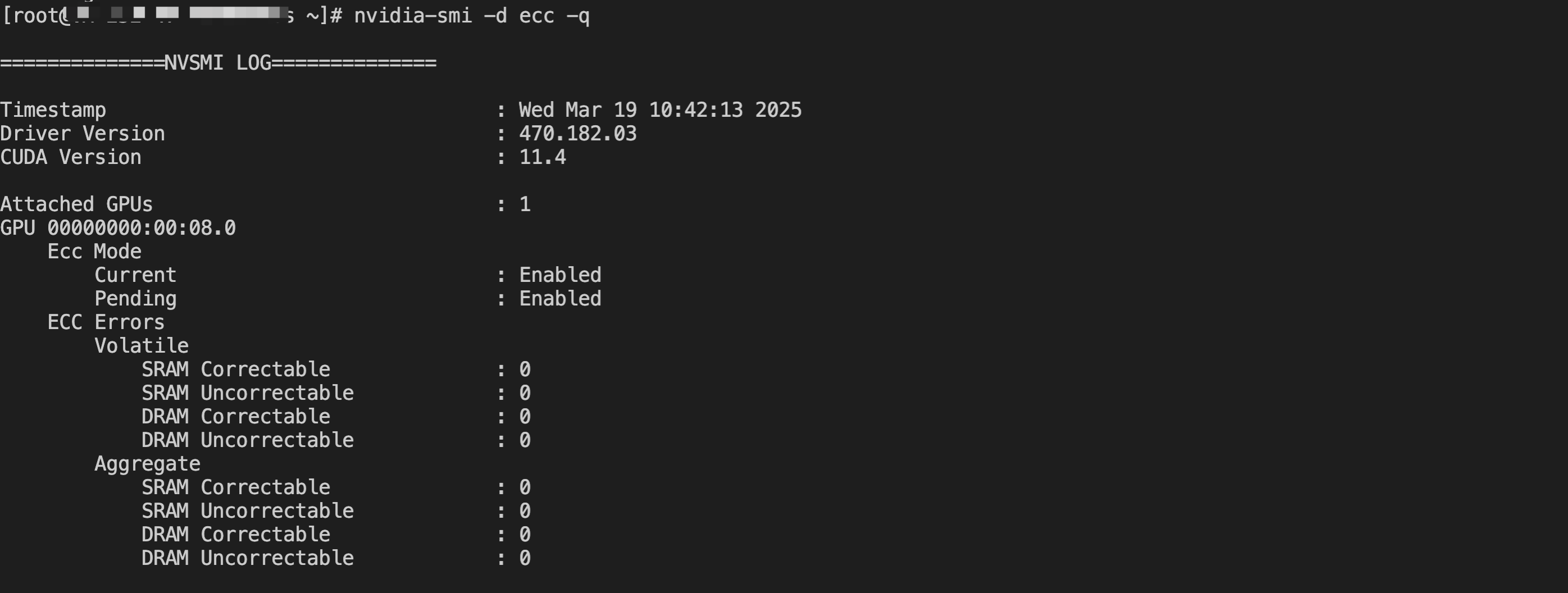

Enable ECC Mode

Warning: Adjusting ECC Mode requires a server restart to take effect. Restarting the server may cause business interruption. Carefully evaluate the impact of the restart on your business.

Execute the nvidia-smi -e 1 command to enable ECC Mode, then restart the server for the change to take effect. Refer to the following code.

    nvidia-smi -e 1
    reboot -f

After the restart is complete, execute the nvidia-smi -d ecc -q command to check the ECC Mode. You will see that it is now in the Enabled state.
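Because an ECC change only takes effect after a restart, the driver tracks both a current and a pending ECC mode for each GPU. The sketch below lists GPUs whose pending mode differs from the current one, which indicates that a restart is still outstanding. It uses the ecc.mode.current and ecc.mode.pending query fields of nvidia-smi; the helper function name is hypothetical.

```shell
#!/bin/sh
# Read "index, current, pending" CSV lines on stdin and print the index
# of every GPU whose pending ECC mode differs from its current mode.
gpus_pending_ecc_change() {
    awk -F', *' '$2 != $3 {print $1}'
}

if command -v nvidia-smi >/dev/null 2>&1; then
    pending=$(nvidia-smi --query-gpu=index,ecc.mode.current,ecc.mode.pending \
        --format=csv,noheader | gpus_pending_ecc_change)
    if [ -n "$pending" ]; then
        echo "Restart required for the ECC change on GPU(s):" $pending
    else
        echo "No ECC mode change is pending."
    fi
fi
```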

Why Does Disabling/Enabling ECC Mode on GPU Not Take Effect?

Adjusting ECC Mode by executing the nvidia-smi -e 0/1 command and restarting the server may fail. For NVIDIA Tesla drivers version 535 and above, Legacy Persistence Mode is poorly supported, so enabling persistence mode with the nvidia-smi -pm 1 command may cause ECC Mode adjustments to fail. It is recommended to disable persistence mode using the nvidia-smi -pm 0 command, then enable persistence mode with the nvidia-persistenced --persistence-mode command before adjusting ECC Mode.

Additionally, the failure could be caused by the driver itself. In that case, reinstall the driver or switch to a different driver version before adjusting ECC Mode.
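The recommended order of operations can be written down as a short sequence. The sketch below only prints the commands rather than executing them, so that the restart is never triggered unintentionally; the function name is hypothetical, and each printed command should be run as root once reviewed.

```shell
#!/bin/sh
# Print the command sequence for changing ECC Mode with persistence mode
# handled through nvidia-persistenced. $1: 0 to disable ECC, 1 to enable.
ecc_change_commands() {
    case "$1" in
        0|1) ;;
        *) echo "usage: ecc_change_commands 0|1" >&2; return 1 ;;
    esac
    echo "nvidia-smi -pm 0"                        # turn off legacy persistence mode
    echo "nvidia-persistenced --persistence-mode"  # start the persistence daemon instead
    echo "nvidia-smi -e $1"                        # request the ECC change
    echo "reboot"                                  # the change applies after restart
}

ecc_change_commands 0
```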

How to View the BusID of a GPU Server

Please refer to NVIDIA Driver Installation Guide to install the driver. After logging in to the server, execute the nvidia-smi command in the Windows Command Prompt or Linux Shell to view the GPU BusID. As shown in the figure below, the BusID is the part marked in red: 00:08.0.
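On Linux, the BusID can also be listed per GPU with a query. The sketch below uses the pci.bus_id query field of nvidia-smi, which returns the ID with a leading PCI domain (for example 00000000:00:08.0); the hypothetical helper short_bus_id strips the domain to give the short form shown above.

```shell
#!/bin/sh
# Strip the leading PCI domain: 00000000:00:08.0 -> 00:08.0
short_bus_id() {
    echo "${1#*:}"
}

if command -v nvidia-smi >/dev/null 2>&1; then
    nvidia-smi --query-gpu=index,pci.bus_id --format=csv,noheader |
    while IFS=', ' read -r idx bus; do
        echo "GPU $idx: $(short_bus_id "$bus")"
    done
fi
```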
