COS

Last updated: 2022-06-07 14:54:27

This document is currently invalid. Please refer to the documentation page of the product.

Overview

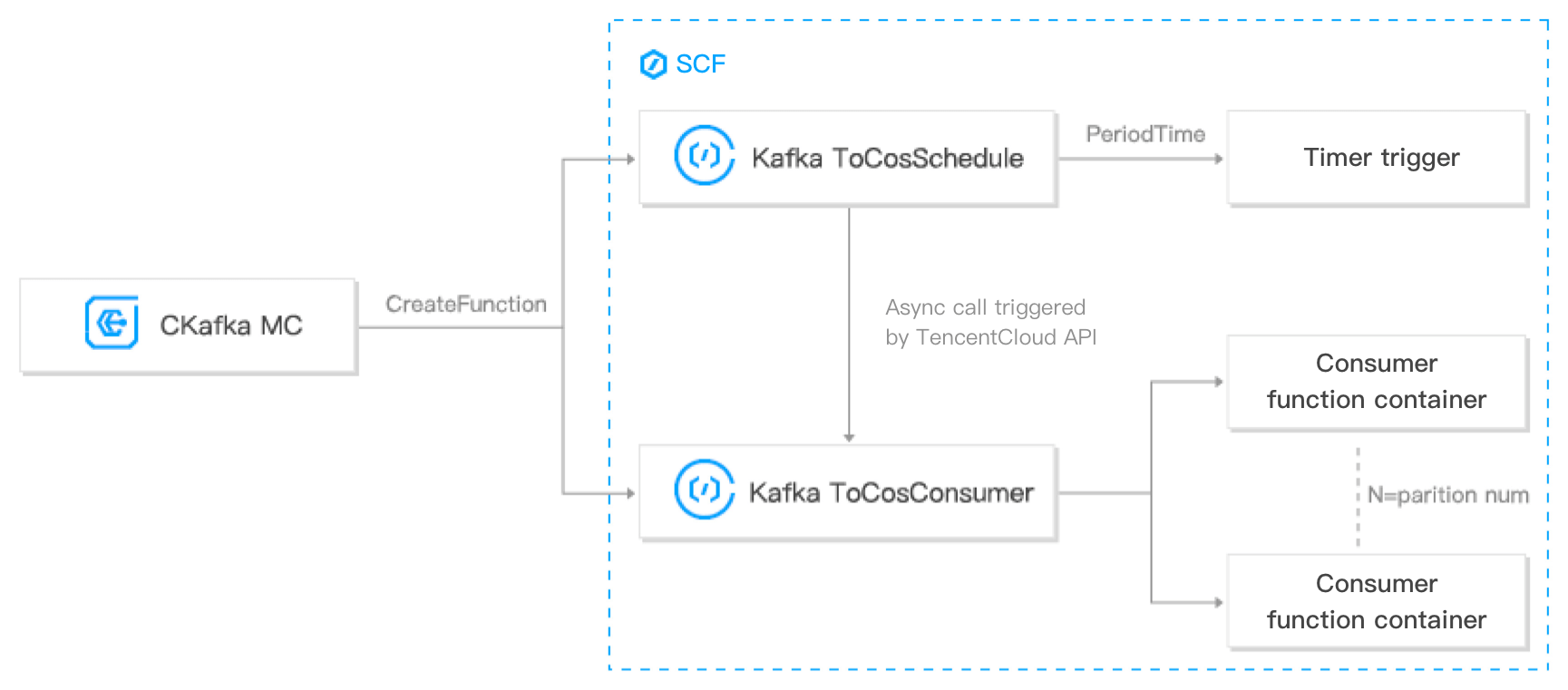

DataHub offers data distribution capabilities. You can distribute CKafka data to COS for data analysis and download. The process and architecture are as shown below:

Prerequisites

Currently, this feature relies on the SCF and COS services, which should be activated first.

Directions

Creating task

- Log in to the CKafka console.

- Click Data Distribution on the left sidebar, select the region, and click Create Task.

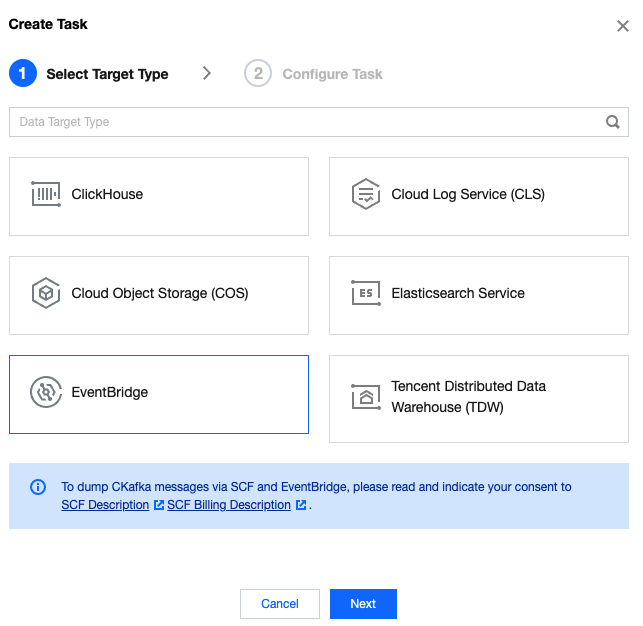

- Select EventBridge as the Target Type and click Next.

Note: Before using SCF and EventBridge to process data, you need to read and agree to SCF Description and Billing Overview.

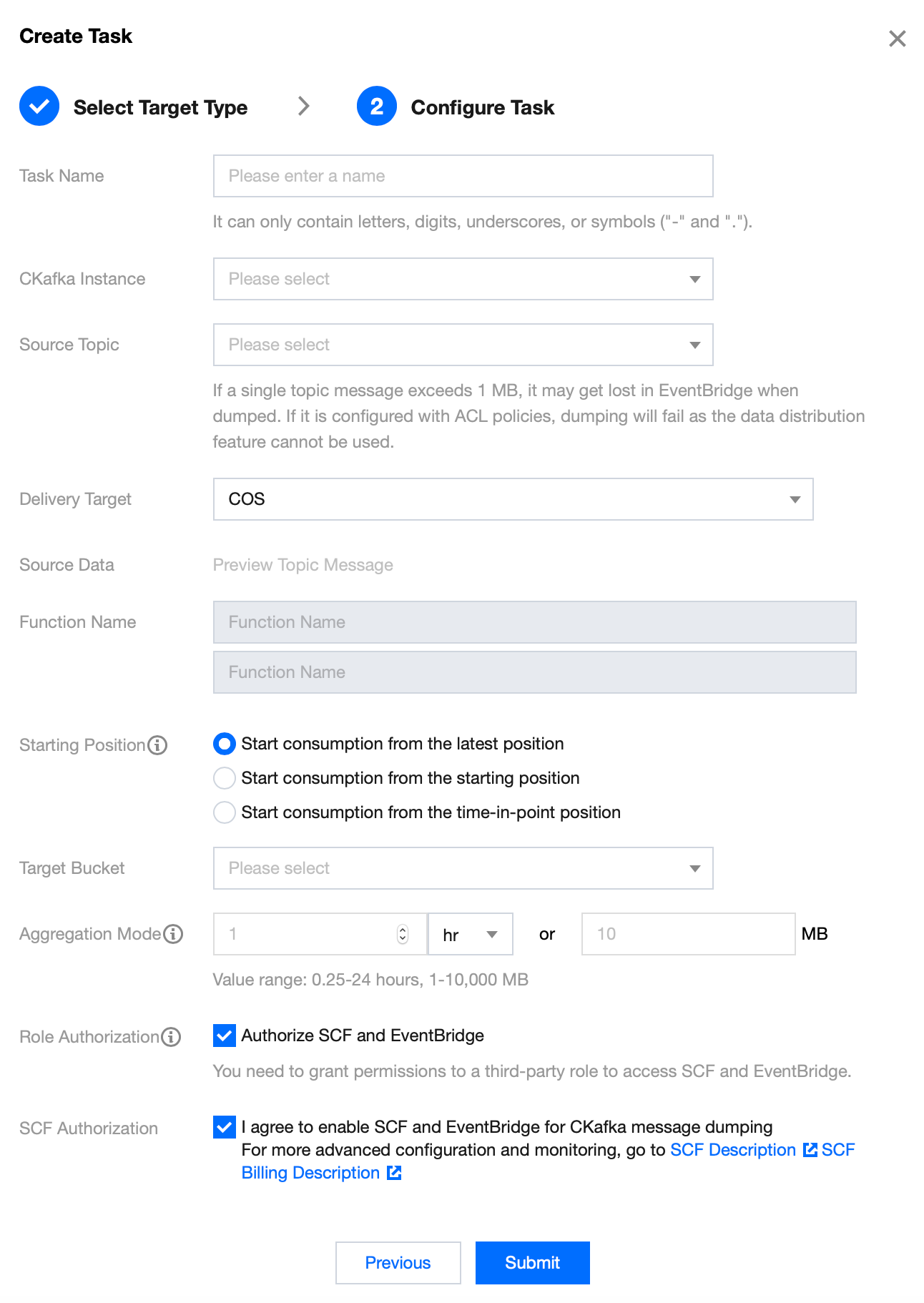

- On the Configure Task page, enter the task details.

- Task Name: It can contain only letters, digits, underscores, hyphens ("-"), and periods (".").

- CKafka Instance: Select the source CKafka instance.

- Source Topic: Select the source topic.

- Delivery Target: Select COS.

- Starting Position: Select the topic offset from which to start dumping historical messages.

- Target Bucket: Select a COS bucket for the specific topic. A file path named `instance-id/topic-id/date/timestamp` is automatically created in the bucket for message storage. If this path does not meet your business needs, modify it under `CkafkaToCosConsumer` after the task is created.

- Aggregation Mode: Select either or both of the aggregation modes for the files to be aggregated to the COS bucket. For example, if you specify that files are aggregated once every hour and once every 1 GB, files will be aggregated whenever either condition is met.

- Role Authorization: You need to grant permissions to a third-party role to access SCF and EventBridge.

- SCF Authorization: Confirm that you consent to activating SCF and EventBridge and to creating a function. After the function is created, go to the function settings to set advanced configuration items and view monitoring information.

- Click Submit.
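The Target Bucket path and the Aggregation Mode rule described in the task settings can be illustrated with a minimal Python sketch. It is not the code that runs inside the generated function. The names `object_key` and `should_flush` are hypothetical, and the date format (`YYYY-MM-DD`) and timestamp unit (epoch seconds) are assumptions, since this page names only the four path components.

```python
from datetime import datetime, timezone
from typing import Optional

HOUR_SECONDS = 3600      # "once every hour" from the example above
ONE_GB = 1024 ** 3       # "once every 1 GB", assumed to mean 2**30 bytes


def object_key(instance_id: str, topic_id: str, now: datetime) -> str:
    """Build a key shaped like instance-id/topic-id/date/timestamp.

    Assumption: date is YYYY-MM-DD and timestamp is epoch seconds.
    """
    date_part = now.strftime("%Y-%m-%d")
    timestamp_part = str(int(now.timestamp()))
    return f"{instance_id}/{topic_id}/{date_part}/{timestamp_part}"


def should_flush(elapsed_seconds: float,
                 buffered_bytes: int,
                 max_seconds: Optional[float] = HOUR_SECONDS,
                 max_bytes: Optional[int] = ONE_GB) -> bool:
    """Return True as soon as either enabled aggregation condition is met.

    Passing None for a limit models leaving that aggregation mode unselected.
    """
    time_hit = max_seconds is not None and elapsed_seconds >= max_seconds
    size_hit = max_bytes is not None and buffered_bytes >= max_bytes
    return time_hit or size_hit


if __name__ == "__main__":
    # Hypothetical instance and topic IDs, for illustration only.
    print(object_key("ckafka-example", "topic-example", datetime.now(timezone.utc)))
    print(should_flush(elapsed_seconds=120, buffered_bytes=ONE_GB))
```

With both modes selected, a buffer that reaches 1 GB after two minutes is flushed immediately rather than waiting for the hour to elapse, which matches the "either condition" behavior described above.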

Viewing monitoring data

- Log in to the CKafka console.

- Click Data Distribution on the left sidebar and click the ID of the target task to enter its basic information page.

- At the top of the task details page, click Monitoring, select the resource to be viewed, and set the time range to view the corresponding monitoring data.

Restrictions and Billing

- The dump speed is subject to the limit of the peak bandwidth of the CKafka instance. If the consumption is too slow, check the peak bandwidth settings or increase the number of CKafka partitions.

- The dump speed is subject to the size of a single CKafka file. A file exceeding 500 MB in size will be automatically split for multipart upload.

- Currently, you can only store messages to a COS bucket in the same region as the CKafka instance. For latency considerations, cross-region storage is not supported.

- For message dump to COS, the file content consists of the values of CKafka messages serialized as UTF-8 strings. Binary data is not currently supported.

- The operating account that enables message dump to COS must have write permission to the target COS bucket.

- You must have at least one VPC before you can dump messages to COS. If you choose the classic network during creation, bind a VPC as instructed in Adding Routing Policy.

- This feature is provided based on the SCF service that offers a free tier. For more information on the fees for excessive usage, see the billing rules of SCF.

- The fees of the dump scheme depend on the number of CKafka partitions, which currently equals the number of concurrent functions. If you want to modify the number of concurrent functions, check the logic of the `schedule` function.
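Because message values are written to COS as UTF-8 strings and binary data is not supported, it can help to check on the producer side that a payload is valid UTF-8 before sending it to the source topic. The following is a minimal sketch of such a check. The helper name `is_utf8_text` is hypothetical and is not part of CKafka or COS.

```python
def is_utf8_text(value: bytes) -> bool:
    """Return True if the message value decodes cleanly as UTF-8."""
    try:
        value.decode("utf-8")
    except UnicodeDecodeError:
        return False
    return True


if __name__ == "__main__":
    # A JSON string encoded as UTF-8 passes the check.
    print(is_utf8_text('{"user": "张三"}'.encode("utf-8")))
    # Raw binary bytes that are not valid UTF-8 fail the check.
    print(is_utf8_text(b"\xff\xfe\x00\x01"))
```

Payloads that fail the check would need a text-safe encoding (for example, Base64) before being produced, if they are intended for a topic that is dumped to COS.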
