Migrating Native HDFS Data to Tencent Cloud CHDFS

Preparations

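1. On the Tencent Cloud official website, create a CHDFS file system and a CHDFS mount point, and configure the permission information.

2. Access the created CHDFS from a CVM instance in the Tencent Cloud VPC environment. For details, see Creating CHDFS.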

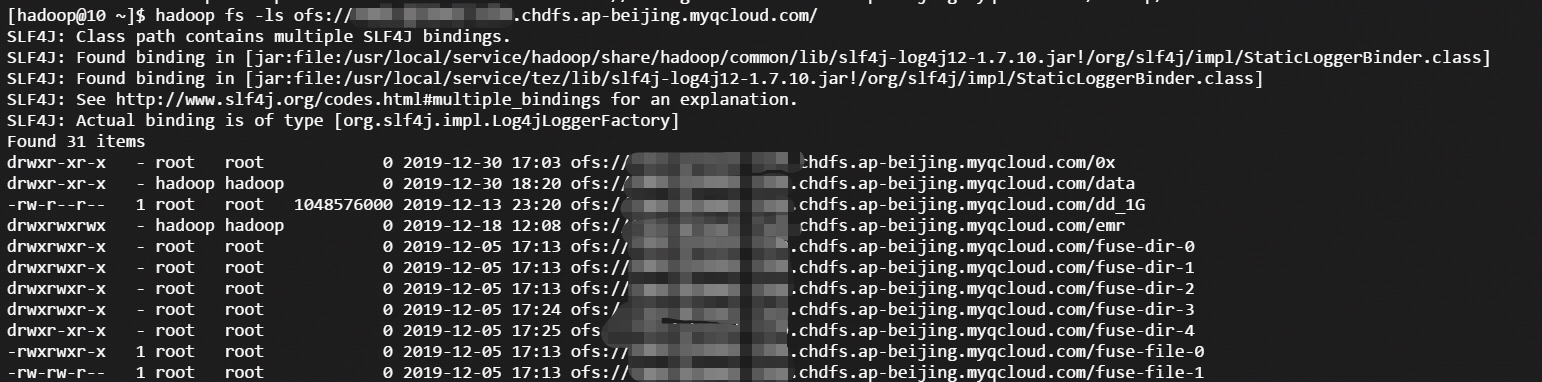

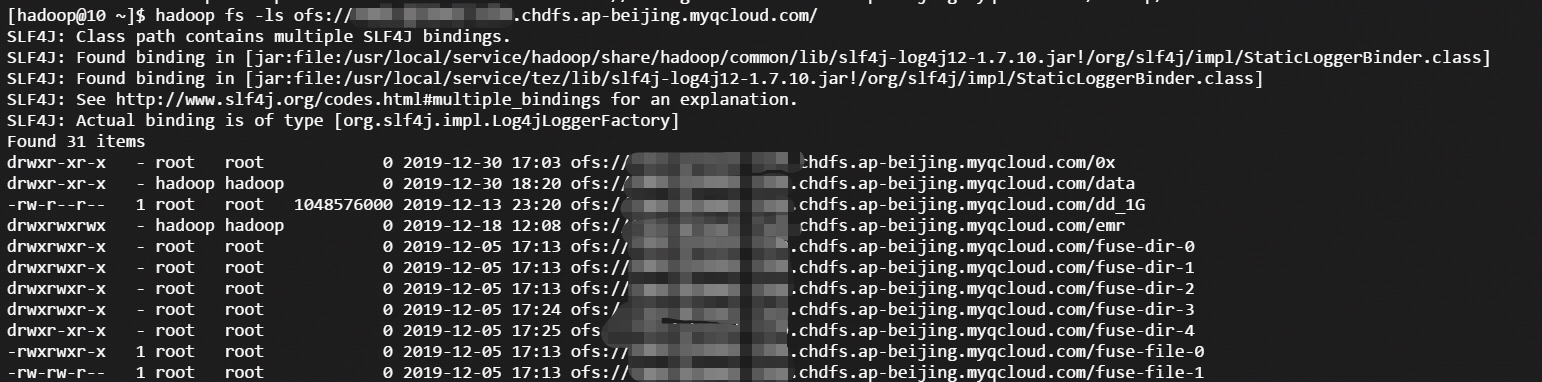

3. After the mount succeeds, open the hadoop command-line tool and run the following command to verify that CHDFS works properly.

hadoop fs -ls ofs://f4xxxxxxxxxxxxxxx.chdfs.ap-beijing.myqcloud.com/

If the command lists the root directory of the file system without reporting an error, CHDFS is working properly.
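The check above can also be scripted. The following is a minimal sketch, assuming the `hadoop` client is on the PATH and already configured for CHDFS; the function name `check_chdfs` is hypothetical and not part of any CHDFS tooling. It relies only on the exit status of `hadoop fs -ls`, which is zero on success and non-zero on error.

```shell
# Hypothetical helper (not part of CHDFS): returns 0 if the root of the
# CHDFS mount point can be listed, 1 otherwise.
# $1 is the mount point domain name; replace it with your own.
check_chdfs() {
    mount_point="$1"
    if hadoop fs -ls "ofs://${mount_point}/" >/dev/null 2>&1; then
        echo "CHDFS reachable: ofs://${mount_point}/"
        return 0
    else
        echo "CHDFS check failed: ofs://${mount_point}/" >&2
        return 1
    fi
}
```

For example, `check_chdfs f4xxxxxxxxxxxxxxx.chdfs.ap-beijing.myqcloud.com` could be run before starting a migration, with the placeholder replaced by the real mount point domain name.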

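Migration

Notes

The hadoop distcp tool provides some parameters that are not compatible with CHDFS. If you specify any of the parameters listed in the following table, they do not take effect.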

| Parameter | Description | Status |
| --- | --- | --- |
| -p[rbax] | r: replication, b: block-size, a: ACL, x: XATTR | Does not take effect |
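To catch these sub-options before a job is submitted, a wrapper script could inspect the `-p` option first. The sketch below is only an illustration: the function name `warn_ignored_preserve_flags` is hypothetical, and it checks only the letters written explicitly after `-p`, using the list from the table above.

```shell
# Hypothetical helper: warn about -p sub-options that CHDFS ignores.
# $1 is the preserve option as it would be passed to distcp, e.g. "-prbugp".
warn_ignored_preserve_flags() {
    opt="$1"
    case "$opt" in
        -p?*) flags="${opt#-p}" ;;   # keep only the letters after "-p"
        *) return 0 ;;               # not a -p option with explicit letters
    esac
    # r, b, a and x are the sub-options listed as not taking effect on CHDFS.
    for f in r b a x; do
        case "$flags" in
            *"$f"*) echo "warning: -p sub-option '$f' does not take effect on CHDFS" ;;
        esac
    done
}
```

Calling `warn_ignored_preserve_flags -prbugp` prints one warning for `r` and one for `b`, while `warn_ignored_preserve_flags -pugp` prints nothing.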

Example

1. Once CHDFS is ready, run the following hadoop command to migrate the data.

hadoop distcp hdfs://10.0.1.11:4007/testcp ofs://f4xxxxxxxx-xxxx.chdfs.ap-beijing.myqcloud.com/

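Here, f4xxxxxxxx-xxxx.chdfs.ap-beijing.myqcloud.com is the mount point domain name; replace it with the information of the mount point you actually applied for.

2. After the hadoop command finishes, the log prints the details of this migration, as in the following example: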

2019-12-31 10:59:31 [INFO ] [main:13300] [org.apache.hadoop.mapreduce.Job:] [Job.java:1385] Counters: 38
    File System Counters
        FILE: Number of bytes read=0
        FILE: Number of bytes written=387932
        FILE: Number of read operations=0
        FILE: Number of large read operations=0
        FILE: Number of write operations=0
        HDFS: Number of bytes read=1380
        HDFS: Number of bytes written=74
        HDFS: Number of read operations=21
        HDFS: Number of large read operations=0
        HDFS: Number of write operations=6
        OFS: Number of bytes read=0
        OFS: Number of bytes written=0
        OFS: Number of read operations=0
        OFS: Number of large read operations=0
        OFS: Number of write operations=0
    Job Counters
        Launched map tasks=3
        Other local map tasks=3
        Total time spent by all maps in occupied slots (ms)=419904
        Total time spent by all reduces in occupied slots (ms)=0
        Total time spent by all map tasks (ms)=6561
        Total vcore-milliseconds taken by all map tasks=6561
        Total megabyte-milliseconds taken by all map tasks=6718464
    Map-Reduce Framework
        Map input records=3
        Map output records=2
        Input split bytes=408
        Spilled Records=0
        Failed Shuffles=0
        Merged Map outputs=0
        GC time elapsed (ms)=179
        CPU time spent (ms)=4830
        Physical memory (bytes) snapshot=1051619328
        Virtual memory (bytes) snapshot=12525191168
        Total committed heap usage (bytes)=1383071744
    File Input Format Counters
        Bytes Read=972
    File Output Format Counters
        Bytes Written=74
    org.apache.hadoop.tools.mapred.CopyMapper$Counter
        BYTESSKIPPED=5
        COPY=1
        SKIP=2
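When the job log is saved to a file, the copy and skip counters can be pulled out for a quick check. This is a rough sketch, assuming the log contains one counter per line in the form NAME=VALUE, as Hadoop normally prints them; the function name `distcp_counter` and the log file name used below are hypothetical.

```shell
# Hypothetical helper: print the value of one DistCp counter from a saved job log.
# $1 is the counter name (for example COPY or SKIP), $2 is the log file path.
# Assumes each counter sits on its own line in the form NAME=VALUE.
distcp_counter() {
    awk -F= -v name="$1" '
        { key = $1; gsub(/^[ \t]+|[ \t]+$/, "", key) }   # strip indentation
        key == name { print $2; exit }                   # exact name match only
    ' "$2"
}
```

With the counters from the example log stored in a file such as `distcp.log`, `distcp_counter COPY distcp.log` would print 1 and `distcp_counter SKIP distcp.log` would print 2. Because the name is matched exactly, `SKIP` does not pick up `BYTESSKIPPED`.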
