Differences Between Push and Pull

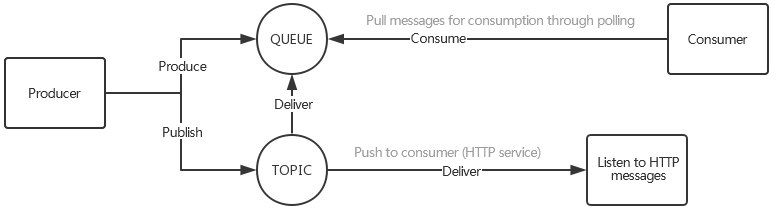

Push model: when a message sent by the Producer arrives, the server immediately delivers it to the Consumer.

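Pull model: when the server receives a message, it does nothing except wait for the Consumer to come and read it. In other words, the Consumer performs an explicit "pull" action.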
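To make the difference in who initiates delivery concrete, here is a minimal in-memory sketch. `PushBroker`, `PullBroker`, `deliver`, and `pull(max_count)` are hypothetical names chosen for illustration and are not CMQ APIs. In the push broker, the server calls the consumer as soon as a message is published. In the pull broker, the message just sits on the server until the consumer asks for it.

```python
from collections import deque
from typing import Callable, Deque, List


class PushBroker:
    """Push model: the server delivers each message the moment it arrives."""

    def __init__(self, deliver: Callable[[str], None]) -> None:
        self.deliver = deliver  # hypothetical consumer callback

    def publish(self, msg: str) -> None:
        self.deliver(msg)  # the server initiates delivery immediately


class PullBroker:
    """Pull model: the server stores messages and waits to be asked."""

    def __init__(self) -> None:
        self.queue: Deque[str] = deque()

    def publish(self, msg: str) -> None:
        self.queue.append(msg)  # nothing is sent; the message just waits

    def pull(self, max_count: int = 1) -> List[str]:
        # The consumer initiates the read and decides how much to take.
        batch: List[str] = []
        while self.queue and len(batch) < max_count:
            batch.append(self.queue.popleft())
        return batch
```

With `PushBroker(received.append)`, `publish("m1")` appends to `received` right away. With `PullBroker`, published messages stay queued until the consumer calls `pull`.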

Scenario 1: The Producer is faster than the Consumer

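A Producer can outpace a Consumer for two reasons:

- The Producer is inherently faster than the Consumer. For example, the Consumer's message-handling logic may be complex, or it may involve disk or network I/O.
- The Consumer has failed, so for a period it cannot consume messages or can consume them only slowly.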

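With Push, the server cannot know the Consumer's current state, so it keeps pushing as long as data is being produced. In either case above, this can overload the Consumer further or even crash it. An example is a producer such as Flume aggressively collecting logs while the consumer, HDFS + Hadoop, cannot process them fast enough. This can be avoided only if the Consumer has a suitable feedback mechanism that tells the server about its status.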
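One common form of such feedback is credit-based flow control: the consumer tells the server how many more messages it can accept, and the server pushes only that many. The toy model below assumes this scheme. `CreditPushBroker`, `grant`, and the `inbox` list that stands in for the consumer are hypothetical names and are not part of CMQ.

```python
from collections import deque
from typing import Deque, List


class CreditPushBroker:
    """Push with a feedback channel (hypothetical credit-based flow control).

    The consumer grants credits (how many more messages it can take). The
    broker pushes only while credits remain and keeps the rest server-side.
    """

    def __init__(self) -> None:
        self.pending: Deque[str] = deque()  # messages held on the server
        self.credits = 0                    # capacity advertised by the consumer
        self.inbox: List[str] = []          # stands in for the consumer's buffer

    def publish(self, msg: str) -> None:
        self.pending.append(msg)
        self._drain()

    def grant(self, n: int) -> None:
        """Consumer feedback: 'I can accept n more messages.'"""
        self.credits += n
        self._drain()

    def _drain(self) -> None:
        # Push only as many messages as the consumer has said it can handle.
        while self.pending and self.credits > 0:
            self.inbox.append(self.pending.popleft())
            self.credits -= 1
```

Suppose the consumer grants 2 credits and the producer then publishes 5 messages. Only 2 reach the consumer, and the other 3 wait on the server until the consumer grants more.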

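With Pull, the problem is much simpler. The Consumer fetches data from the server on its own initiative, so it only needs to lower its request rate. For example, a front end such as a Flume log-collection service continuously produces messages to CMQ, and CMQ delivers them to back-end services such as data analysis, which may run more slowly than the producer.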
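The following toy discrete-time simulation shows why a slow pull consumer stays safe. Each tick, the producer enqueues messages on the server, and the consumer pulls only as many as it can handle. The function name and parameters are hypothetical, and the fixed per-tick rates are a simplifying assumption.

```python
from collections import deque
from typing import Deque, Tuple


def simulate(ticks: int, produce_per_tick: int,
             consume_per_tick: int) -> Tuple[int, int, int]:
    """Fast producer vs. slow pull consumer (illustrative only).

    Returns (server_backlog, total_consumed, largest_batch_the_consumer_saw).
    """
    server: Deque[Tuple[int, int]] = deque()
    consumed = 0
    max_batch = 0
    for tick in range(ticks):
        for i in range(produce_per_tick):
            server.append((tick, i))  # the producer never waits for the consumer
        # The consumer asks only for what it can process this tick.
        n = min(consume_per_tick, len(server))
        batch = [server.popleft() for _ in range(n)]
        max_batch = max(max_batch, len(batch))
        consumed += len(batch)
    return len(server), consumed, max_batch
```

Take `simulate(10, 5, 2)`: the producer enqueues 5 messages per tick and the consumer takes 2. The excess backlog accumulates on the server, and the consumer never receives more than 2 messages per tick.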

Scenario 2: Real-time delivery is a priority

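With Push, the server can forward a message to the consumer as soon as it arrives, so real-time performance is very good. With Pull, the consumer has to manage its polling interval to avoid putting pressure on the server: when there is little data, continuous polling is pointless. A longer interval, however, inevitably adds delay, because a message that arrives just after a poll waits until the next one.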
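The added delay can be estimated with a short calculation. It assumes the consumer polls every T seconds, each poll completes instantly, and a message arrives at a time s uniformly distributed over [0, T) after the previous poll. These are simplifying assumptions, not CMQ behavior.

```latex
% W: time a message waits on the server before the next poll picks it up
W = T - s, \qquad
\mathbb{E}[W] = \frac{1}{T}\int_{0}^{T} (T - s)\,ds = \frac{T}{2}, \qquad
\max W = T
```

With T = 10 s, a message waits about 5 s on average and up to 10 s in the worst case. Push adds none of this waiting. Halving T halves the expected delay but doubles the request rate (1/T polls per second), which is exactly the trade-off described above.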

Scenario 3: Long polling for Pull

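The problem with the Pull model is that the consumer holds the initiative but cannot know exactly when new messages will be available to pull. If a pull returns messages, the consumer can pull again right away. If it returns nothing, the consumer must wait for a while before pulling again.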

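That wait time is hard to choose. You may try various algorithms that adjust the pull interval dynamically and still run into problems, because the consumer has no control over when messages arrive. For example, 3,000 messages might arrive within one minute, followed by several minutes or even hours with no new messages.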
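Exponential backoff is one widely used heuristic for adjusting the interval. The consumer waits briefly after a non-empty pull and doubles the wait after each empty pull, up to a cap. The sketch below is an illustrative assumption and does not describe CMQ's algorithm. The defaults `min_interval=0.1` and `max_interval=30.0` seconds are arbitrary.

```python
def next_interval(current: float, got_messages: bool,
                  min_interval: float = 0.1, max_interval: float = 30.0) -> float:
    """Hypothetical exponential-backoff rule for the next pull interval (seconds).

    A non-empty pull resets the interval to min_interval. Each empty pull
    doubles it, capped at max_interval.
    """
    if got_messages:
        return min_interval
    return min(current * 2, max_interval)
```

This also shows the weakness the text describes. After a long quiet period, the interval has grown to the 30 s cap, so the first message of a sudden burst can sit on the server for up to 30 s before the consumer notices it.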

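CMQ offers a long-polling optimization that balances the drawbacks of the Pull and Push models. The basic idea is that when a consumer's pull finds no messages, the request does not return immediately. Instead, the connection is held open and waits, and when a new message arrives the server wakes the connection and returns the latest message.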
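The in-process sketch below illustrates long polling with a condition variable. It is not CMQ's implementation. An empty `pull` parks the caller for up to `wait_seconds`, and `publish` wakes it as soon as a message arrives. `LongPollQueue` and its method names are hypothetical. The timeout is an added assumption so that a waiting pull cannot block forever.

```python
import threading
import time
from collections import deque
from typing import Deque, Optional


class LongPollQueue:
    """Minimal long-polling sketch built on threading.Condition."""

    def __init__(self) -> None:
        self._items: Deque[str] = deque()
        self._cond = threading.Condition()

    def publish(self, msg: str) -> None:
        with self._cond:
            self._items.append(msg)
            self._cond.notify()  # wake one parked pull, if any

    def pull(self, wait_seconds: float) -> Optional[str]:
        with self._cond:
            # Instead of returning "empty" at once, park the caller until a
            # message arrives or the timeout expires. wait_for re-checks the
            # predicate, so spurious wakeups are handled.
            ready = self._cond.wait_for(lambda: len(self._items) > 0,
                                        timeout=wait_seconds)
            return self._items.popleft() if ready else None


if __name__ == "__main__":
    q = LongPollQueue()
    # A producer publishes 0.2 s after the consumer starts waiting.
    threading.Timer(0.2, q.publish, args=("hello",)).start()
    start = time.monotonic()
    msg = q.pull(wait_seconds=5.0)  # returns shortly after publish, not after 5 s
    print(msg, f"after {time.monotonic() - start:.1f}s")
    print(q.pull(wait_seconds=0.1))  # None: nothing arrived before the timeout
```

Because a parked pull is woken by `publish` rather than by the next poll, delivery latency is close to push. The consumer still decides when to ask and how much to take.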

Scenario 4: Some or all Consumers are offline

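In a messaging system, Producers and Consumers are fully decoupled. A Producer does not require any Consumer to be online when it sends a message, and likewise a Consumer does not require a Producer to be online. This is the main feature that distinguishes messaging from RPC. However, an offline Consumer raises many scenarios worth discussing.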

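First, when a Consumer occasionally crashes or goes offline, production can continue unaffected. Once the Consumer is back online, it resumes consuming where it left off, and no message data is lost. Problems arise if a Consumer stays down for a long time, or cannot restart at all because of a machine failure. The server must then decide whether to retain data for that Consumer, and for how long.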

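With Push, the server cannot predict whether a Consumer's crash or disconnection is brief or permanent. Suppose it keeps every message for that Consumer from the moment it went down. Then the data cannot be cleaned up even after all other Consumers have finished with it, and the queue keeps growing over time. This puts enormous pressure on the CMQ server whether the messages are held in memory or persisted to disk. (Push-based systems usually keep message queues in memory to ensure push performance and real-time delivery; this is discussed in detail later.) The pressure can even disrupt normal consumption by other Consumers, especially when messages are produced very quickly. If, on the other hand, the data is not retained, the Consumer will face data loss when it comes back up.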

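A compromise is for CMQ to set an expiration time on the data. If a Consumer stays down longer than this threshold, its data is cleaned up. However, this threshold is not easy to choose either.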
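The following hypothetical bookkeeping class models this compromise on the push side. Online consumers receive messages immediately. For each offline consumer, the server retains a separate backlog, and it discards that backlog once the consumer has been offline longer than `offline_ttl` seconds. `PushBacklog` and all method names are illustrative. Time is passed in as `now` so that the sketch is deterministic.

```python
from collections import deque
from typing import Deque, Dict, List


class PushBacklog:
    """Hypothetical push-side retention with an offline-consumer TTL."""

    def __init__(self, offline_ttl: float) -> None:
        self.offline_ttl = offline_ttl
        self.delivered: Dict[str, List[str]] = {}  # what each consumer has received
        self.backlogs: Dict[str, Deque[str]] = {}  # copies retained per consumer
        self.offline_since: Dict[str, float] = {}  # consumer -> time it went offline

    def subscribe(self, consumer: str) -> None:
        self.delivered.setdefault(consumer, [])
        self.backlogs.setdefault(consumer, deque())

    def mark_offline(self, consumer: str, now: float) -> None:
        self.offline_since[consumer] = now

    def mark_online(self, consumer: str) -> None:
        # On reconnect, push whatever was retained while the consumer was away.
        self.offline_since.pop(consumer, None)
        backlog = self.backlogs[consumer]
        self.delivered[consumer].extend(backlog)
        backlog.clear()

    def publish(self, msg: str) -> None:
        for consumer, backlog in self.backlogs.items():
            if consumer in self.offline_since:
                backlog.append(msg)  # held on the server until reconnect or expiry
            else:
                self.delivered[consumer].append(msg)  # pushed immediately

    def purge(self, now: float) -> None:
        # Drop the backlog of any consumer offline longer than the threshold.
        for consumer, since in self.offline_since.items():
            if now - since > self.offline_ttl:
                self.backlogs[consumer].clear()  # these messages are lost to it
```

Every offline consumer costs the server one retained copy of each new message, which is why the backlog grows quickly when production is fast. Choosing `offline_ttl` is the hard part: too short and a briefly restarted consumer loses data, too long and memory or disk usage grows.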

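The Pull model improves the situation. The server no longer tracks Consumer state and instead works on a "serve you when you come" basis. It makes no guarantee that a Consumer will consume data in time, although data is still subject to expiration cleanup.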
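For contrast, here is a pull-side sketch in which the server keeps no per-consumer state. Each message is stored with its enqueue time and expires after `retention` seconds, whether or not anyone has pulled it. `RetentionQueue` and `retention` are hypothetical names. The sketch assumes `publish` is called with non-decreasing `now` values.

```python
from collections import deque
from typing import Deque, List, Tuple


class RetentionQueue:
    """Pull-side sketch: no consumer tracking, only time-based expiry."""

    def __init__(self, retention: float) -> None:
        self.retention = retention
        self._items: Deque[Tuple[float, str]] = deque()  # (enqueue_time, message)

    def publish(self, msg: str, now: float) -> None:
        self._items.append((now, msg))

    def _expire(self, now: float) -> None:
        # Messages are appended in time order, so expired ones sit at the front.
        while self._items and now - self._items[0][0] > self.retention:
            self._items.popleft()

    def pull(self, max_count: int, now: float) -> List[str]:
        # "Serve you when you come": whoever pulls gets what has not yet expired.
        self._expire(now)
        n = min(max_count, len(self._items))
        return [self._items.popleft()[1] for _ in range(n)]
```

Unlike the push-side bookkeeping, memory here is bounded by the production rate times `retention`, no matter how many consumers exist or how long any of them stays offline.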
