Hue Practical Tutorial

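This document describes practical uses of Hue.

Hive SQL Queries

Hue's beeswax app provides a user-friendly interface for Hive queries: you can select a Hive database, write HQL statements, submit queries, and view the results.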

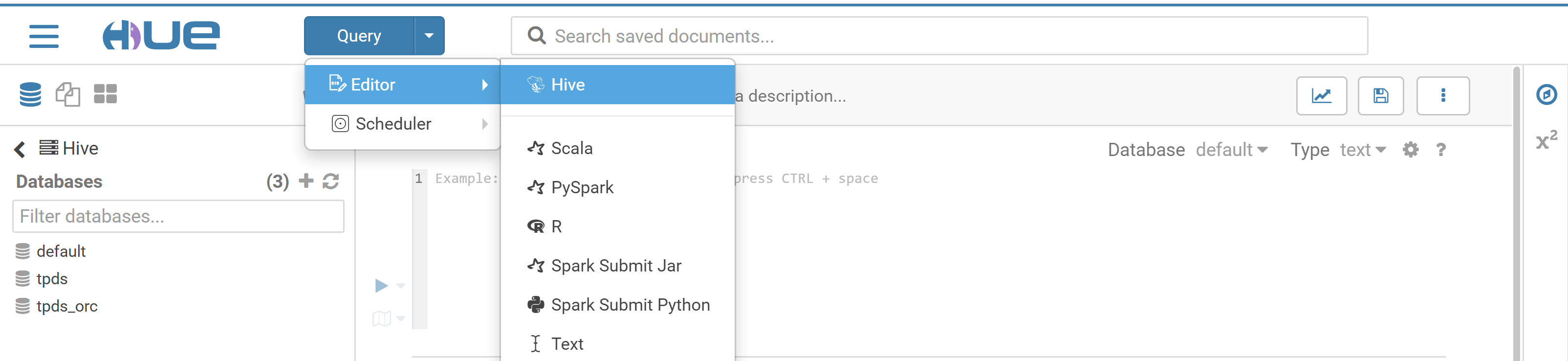

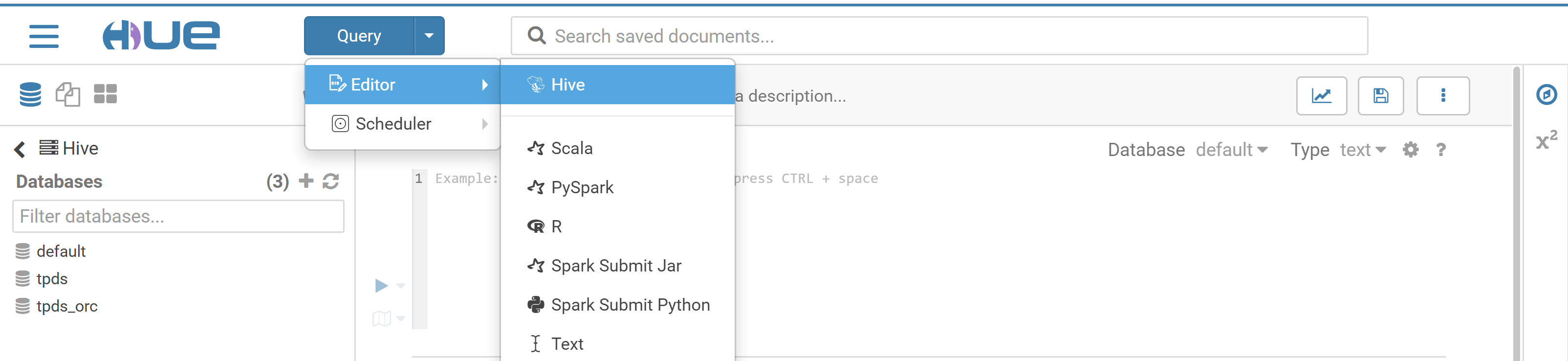

1. At the top of the Hue console, select Query > Editor > Hive.

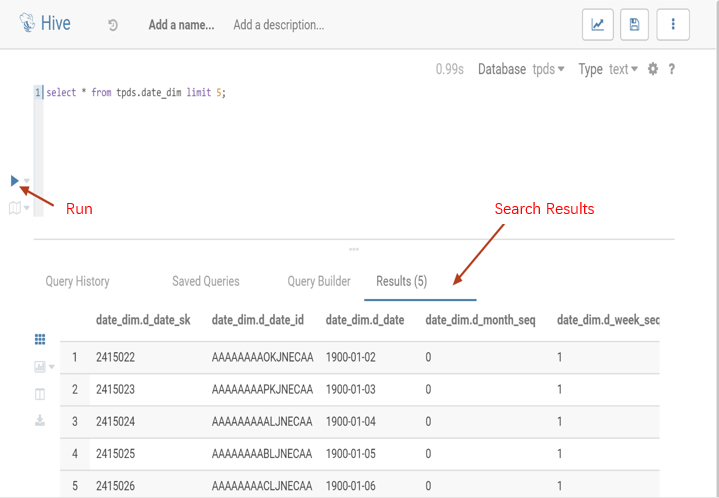

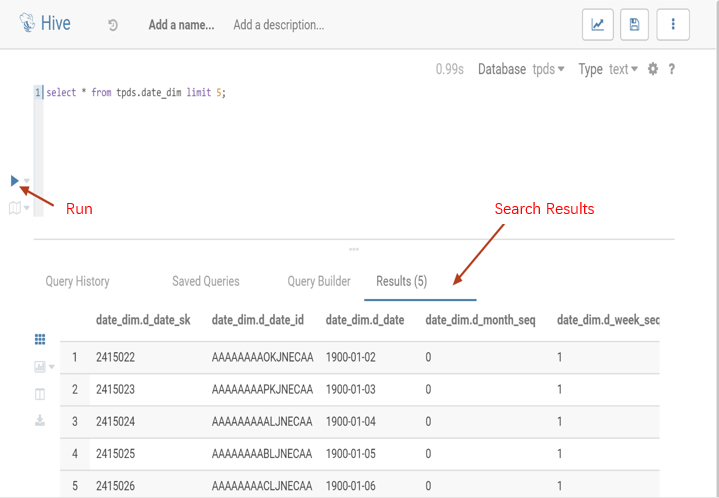

2. Enter the statement to run in the statement input box, then click Execute.

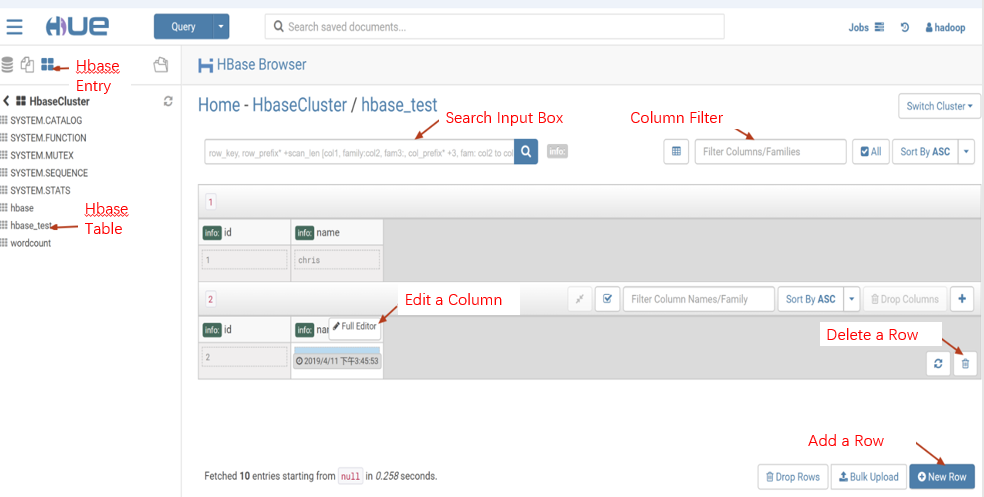

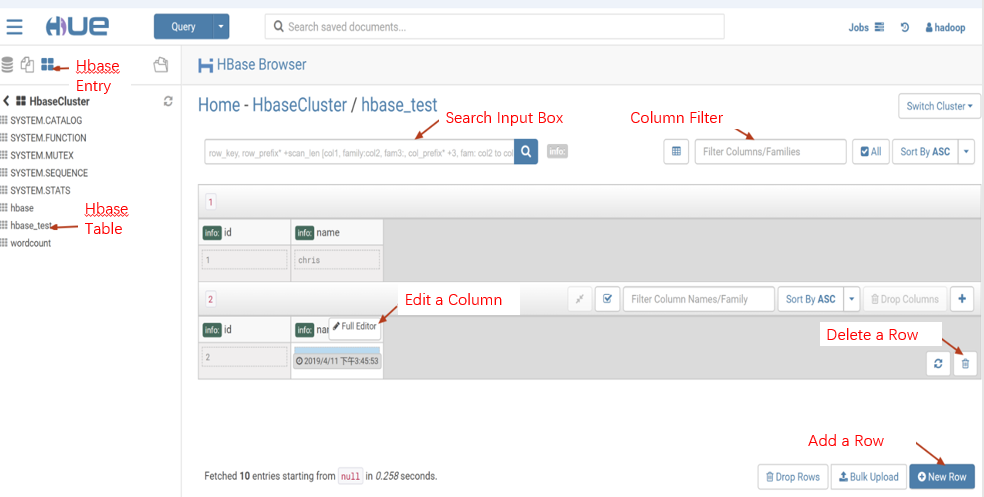

Querying, Modifying, and Displaying HBase Data

With HBase Browser, you can query, modify, and display the data in the tables of an HBase cluster.

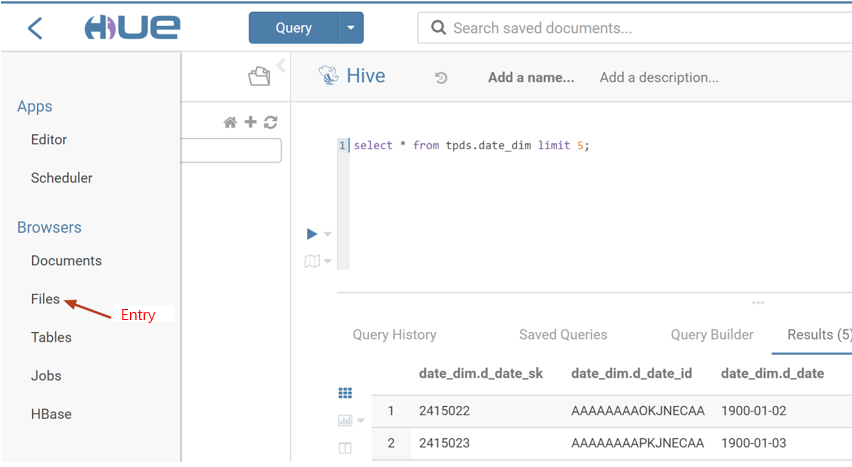

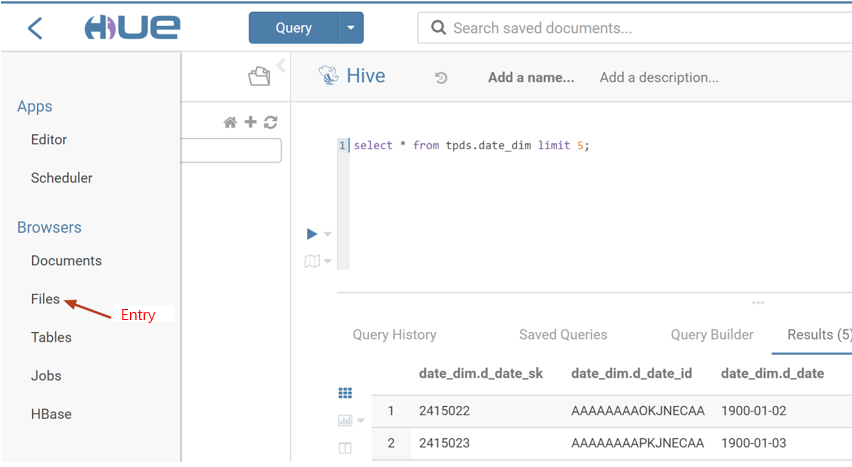

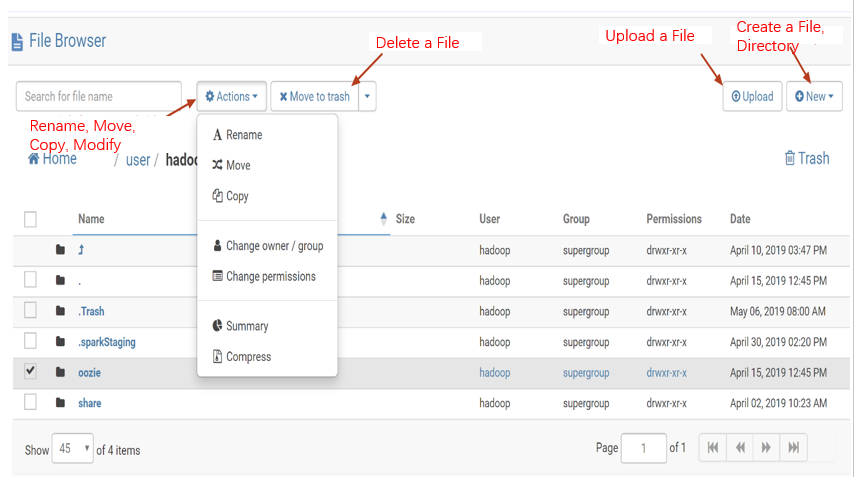

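Accessing HDFS and Browsing Files

Hue's web interface makes it easy to view the files and folders in HDFS and to create, download, upload, copy, modify, and delete them.

1. On the left side of the Hue console, select Browsers > Files to open the HDFS file browser.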

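Developing Oozie Jobs

1. Prepare the workflow data: job scheduling in Hue is based on workflows. First create a workflow that contains a Hive script. The content of the Hive script is as follows: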

    create database if not exists hive_sample;
    show databases;
    use hive_sample;
    show tables;
    create table if not exists hive_sample (a int, b string);
    show tables;
    insert into hive_sample select 1, "a";
    select * from hive_sample;

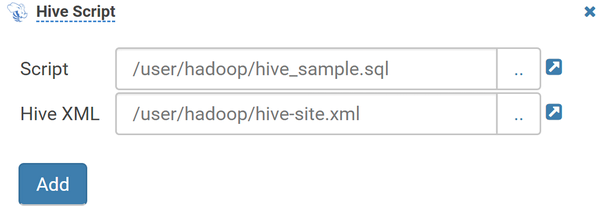

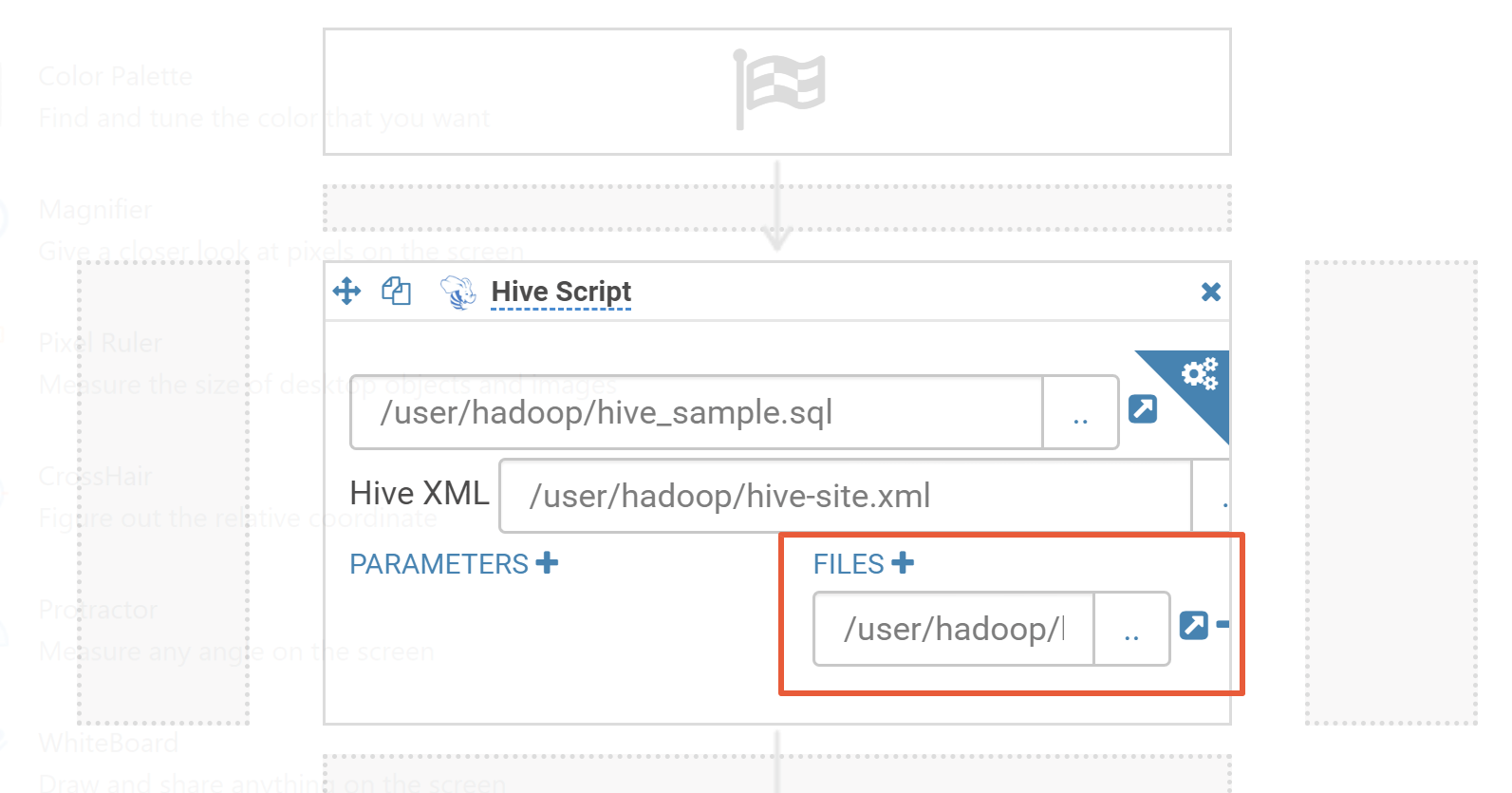

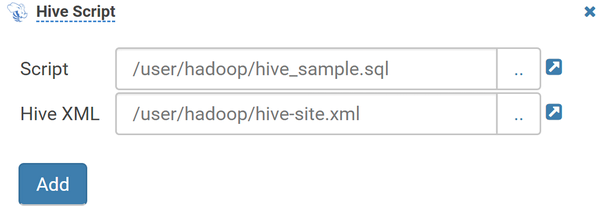

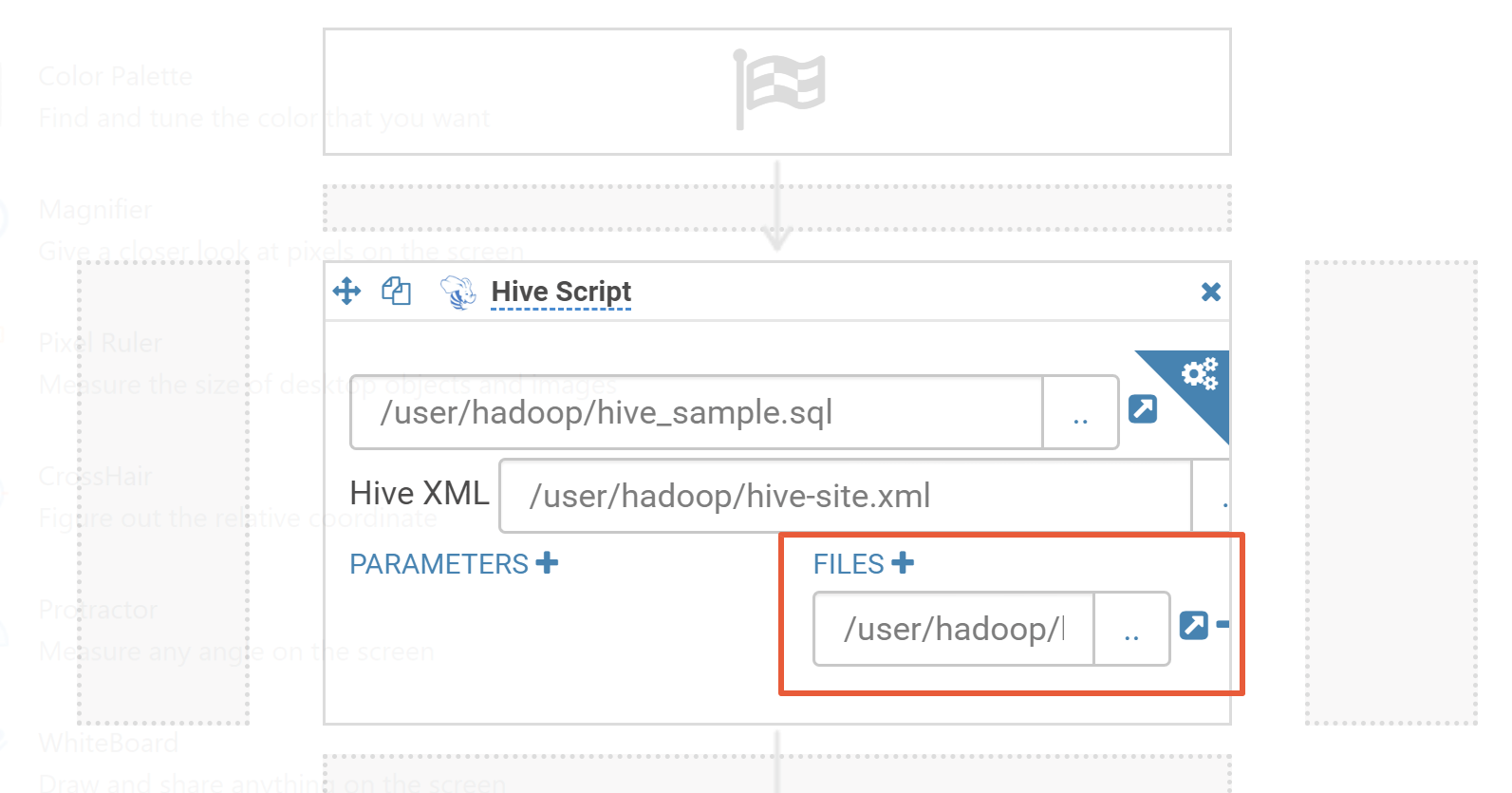

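Save the content above as a file named hive_sample.sql. The Hive workflow also needs a hive-site.xml configuration file, which can be found on any cluster node where the Hive component is installed, at /usr/local/service/hive/conf/hive-site.xml. Make a copy of hive-site.xml, then upload both the Hive script file and hive-site.xml to a directory in HDFS, for example /user/hadoop.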
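The preparation step above can also be scripted. The following is a minimal sketch, assuming Python 3 and that the `hdfs` command-line client is available on the node where you run it. The helper names `write_script` and `build_upload_commands` are hypothetical and are not part of Hue. The sketch writes the statements shown earlier to a script file and builds, without running them, the `hdfs dfs -put` commands for the two files.

```python
from pathlib import Path

# Statements from the tutorial's Hive script, one per entry.
HIVE_STATEMENTS = [
    "create database if not exists hive_sample",
    "show databases",
    "use hive_sample",
    "show tables",
    "create table if not exists hive_sample (a int, b string)",
    "show tables",
    'insert into hive_sample select 1, "a"',
    "select * from hive_sample",
]

# Paths named in the tutorial.
HIVE_SITE = "/usr/local/service/hive/conf/hive-site.xml"
HDFS_TARGET_DIR = "/user/hadoop"


def write_script(path):
    """Write the statements to `path`, one per line, each ending in ';'."""
    text = "".join(stmt + ";\n" for stmt in HIVE_STATEMENTS)
    Path(path).write_text(text, encoding="utf-8")
    return text


def build_upload_commands(script_path, hive_site=HIVE_SITE, target=HDFS_TARGET_DIR):
    """Return the argv lists that would upload both files to HDFS."""
    return [
        ["hdfs", "dfs", "-put", str(local), target]
        for local in (script_path, hive_site)
    ]
```

Calling `write_script("hive_sample.sql")` and then passing each list returned by `build_upload_commands("hive_sample.sql")` to `subprocess.run` would perform the upload. Uploading through the Hue file browser achieves the same result.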

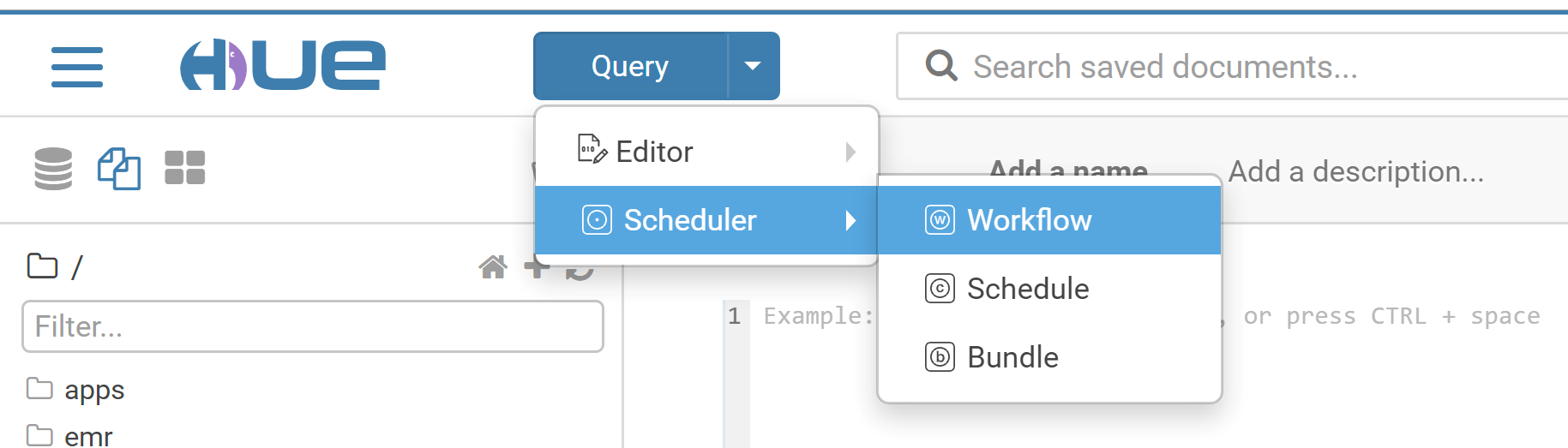

2. Create a workflow.

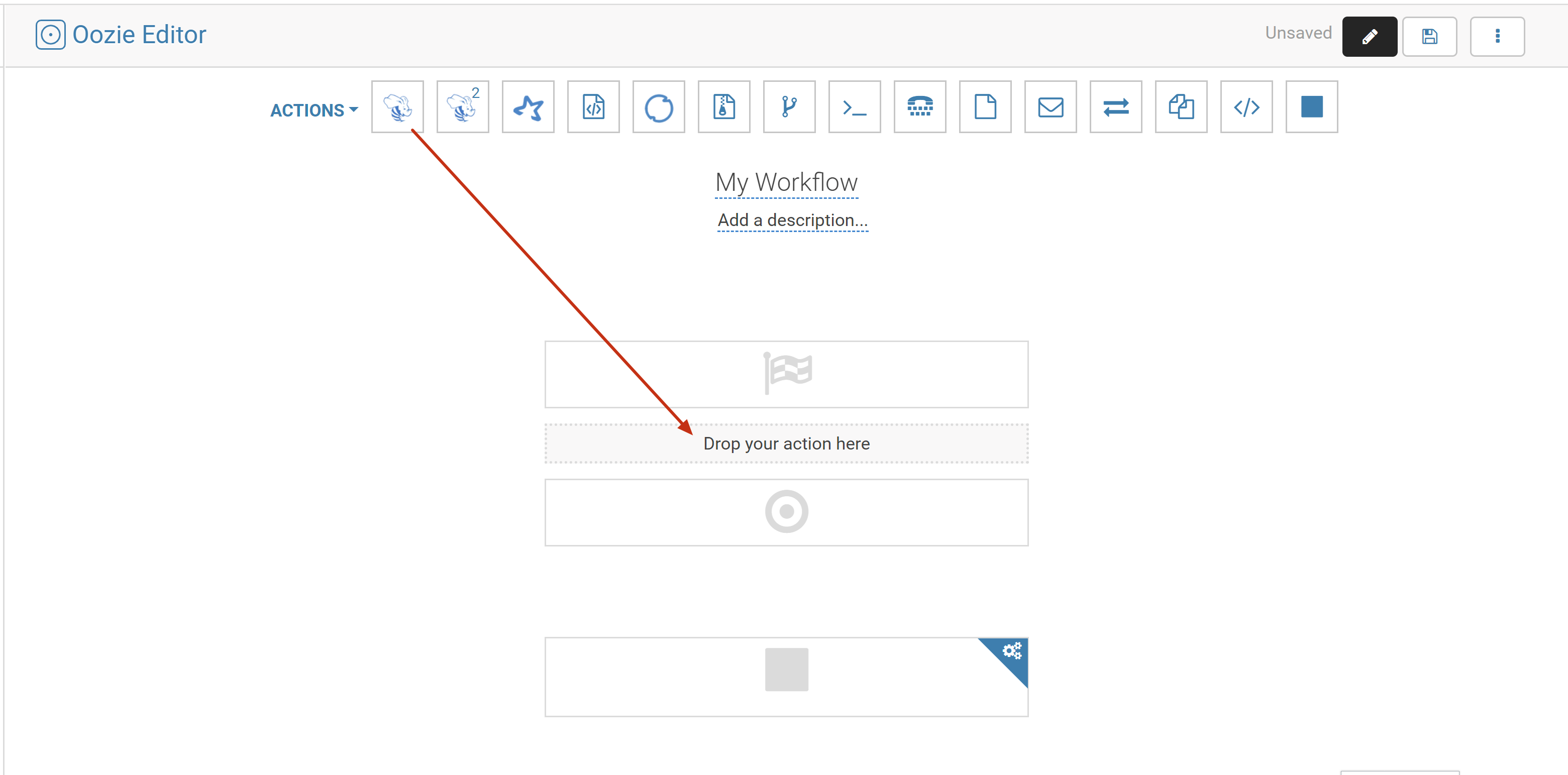

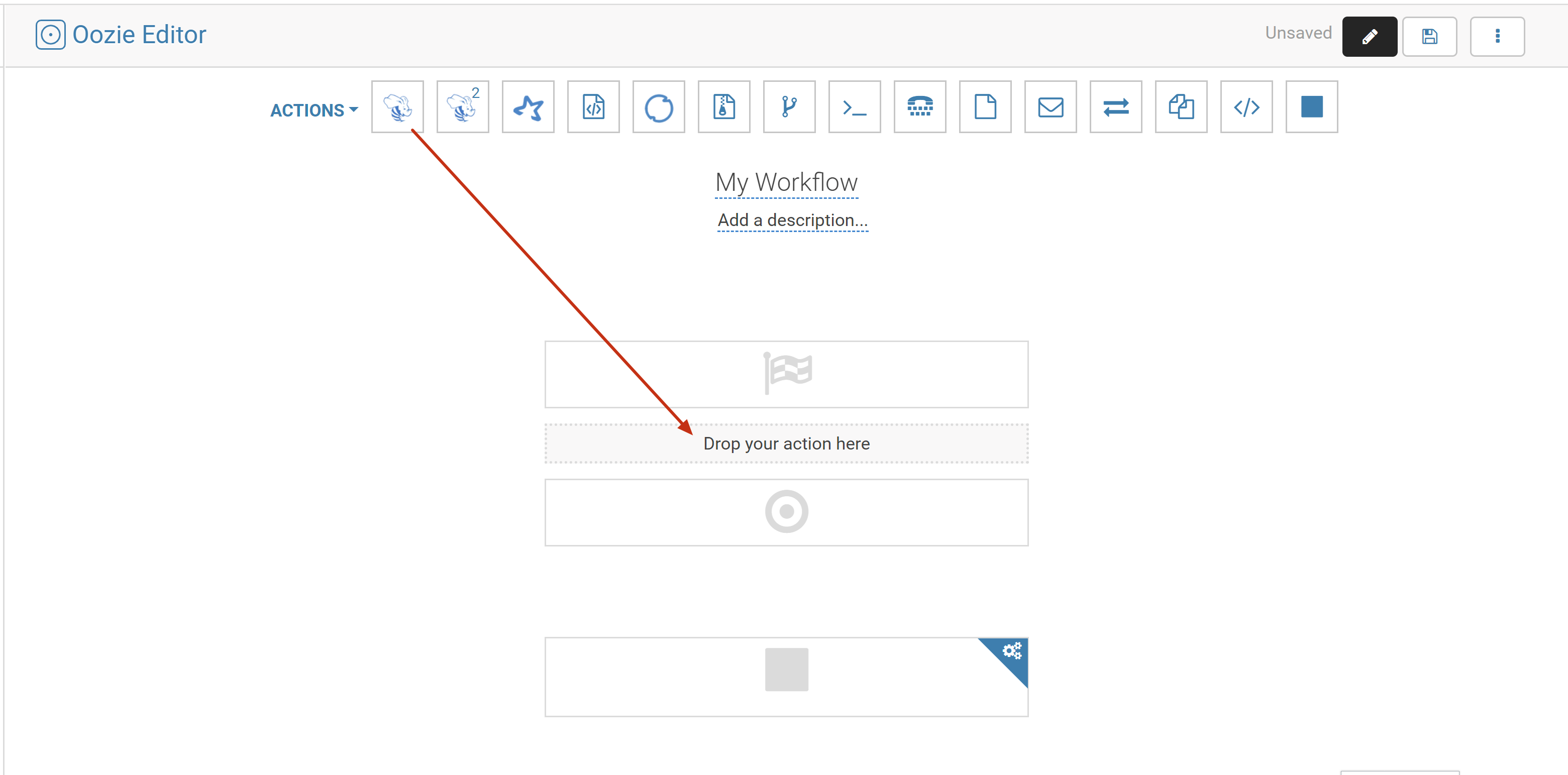

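2.1 Switch to the hadoop user. At the top of the Hue page, select Query > Scheduler > Workflow.

2.2 Drag a Hive Script action onto the workflow editing page.

Note

This document uses an installation of Hive version Hive1 as an example, with the configuration parameters set for HiveServer1. In a mixed deployment with other Hive versions (that is, when configuration parameters for another version are used), an error is reported.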
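For orientation, a workflow with a single Hive script action corresponds to an Oozie workflow definition in XML. Hue builds the definition for you in the editor, so the following is only an illustrative sketch and not the exact file Hue produces. The schema versions, the node names, and the helper `build_workflow_xml` are assumptions chosen for the example. The `${jobTracker}` and `${nameNode}` placeholders are left for Oozie to resolve from the job properties.

```python
import xml.etree.ElementTree as ET
from xml.sax.saxutils import escape

# Assumed schema versions; check which ones your Oozie release supports.
WORKFLOW_NS = "uri:oozie:workflow:0.5"
HIVE_ACTION_NS = "uri:oozie:hive-action:0.2"

# Doubled braces survive str.format as literal ${...} placeholders.
TEMPLATE = """<workflow-app xmlns="{wf_ns}" name="{name}">
  <start to="hive-script"/>
  <action name="hive-script">
    <hive xmlns="{hive_ns}">
      <job-tracker>${{jobTracker}}</job-tracker>
      <name-node>${{nameNode}}</name-node>
      <job-xml>{job_xml}</job-xml>
      <script>{script}</script>
    </hive>
    <ok to="end"/>
    <error to="kill"/>
  </action>
  <kill name="kill">
    <message>Hive script failed</message>
  </kill>
  <end name="end"/>
</workflow-app>
"""


def build_workflow_xml(name, script, job_xml):
    """Fill the template and verify that the result is well-formed XML."""
    xml_text = TEMPLATE.format(
        wf_ns=WORKFLOW_NS,
        hive_ns=HIVE_ACTION_NS,
        name=escape(name, {'"': "&quot;"}),
        script=escape(script),
        job_xml=escape(job_xml),
    )
    ET.fromstring(xml_text)  # raises ParseError if the XML is malformed
    return xml_text
```

With the files uploaded earlier, `build_workflow_xml("hive_sample_wf", "/user/hadoop/hive_sample.sql", "/user/hadoop/hive-site.xml")` returns a definition that points at both of them. The workflow name `hive_sample_wf` is made up for the example.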

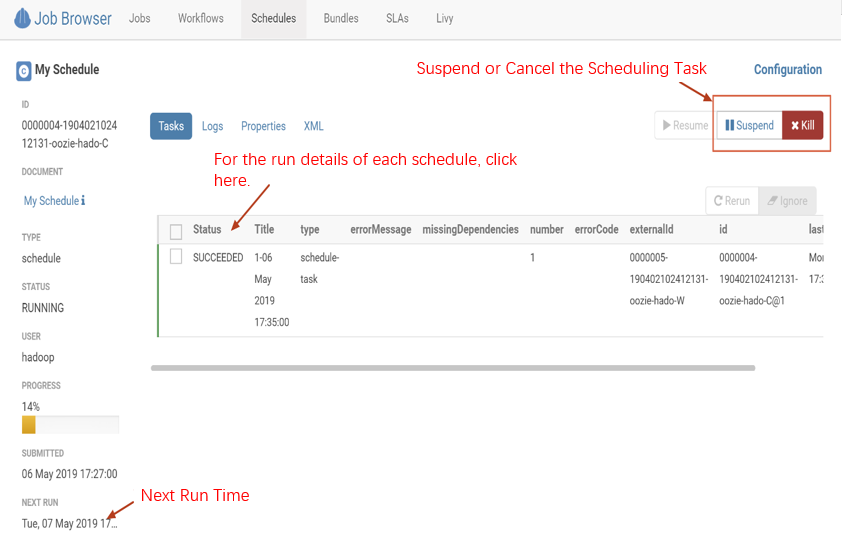

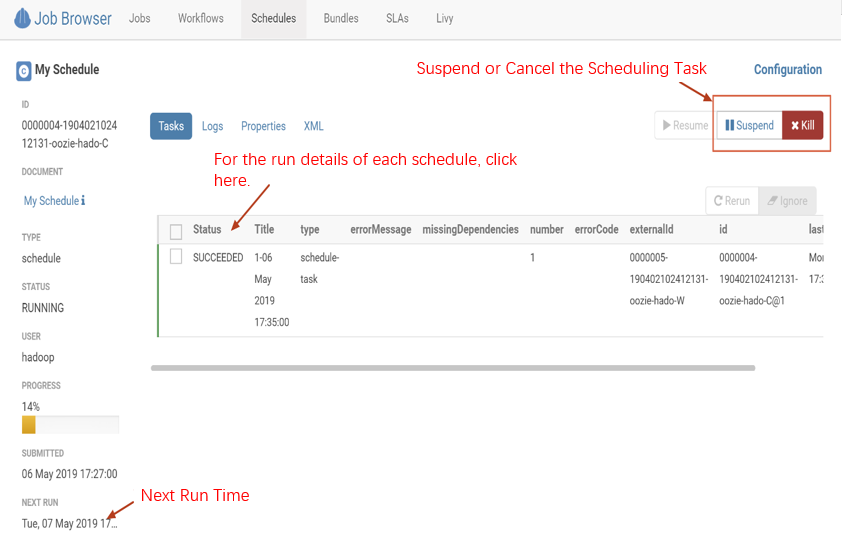

3. Create a scheduled job.

A scheduled job in Hue is called a schedule. It is similar to crontab on Linux and supports scheduling granularity down to the minute.

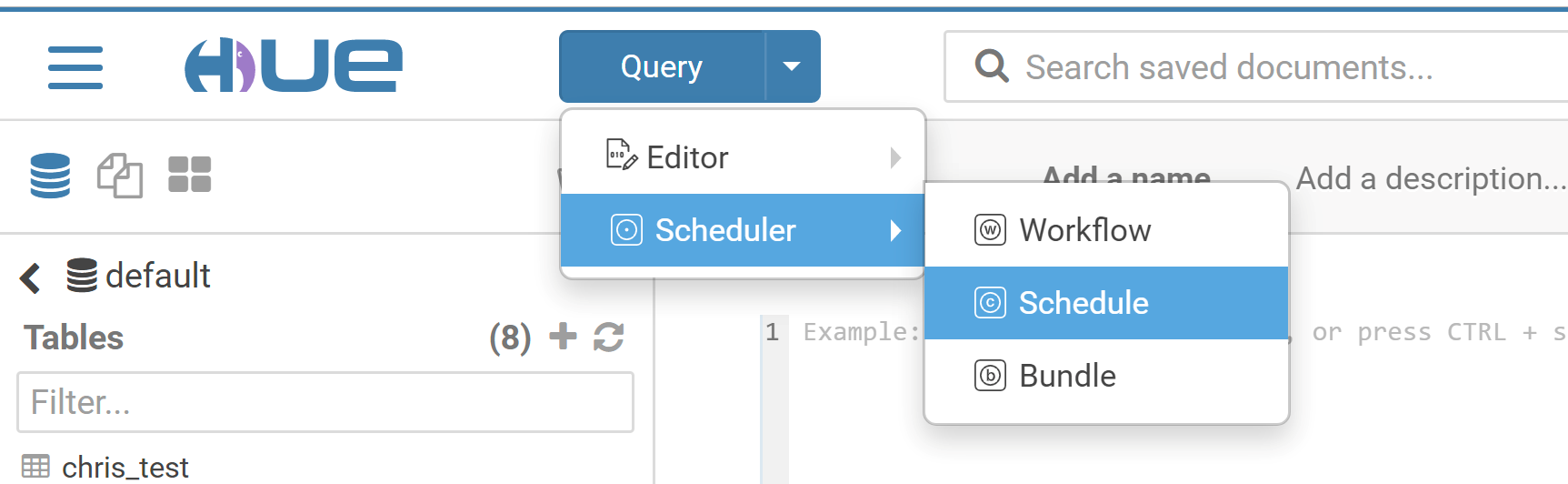

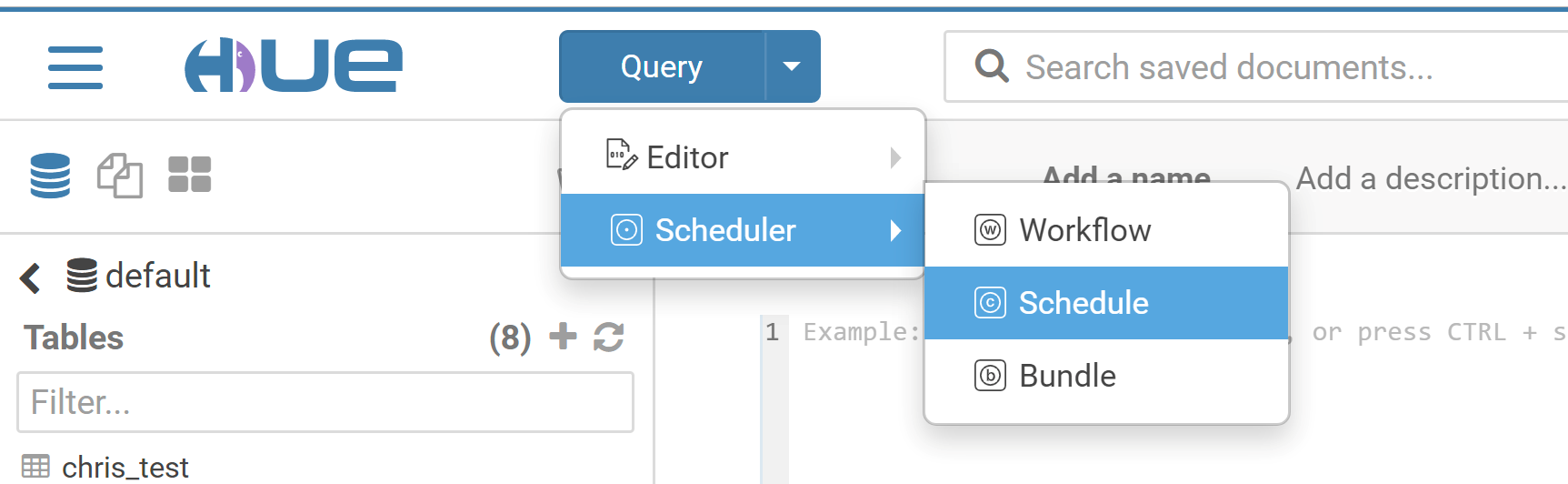

3.1 Select Query > Scheduler > Schedule to create a schedule.

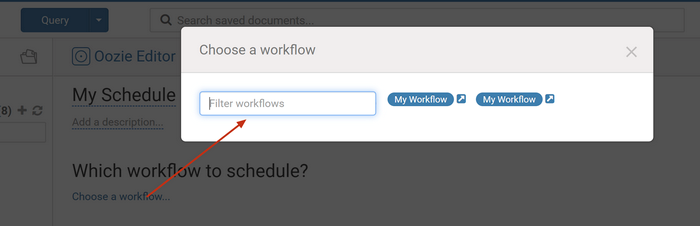

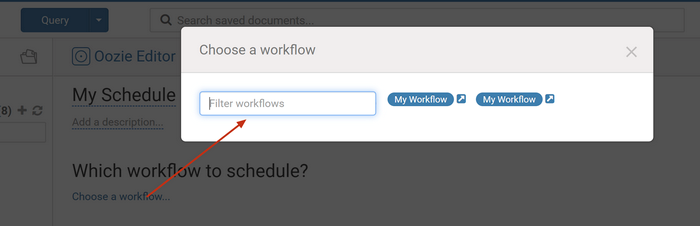

3.2 Click Choose a workflow and select a workflow that has already been created.

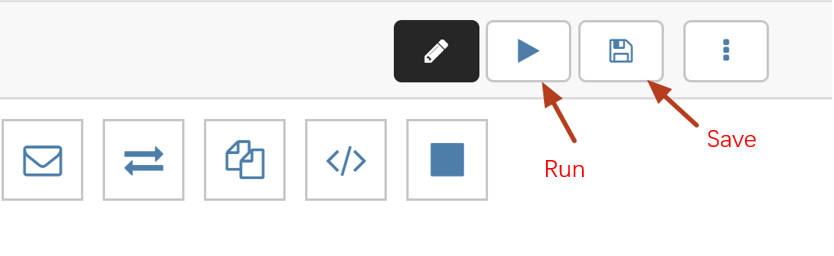

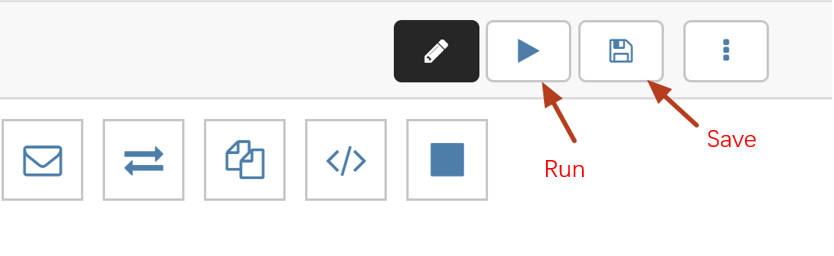

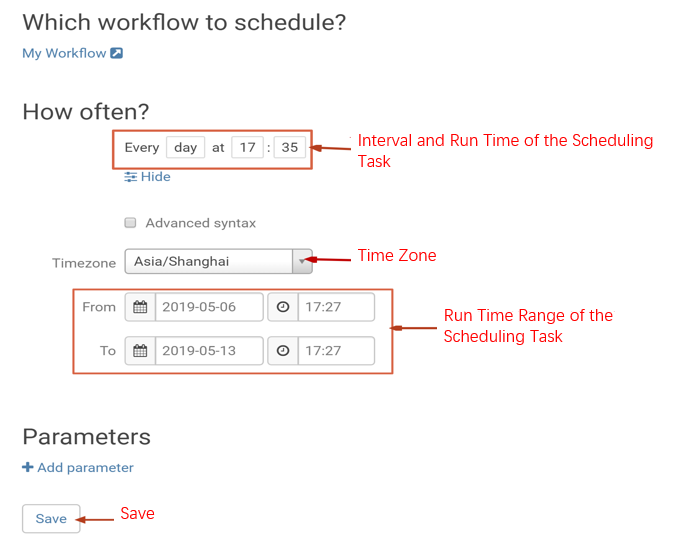

3.3 Select the time and interval to schedule, the time zone, and the start and end times of the scheduled job, then click Save.
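To get a feel for how a crontab-like schedule combines with a start and end time, the following minimal sketch lists the minutes at which a five-field crontab expression would fire inside a time window. It is a simplified illustration, not Hue's or Oozie's scheduler: the function names are hypothetical, only `*`, `*/n`, single values, and comma lists are supported, all five fields must match, and time zones are ignored. The cron syntax accepted by your Oozie version may differ from classic crontab.

```python
from datetime import datetime, timedelta


def _field_matches(field, value, low):
    """Match one cron field; `low` is the smallest legal value of the field."""
    for part in field.split(","):
        if part == "*":
            return True
        if part.startswith("*/"):
            # Steps are counted from the start of the field's range.
            if (value - low) % int(part[2:]) == 0:
                return True
        elif int(part) == value:
            return True
    return False


def cron_matches(expr, moment):
    """Return True if `moment` matches a five-field crontab expression."""
    minute, hour, day, month, weekday = expr.split()
    cron_weekday = (moment.weekday() + 1) % 7  # crontab counts Sunday as 0
    return (
        _field_matches(minute, moment.minute, 0)
        and _field_matches(hour, moment.hour, 0)
        and _field_matches(day, moment.day, 1)
        and _field_matches(month, moment.month, 1)
        and _field_matches(weekday, cron_weekday, 0)
    )


def fire_times(expr, start, end):
    """List every whole minute in [start, end) at which `expr` fires."""
    moment = start.replace(second=0, microsecond=0)
    if moment < start:
        moment += timedelta(minutes=1)
    times = []
    while moment < end:
        if cron_matches(expr, moment):
            times.append(moment)
        moment += timedelta(minutes=1)
    return times
```

For example, `fire_times("*/15 9 * * *", datetime(2024, 1, 1, 8, 50), datetime(2024, 1, 1, 10, 0))` yields the four runs at 09:00, 09:15, 09:30, and 09:45, which shows how the start and end times bound an otherwise repeating schedule.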

4. Submit the scheduled job.

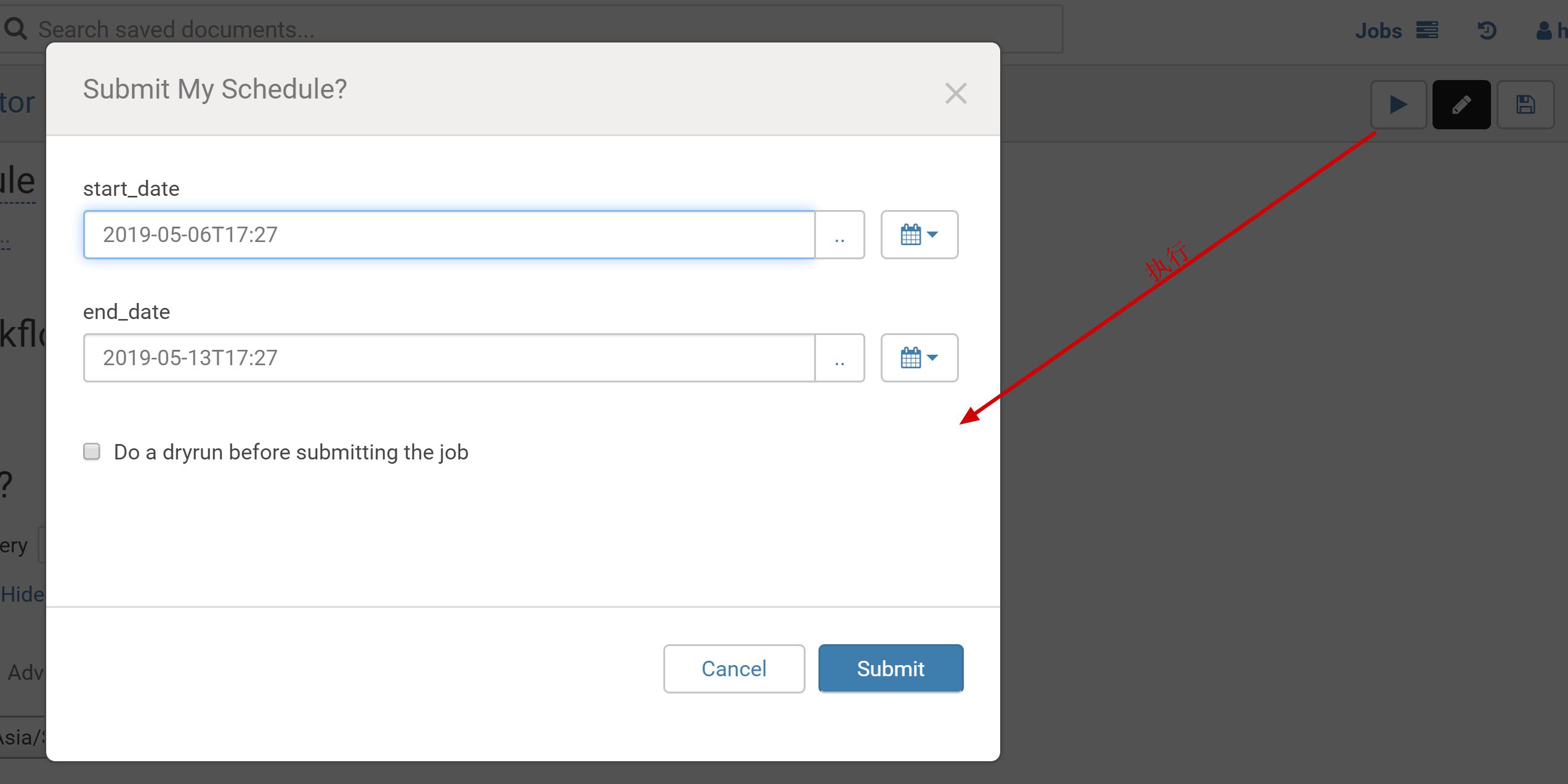

4.1 Click Submit in the upper-right corner to submit the scheduled job.

4.2 View the job's scheduling status on the schedulers monitoring page.

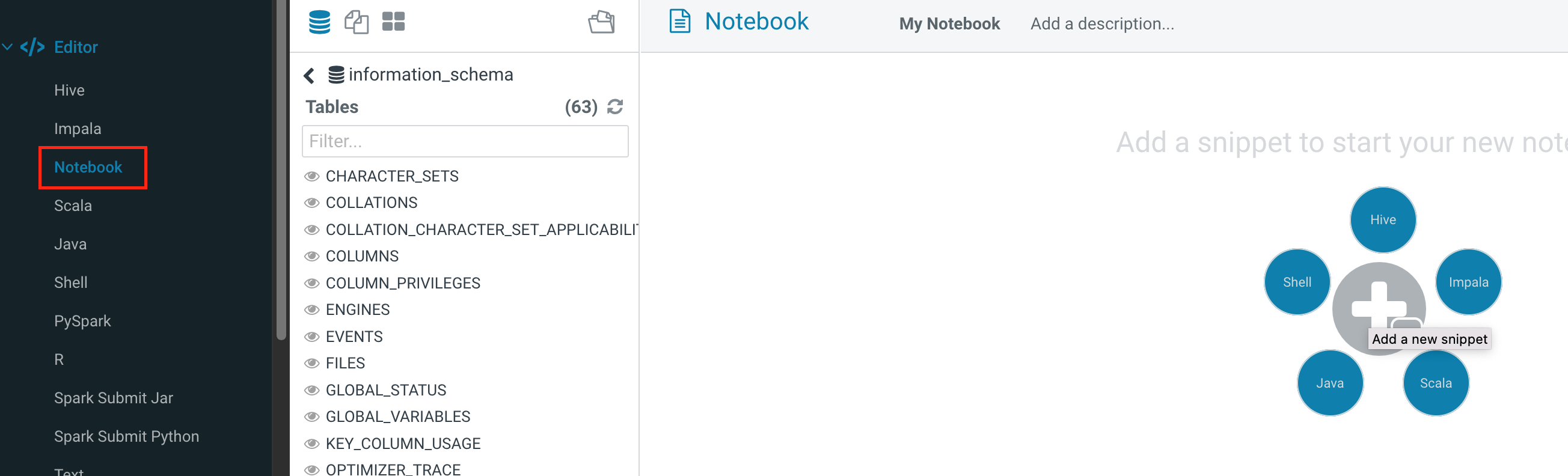

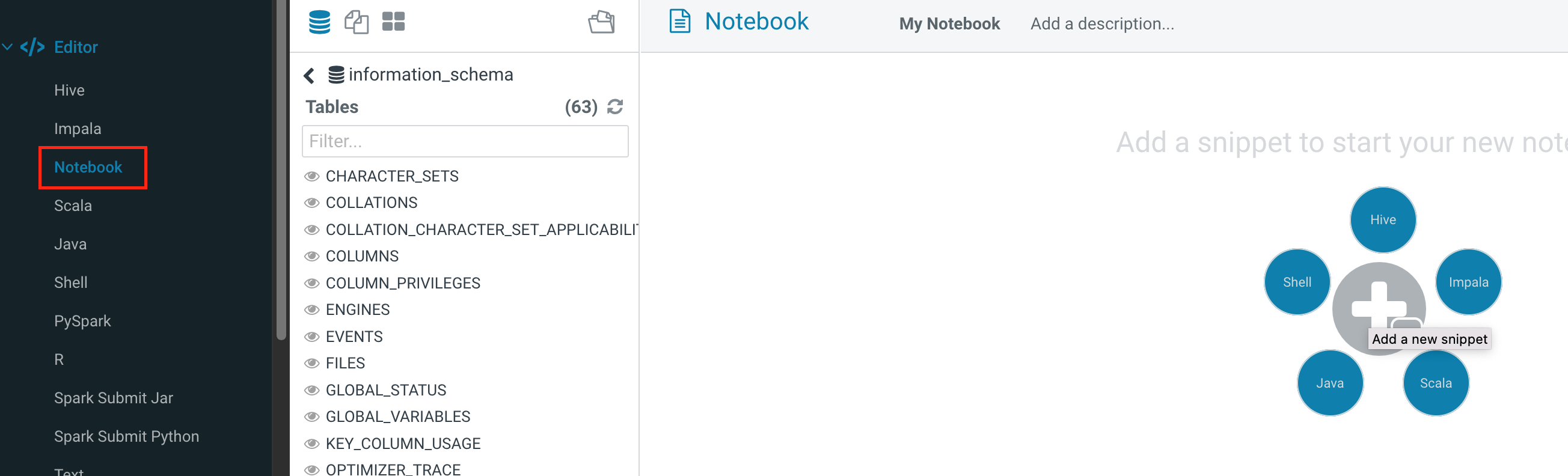

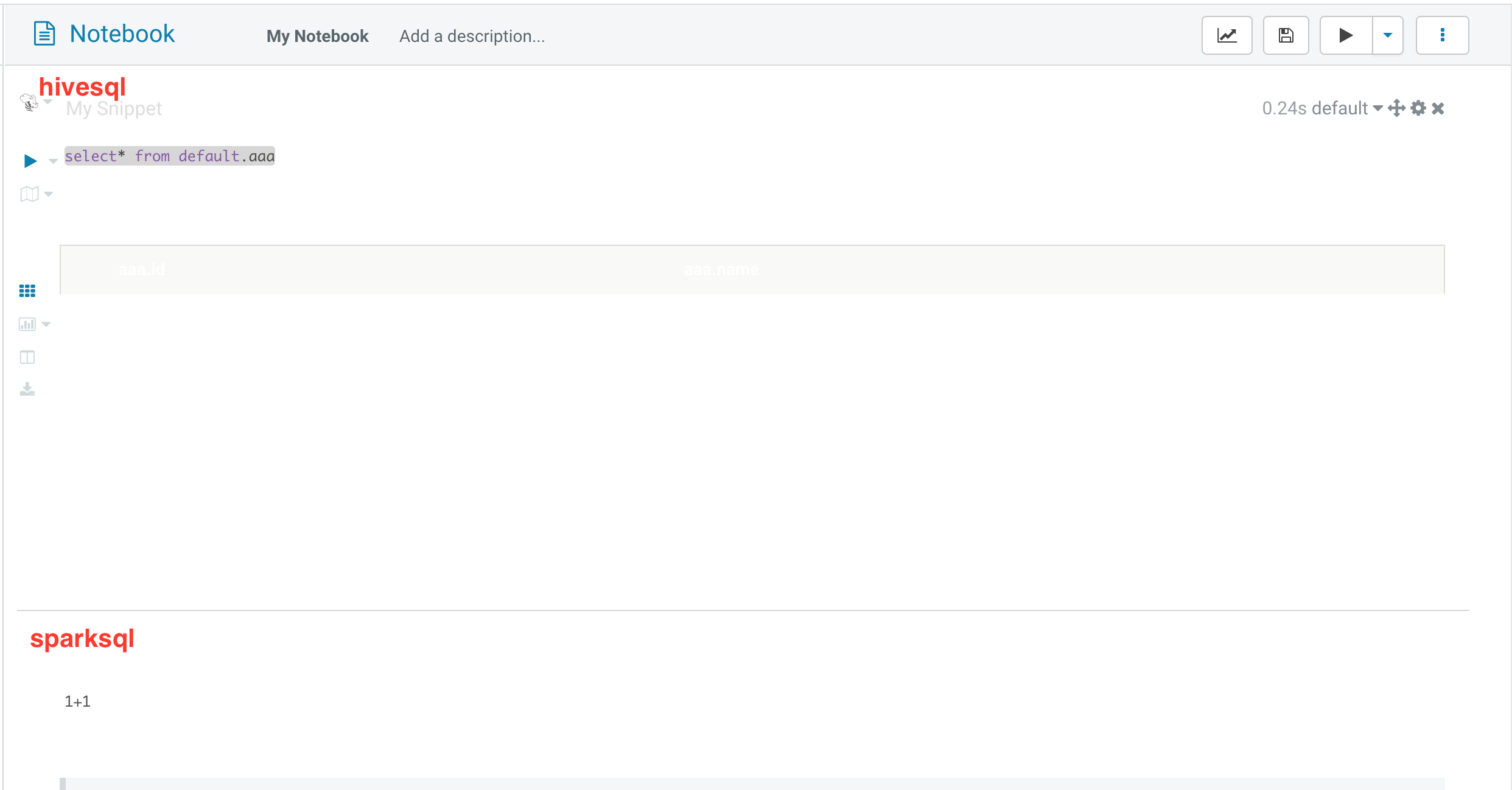

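Comparing and Analyzing Queries with Notebook

Notebook lets you quickly build simple queries and place their results side by side for comparative analysis. It supports five types: hive, impala, spark, java, and shell.

1. Click Editor, then Notebook, and click the "+" sign to add the queries you need.

2. Click Save to save the notebook, or click Execute to run the entire notebook.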
