Using Nginx Ingress to Implement Canary Release

Last updated: 2020-11-11 14:52:29

This document introduces the use cases, usage, and practices of implementing Canary Release by using Nginx Ingress.

Note:

For clusters that implement Canary Release by using Nginx Ingress, Nginx Ingress should be deployed as the Ingress Controller, and a unified traffic entry should be opened for external access. For more information, see Deploying Nginx Ingress on TKE.

Use Cases

Which Canary Release scenarios you can implement with Nginx Ingress depends mainly on the traffic splitting policy. Nginx Ingress currently supports three traffic splitting policies, based on header, cookie, and service weight respectively. With these policies, the following two deployment scenarios can be implemented:

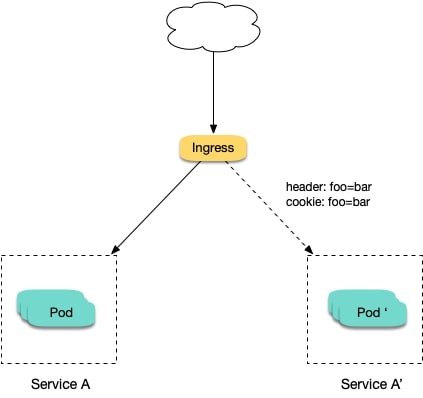

Scenario 1: providing some users with a new version for beta testing

Assume that Service A, which provides Layer-7 service to external users, has been running online; you want to activate a newly developed version, Service A', for some users as a beta test, without replacing the existing Service A; you want to let it run stably for some time before gradually and fully activating the new version and smoothly deactivating the old version.

For this scenario, you can use Nginx Ingress to make deployments under traffic split policies based on Header or Cookie. Business uses Header or Cookie to mark different types of users and configures Ingress to enable requests with the specified Header or Cookie to be forwarded to the new version while other requests are still forwarded to the old version. In this way, the new version is available to some users for beta testing. See the figure below:

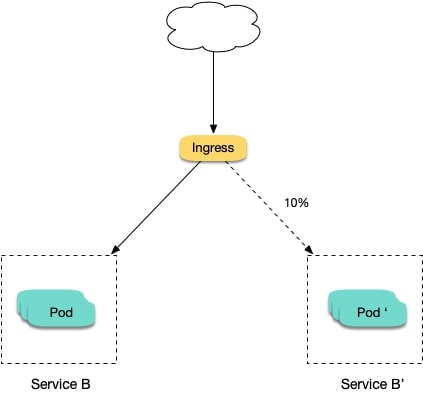

Scenario 2: splitting a certain proportion of traffic to the new version

Assume that Service B, which provides Layer-7 service to external users, has been running online for some time; you have rectified some issues in Service B and to activate a new version, Service B', for some users for beta testing, without replacing the existing Service B; and you want to first split 10% of the traffic to the new version and let it run stably for some time before gradually increasing the proportion of traffic to the new version to ultimately replace and deactivate the old version smoothly. See the figure below:

Annotation Descriptions

You can implement Canary Release by specifying annotations supported by Nginx Ingress on Ingress resources. You need to create two Ingresses for the service: a regular Ingress, and a Canary Ingress, which carries the fixed annotation nginx.ingress.kubernetes.io/canary: "true". The Canary Ingress usually represents the new version of the service. By combining it with a traffic splitting policy annotation, you can implement Canary Release in multiple scenarios. The relevant annotations are described below:

nginx.ingress.kubernetes.io/canary-by-header

Specifies a request header name. If the request contains this header and its value is "always", the request is forwarded to the real server defined by the Canary Ingress. If the value is "never", the request is not forwarded to it, which can be used to roll back to the old version. For any other value, this annotation is ignored.

nginx.ingress.kubernetes.io/canary-by-header-value

This annotation supplements canary-by-header. It lets you specify a custom value for the request header, which is not limited to "always" or "never". When the value of the request header matches the specified custom value, the request is forwarded to the real server defined by the Canary Ingress. For any other value, this annotation is ignored.

nginx.ingress.kubernetes.io/canary-by-header-pattern

This annotation is similar to canary-by-header-value, except that it matches the value of the request header against a regular expression instead of a fixed value. If this annotation and canary-by-header-value are both present, this annotation is ignored.

nginx.ingress.kubernetes.io/canary-by-cookie

Similar to canary-by-header, but this annotation applies to a cookie. Only the values "always" and "never" are supported.

nginx.ingress.kubernetes.io/canary-weight

Indicates the percentage of traffic assigned to the Canary Ingress. The value range is 0 to 100. For example, the value 10 indicates that 10% of the traffic is assigned to the real server of the Canary Ingress.

Note:

- The above rules are evaluated in the following order of priority: canary-by-header > canary-by-cookie > canary-weight.

- When an Ingress is marked as a Canary Ingress, all non-canary annotations other than nginx.ingress.kubernetes.io/load-balance and nginx.ingress.kubernetes.io/upstream-hash-by are ignored.
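The priority order can be illustrated with a small Python model. This is a minimal sketch built only from the rules described in this section, not the controller's actual implementation. CanaryPolicy and routes_to_canary are hypothetical names introduced here, and treating the pattern as an unanchored regular-expression search is an assumption.

```python
import random
import re
from dataclasses import dataclass
from typing import Callable, Mapping, Optional


@dataclass
class CanaryPolicy:
    """Hypothetical holder for the canary annotations of one Canary Ingress."""
    header: Optional[str] = None          # canary-by-header
    header_value: Optional[str] = None    # canary-by-header-value
    header_pattern: Optional[str] = None  # canary-by-header-pattern
    cookie: Optional[str] = None          # canary-by-cookie
    weight: int = 0                       # canary-weight, 0 to 100


def routes_to_canary(
    policy: CanaryPolicy,
    headers: Mapping[str, str],
    cookies: Mapping[str, str],
    rng: Callable[[], float] = random.random,
) -> bool:
    """Return True if this model would send the request to the canary."""
    # HTTP header names are case-insensitive, so compare them in lowercase.
    lowered = {name.lower(): value for name, value in headers.items()}

    # 1. Header rule has the highest priority.
    if policy.header is not None and policy.header.lower() in lowered:
        value = lowered[policy.header.lower()]
        if policy.header_value is not None:
            # A custom value takes precedence over the pattern.
            if value == policy.header_value:
                return True
        elif policy.header_pattern is not None:
            # Assumption: unanchored search semantics.
            if re.search(policy.header_pattern, value):
                return True
        elif value == "always":
            return True
        elif value == "never":
            return False
        # Any other value: the header rule is ignored and evaluation continues.

    # 2. Cookie rule: only "always" and "never" are meaningful.
    if policy.cookie is not None and policy.cookie in cookies:
        value = cookies[policy.cookie]
        if value == "always":
            return True
        if value == "never":
            return False

    # 3. Weight rule: a random draw against the configured percentage.
    return rng() * 100 < policy.weight


policy = CanaryPolicy(header="Region", header_pattern="cd|sz")
print(routes_to_canary(policy, {"Region": "cd"}, {}))  # True
print(routes_to_canary(policy, {"Region": "bj"}, {}))  # False: no match, weight is 0
```

The rng parameter exists only so that the weight rule can be made deterministic when experimenting with the model.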

Sample

Note:

The following sample uses a TKE cluster as an example. From this sample, you can quickly learn how to implement Canary Release by using Nginx Ingress. Please note:

- For a single service, only one Canary Ingress can be defined, so the real server can only support up to two versions.

- A domain name must be configured in the Ingress. Otherwise, the Ingress will not work.

- Even if all traffic is switched to the Canary Ingress, the old version still needs to exist. Otherwise, an error will be reported.

Using YAML to create resources

This document introduces the following two methods for using YAML to deploy workloads and create Services:

- Method 1: on the details page of the TKE or EKS cluster, click Use YAML to Create Resources in the upper right corner and enter the content of the sample YAML file from this document in the editing interface.

- Method 2: save the sample YAML as a file and use kubectl to create resources from that file, for example, kubectl apply -f xx.yaml.

Deploying two versions of a service

- Deploy the first version of the Deployment in the cluster. Here nginx-v1 is used as an example. The YAML sample is as follows:

      apiVersion: apps/v1
      kind: Deployment
      metadata:
        name: nginx-v1
      spec:
        replicas: 1
        selector:
          matchLabels:
            app: nginx
            version: v1
        template:
          metadata:
            labels:
              app: nginx
              version: v1
          spec:
            containers:
            - name: nginx
              image: "openresty/openresty:centos"
              ports:
              - name: http
                protocol: TCP
                containerPort: 80
              volumeMounts:
              # Mount nginx.conf from the ConfigMap defined below.
              - mountPath: /usr/local/openresty/nginx/conf/nginx.conf
                name: config
                subPath: nginx.conf
            volumes:
            - name: config
              configMap:
                name: nginx-v1
      ---
      # nginx.conf that answers every request with the version name.
      apiVersion: v1
      kind: ConfigMap
      metadata:
        labels:
          app: nginx
          version: v1
        name: nginx-v1
      data:
        nginx.conf: |-
          worker_processes 1;
          events {
              accept_mutex on;
              multi_accept on;
              use epoll;
              worker_connections 1024;
          }
          http {
              ignore_invalid_headers off;
              server {
                  listen 80;
                  location / {
                      access_by_lua '
                          local header_str = ngx.say("nginx-v1")
                      ';
                  }
              }
          }
      ---
      apiVersion: v1
      kind: Service
      metadata:
        name: nginx-v1
      spec:
        type: ClusterIP
        ports:
        - port: 80
          protocol: TCP
          name: http
        selector:
          app: nginx
          version: v1

- Deploy the second version of the Deployment. Here nginx-v2 is used as an example. The YAML sample is as follows:

apiVersion: apps/v1

kind: Deployment

metadata:

name: nginx-v2

spec:

replicas: 1

selector:

matchLabels:

app: nginx

version: v2

template:

metadata:

labels:

app: nginx

version: v2

spec:

containers:

- name: nginx

image: "openresty/openresty:centos"

ports:

- name: http

protocol: TCP

containerPort: 80

volumeMounts:

- mountPath: /usr/local/openresty/nginx/conf/nginx.conf

name: config

subPath: nginx.conf

volumes:

- name: config

configMap:

name: nginx-v2

---

apiVersion: v1

kind: ConfigMap

metadata:

labels:

app: nginx

version: v2

name: nginx-v2

data:

nginx.conf: |-

worker_processes 1;

events {

accept_mutex on;

multi_accept on;

use epoll;

worker_connections 1024;

}

http {

ignore_invalid_headers off;

server {

listen 80;

location / {

access_by_lua '

local header_str = ngx.say("nginx-v2")

';

}

}

}

---

apiVersion: v1

kind: Service

metadata:

name: nginx-v2

spec:

type: ClusterIP

ports:

- port: 80

protocol: TCP

name: http

selector:

app: nginx

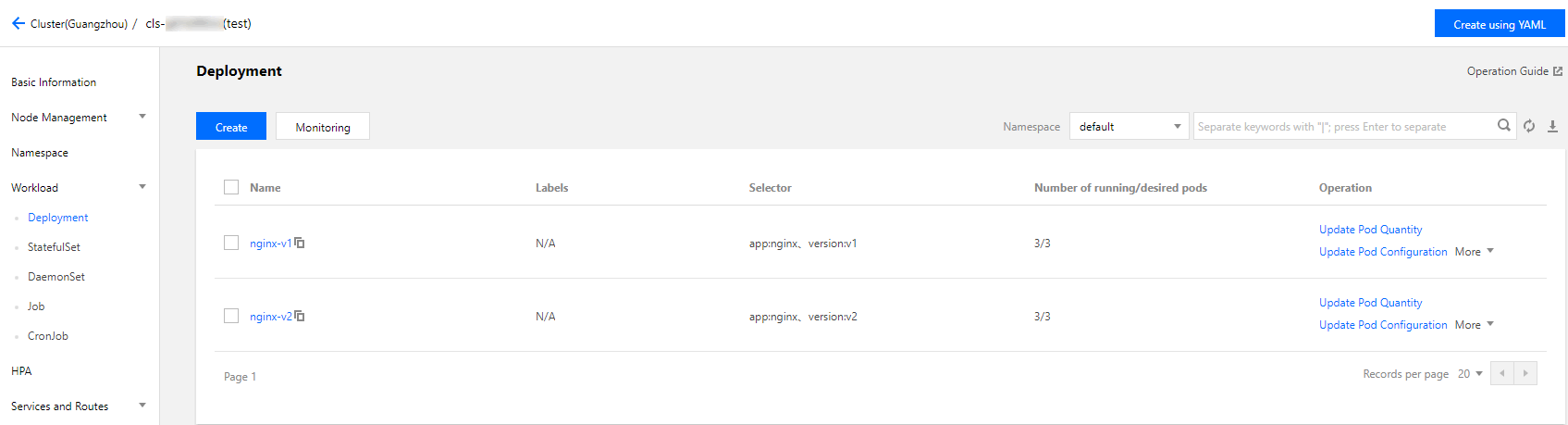

version: v2You can log in to the TKE Console and go to the workload details page of the cluster to view the deployment information, as shown in the figure below:

- Create an Ingress that opens the service to external access and points to the v1 service. The YAML sample is as follows:

      # API version as used in this sample. extensions/v1beta1 is no longer
      # served as of Kubernetes v1.22, where Ingress uses networking.k8s.io/v1.
      apiVersion: extensions/v1beta1
      kind: Ingress
      metadata:
        name: nginx
        annotations:
          kubernetes.io/ingress.class: nginx
      spec:
        rules:
        - host: canary.example.com
          http:
            paths:
            - backend:
                serviceName: nginx-v1
                servicePort: 80
              path: /

- Run the following command to verify access.

      curl -H "Host: canary.example.com" http://EXTERNAL-IP # Replace EXTERNAL-IP with the external IP address exposed by Nginx Ingress.

  The returned result is as follows:

      nginx-v1

Traffic splitting based on the header

Create a Canary Ingress that specifies the v2 service as the real server, and add annotations so that requests whose Region header has the value cd or sz are forwarded to this Canary Ingress. This simulates selecting users in Chengdu and Shenzhen for the beta test of the new version. The YAML sample is as follows:

    apiVersion: extensions/v1beta1
    kind: Ingress
    metadata:
      annotations:
        kubernetes.io/ingress.class: nginx
        # Mark this Ingress as the Canary Ingress.
        nginx.ingress.kubernetes.io/canary: "true"
        # Split on the Region header, matching its value against a regular expression.
        nginx.ingress.kubernetes.io/canary-by-header: "Region"
        nginx.ingress.kubernetes.io/canary-by-header-pattern: "cd|sz"
      name: nginx-canary
    spec:
      rules:
      - host: canary.example.com
        http:
          paths:
          - backend:
              serviceName: nginx-v2
              servicePort: 80
            path: /

Run the following commands to perform an access test.

    $ curl -H "Host: canary.example.com" -H "Region: cd" http://EXTERNAL-IP # Replace EXTERNAL-IP with the external IP address exposed by Nginx Ingress.
    nginx-v2
    $ curl -H "Host: canary.example.com" -H "Region: bj" http://EXTERNAL-IP
    nginx-v1
    $ curl -H "Host: canary.example.com" -H "Region: cd" http://EXTERNAL-IP
    nginx-v2
    $ curl -H "Host: canary.example.com" http://EXTERNAL-IP
    nginx-v1

You can see that the v2 service responds only to requests in which the value of the Region header is cd or sz.
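Because canary-by-header-pattern takes a regular expression, it is worth checking which header values a pattern accepts before relying on it. The following Python sketch tests the sample pattern both ways. Whether the controller applies the pattern as an unanchored search is an assumption that should be verified against your controller version, and the function names are hypothetical.

```python
import re

# Value of canary-by-header-pattern used in the sample Canary Ingress.
PATTERN = "cd|sz"


def matches_unanchored(value: str, pattern: str = PATTERN) -> bool:
    """True if the pattern is found anywhere in the header value."""
    return re.search(pattern, value) is not None


def matches_whole_value(value: str, pattern: str = PATTERN) -> bool:
    """True only if the pattern matches the entire header value."""
    return re.fullmatch(pattern, value) is not None


for region in ("cd", "sz", "bj", "abcd"):
    print(region, matches_unanchored(region), matches_whole_value(region))
```

Under unanchored matching, a value such as abcd would also be routed to the canary. If that is not intended, an explicitly anchored pattern such as ^(cd|sz)$ restricts the match to the exact values.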

Traffic splitting based on cookies

With cookie-based splitting, you cannot set a custom value; only always and never are supported. For example, if you want to select users in Chengdu for the beta test, you can configure the Canary Ingress so that only requests carrying the cookie user_from_cd with the value always are forwarded to it. The YAML sample is as follows:

Note:

If you created a Canary Ingress in the previous steps, delete it before creating the one in this step.

    apiVersion: extensions/v1beta1
    kind: Ingress
    metadata:
      annotations:
        kubernetes.io/ingress.class: nginx
        # Mark this Ingress as the Canary Ingress.
        nginx.ingress.kubernetes.io/canary: "true"
        # Split on the user_from_cd cookie.
        nginx.ingress.kubernetes.io/canary-by-cookie: "user_from_cd"
      name: nginx-canary
    spec:
      rules:
      - host: canary.example.com
        http:
          paths:
          - backend:
              serviceName: nginx-v2
              servicePort: 80
            path: /

Run the following commands to perform an access test.

    $ curl -s -H "Host: canary.example.com" --cookie "user_from_cd=always" http://EXTERNAL-IP # Replace EXTERNAL-IP with the external IP address exposed by Nginx Ingress.
    nginx-v2
    $ curl -s -H "Host: canary.example.com" --cookie "user_from_bj=always" http://EXTERNAL-IP
    nginx-v1
    $ curl -s -H "Host: canary.example.com" http://EXTERNAL-IP
    nginx-v1

You can see that the v2 service responds only to requests in which the value of the cookie user_from_cd is always.
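If you prefer to script these access tests rather than run curl by hand, the same requests can be built with Python's standard library. This is a minimal sketch: build_request and fetch_version are hypothetical helper names, and 203.0.113.10 is a placeholder address that stands in for EXTERNAL-IP.

```python
import urllib.request
from typing import Mapping, Optional


def build_request(
    external_ip: str,
    host: str = "canary.example.com",
    headers: Optional[Mapping[str, str]] = None,
    cookies: Optional[Mapping[str, str]] = None,
) -> urllib.request.Request:
    """Build a GET request equivalent to the curl commands in this document."""
    req = urllib.request.Request(f"http://{external_ip}/")
    # The Ingress rule matches on the Host header, not on the IP address.
    req.add_header("Host", host)
    for name, value in (headers or {}).items():
        req.add_header(name, value)
    if cookies:
        cookie_line = "; ".join(f"{k}={v}" for k, v in cookies.items())
        req.add_header("Cookie", cookie_line)
    return req


def fetch_version(req: urllib.request.Request, timeout: float = 5.0) -> str:
    """Send the request and return the response body, for example nginx-v1."""
    with urllib.request.urlopen(req, timeout=timeout) as resp:
        return resp.read().decode().strip()


# Placeholder address; replace it with the external IP of Nginx Ingress.
canary_req = build_request("203.0.113.10", cookies={"user_from_cd": "always"})
print(canary_req.header_items())
```

Calling fetch_version(canary_req) against a real cluster sends the request and returns the name of the version that answered.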

Traffic splitting based on service weight

To use a Canary Ingress based on service weight, you only need to specify the proportion of traffic to direct to it. For example, to direct 10% of traffic to the v2 version, the YAML sample is as follows:

Note:

If you created a Canary Ingress in the previous steps, delete it before creating the one in this step.

    apiVersion: extensions/v1beta1
    kind: Ingress
    metadata:
      annotations:
        kubernetes.io/ingress.class: nginx
        # Mark this Ingress as the Canary Ingress.
        nginx.ingress.kubernetes.io/canary: "true"
        # Send 10% of the traffic to the canary.
        nginx.ingress.kubernetes.io/canary-weight: "10"
      name: nginx-canary
    spec:
      rules:
      - host: canary.example.com
        http:
          paths:
          - backend:
              serviceName: nginx-v2
              servicePort: 80
            path: /

Run the following command to perform an access test.

    $ for i in {1..10}; do curl -H "Host: canary.example.com" http://EXTERNAL-IP; done;
    nginx-v1
    nginx-v1
    nginx-v1
    nginx-v1
    nginx-v1
    nginx-v1
    nginx-v2
    nginx-v1
    nginx-v1
    nginx-v1

You can see that the v2 service answered one of the ten requests in this run, which is consistent with the 10% service weight setting.
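A sample of ten requests is small, so the number of v2 responses will vary from run to run. The following Python sketch computes how likely each outcome is, under the assumption that each request is routed to the canary independently with probability 0.10. The function name prob_exactly is hypothetical.

```python
from math import comb


def prob_exactly(k: int, n: int, p: float) -> float:
    """Binomial probability that exactly k of n requests reach the canary."""
    return comb(n, k) * p**k * (1 - p) ** (n - k)


n, p = 10, 0.10  # ten requests, canary-weight of 10
for k in range(4):
    print(f"P(v2 answers {k} of {n}) = {prob_exactly(k, n, p):.3f}")
```

Under this assumption, exactly one v2 response in ten requests occurs in roughly 39% of runs, and no v2 response at all in roughly 35% of runs. To confirm a weight setting with more confidence, send a larger number of requests and compare the observed share of v2 responses with the configured weight.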
