Cloud Load Balancer (CLB)

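This page collects frequently asked questions about Cloud Load Balancer (CLB). It also describes the causes of, and solutions for, common problems with CLB instances used by Services and Ingresses.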

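This document is intended for users who:

Are familiar with basic K8S concepts such as Pods, workloads, Services, and Ingresses.

Are familiar with common operations on TKE Serverless clusters in the Tencent Cloud console.

Are familiar with using the kubectl command-line tool to operate on resources in a K8S cluster.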

Note:

There are several ways to operate on resources in a K8S cluster. This document covers two of them: the Tencent Cloud console and the kubectl command-line tool.

Which Ingresses can TKE Serverless create CLB instances for?

TKE Serverless can create a CLB instance for an Ingress that meets the following condition.

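| Requirement on the Ingress resource | Notes |
|---|---|
| `annotations` contains the key-value pair `kubernetes.io/ingress.class: qcloud` | If you do not want TKE Serverless to create a CLB instance for the Ingress (for example, because you want to use Nginx-ingress), do not include this key-value pair in `annotations`. |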
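The table's rule can be checked before you apply a manifest. Below is a minimal sketch that models manifests as plain Python dicts. It builds a skeleton Ingress with or without the `kubernetes.io/ingress.class: qcloud` annotation and tests for it. The helper names are hypothetical. The `networking.k8s.io/v1` apiVersion is an assumption, and older clusters may use a different Ingress API group.

```python
from typing import Any, Dict

# Annotation key and value taken from the table above.
INGRESS_CLASS_KEY = "kubernetes.io/ingress.class"
QCLOUD_CLASS = "qcloud"


def make_ingress(name: str, namespace: str, want_clb: bool) -> Dict[str, Any]:
    """Build a skeleton Ingress manifest (apiVersion is an assumption)."""
    annotations = {INGRESS_CLASS_KEY: QCLOUD_CLASS} if want_clb else {}
    return {
        "apiVersion": "networking.k8s.io/v1",
        "kind": "Ingress",
        "metadata": {"name": name, "namespace": namespace, "annotations": annotations},
        # A real Ingress also needs spec.rules or spec.defaultBackend.
        "spec": {},
    }


def ingress_requests_clb(ingress: Dict[str, Any]) -> bool:
    """True if the Ingress carries kubernetes.io/ingress.class: qcloud."""
    annotations = ingress.get("metadata", {}).get("annotations") or {}
    return annotations.get(INGRESS_CLASS_KEY) == QCLOUD_CLASS


print(ingress_requests_clb(make_ingress("web", "demo", want_clb=True)))   # True
print(ingress_requests_clb(make_ingress("web", "demo", want_clb=False)))  # False
```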

How do I view the CLB instance that TKE Serverless created for an Ingress?

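When TKE Serverless successfully creates a CLB instance for an Ingress, it does two things. It writes the CLB instance's VIP to `status.loadBalancer.ingress` of the Ingress resource. It also writes the following key-value pair to the Ingress's `annotations`: `kubernetes.io/ingress.qcloud-loadbalance-id: <CLB instance ID>`.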
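Because both values are stored on the Ingress object, you can also read them from kubectl's JSON output instead of the console. Below is a minimal sketch that extracts the CLB instance ID and the VIPs from a parsed Ingress object. The sample document is a made-up, trimmed stand-in for `kubectl get ingress web -n demo -o json`. The names `web` and `demo` and the `lb-xxxxxxxx` ID are placeholders.

```python
import json
from typing import Any, Dict, List, Optional, Tuple

# Annotation key that TKE Serverless writes on the Ingress, per the text above.
INGRESS_CLB_ID_KEY = "kubernetes.io/ingress.qcloud-loadbalance-id"


def ingress_clb_info(ingress: Dict[str, Any]) -> Tuple[Optional[str], List[str]]:
    """Return (CLB instance ID, VIPs) recorded on an Ingress object."""
    annotations = ingress.get("metadata", {}).get("annotations") or {}
    entries = ingress.get("status", {}).get("loadBalancer", {}).get("ingress") or []
    vips = [entry["ip"] for entry in entries if "ip" in entry]
    return annotations.get(INGRESS_CLB_ID_KEY), vips


# Made-up, trimmed stand-in for `kubectl get ingress web -n demo -o json`.
sample = json.loads("""
{
  "metadata": {"annotations": {"kubernetes.io/ingress.qcloud-loadbalance-id": "lb-xxxxxxxx"}},
  "status": {"loadBalancer": {"ingress": [{"ip": "203.0.113.10"}]}}
}
""")
print(ingress_clb_info(sample))  # ('lb-xxxxxxxx', ['203.0.113.10'])
```

An empty result, `(None, [])`, means no CLB instance has been recorded on the Ingress yet.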

To view the CLB instance that TKE Serverless created for an Ingress, follow these steps.

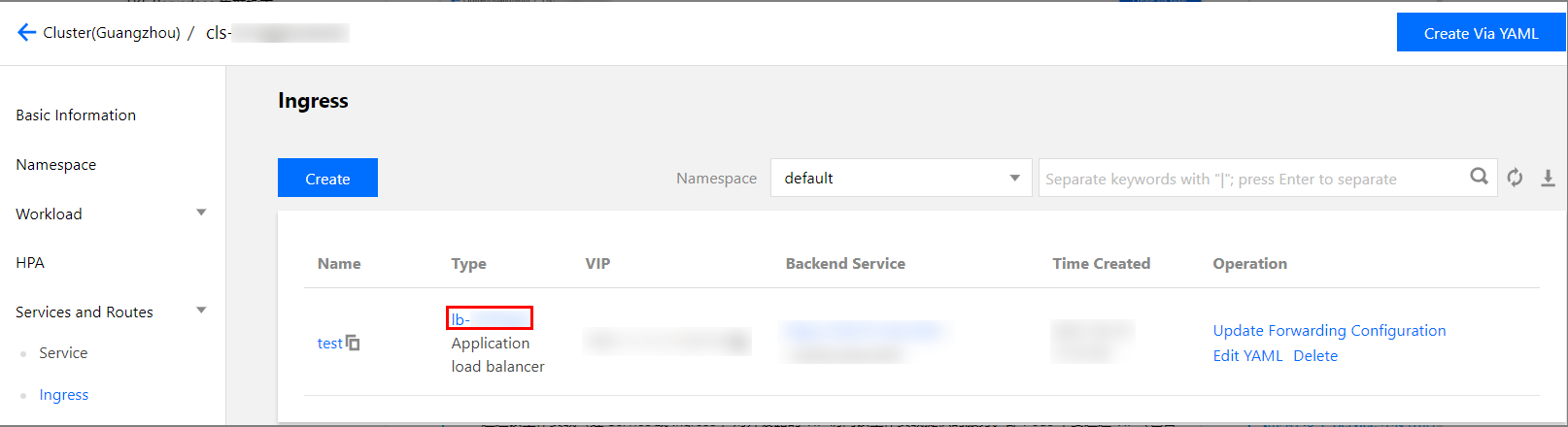

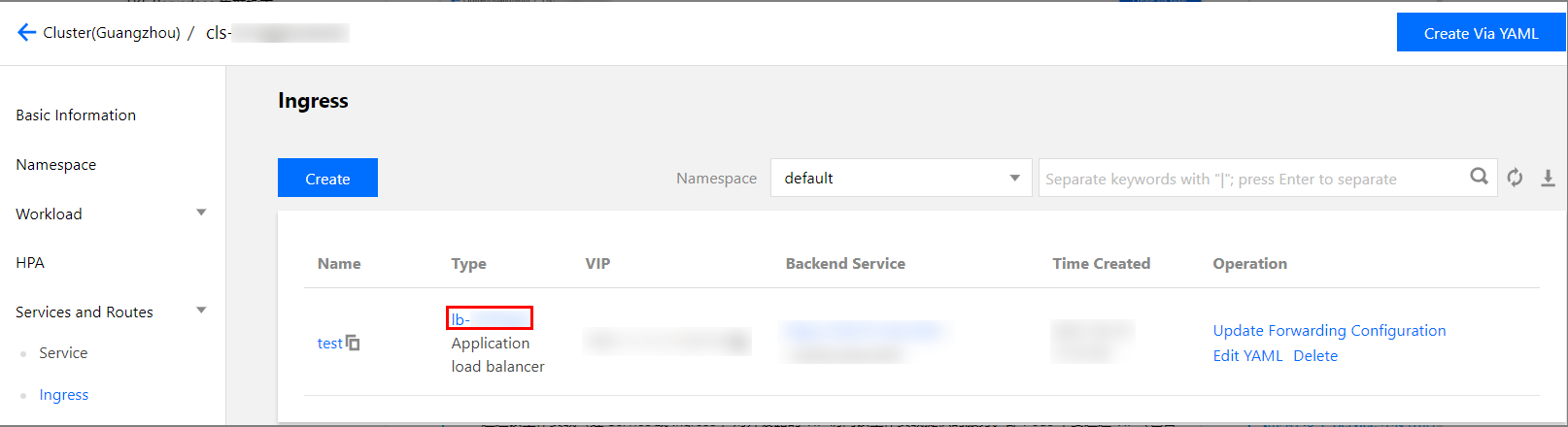

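1. Log in to the TKE console and select Cluster in the left navigation bar.

2. On the cluster list page, click the cluster ID to open the cluster management page.

3. On the cluster management page, select Services and Routes > Ingress on the left.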

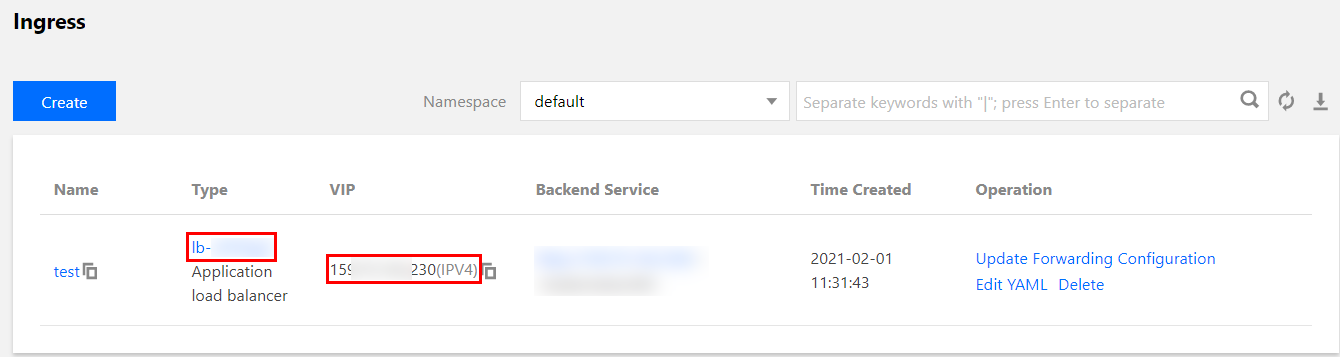

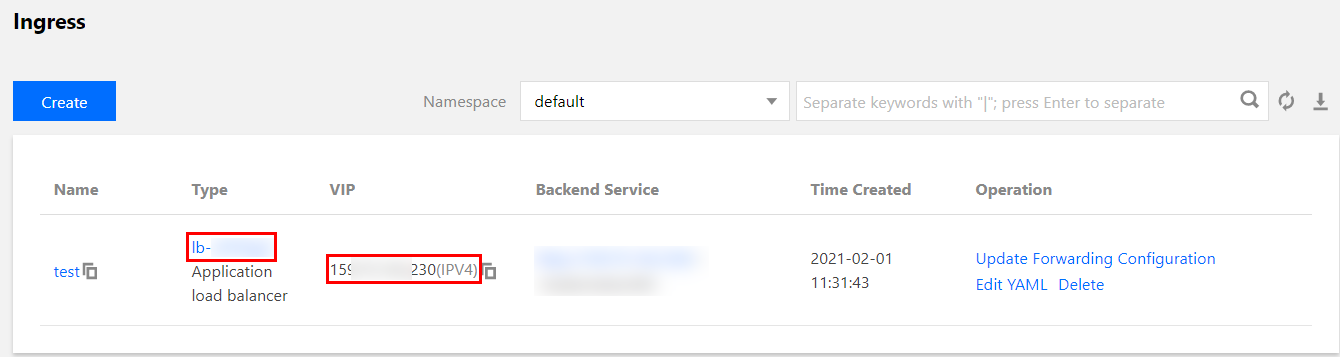

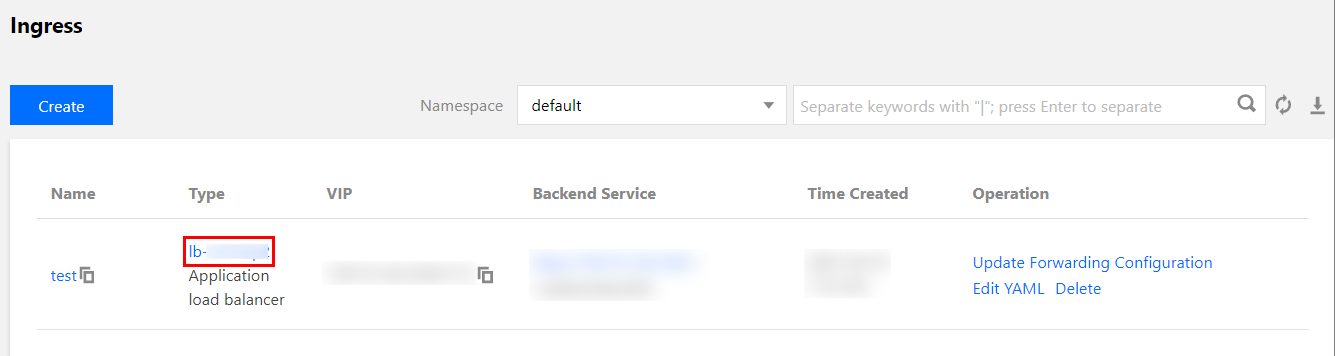

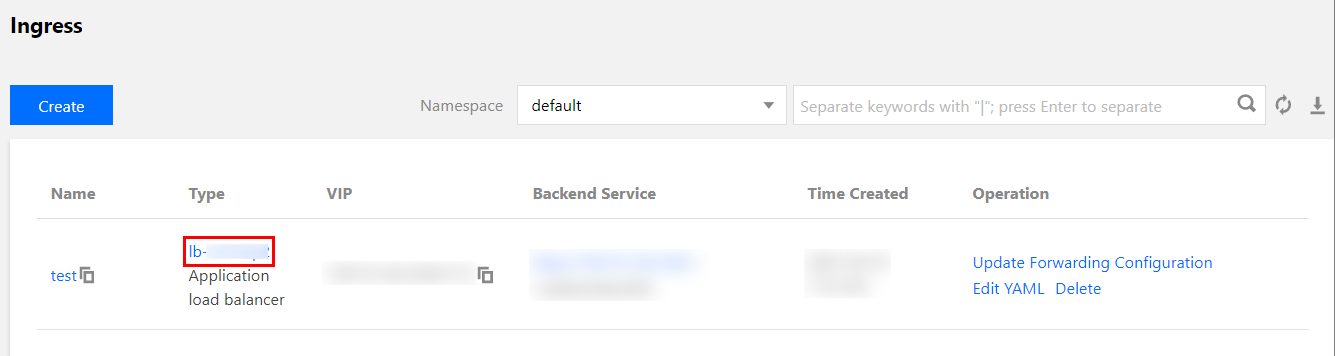

4. On the Ingress page, check the CLB instance ID and its VIP, as shown in the figure.

Which Services can TKE Serverless create CLB instances for?

TKE Serverless can create a CLB instance for a Service that meets the following conditions.

| K8S version | Requirement on the Service resource |
|---|---|
| All K8S versions supported by TKE Serverless | `spec.type` is `LoadBalancer` |
| Modified K8S (the Server GitVersion returned by `kubectl version` has the suffix `eks.` or `tke.`) | `spec.type` is `ClusterIP` and `spec.clusterIP` is not `None` (that is, a non-headless ClusterIP Service) |
| Unmodified K8S (the Server GitVersion returned by `kubectl version` has no `eks.` or `tke.` suffix) | `spec.type` is `ClusterIP` and `spec.clusterIP` is explicitly set to the empty string (`""`) |
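The table combines the Service type with the cluster's K8S flavor, so the rule can be written as a small predicate. Below is a minimal sketch that applies it to a Service manifest as you would submit it. It checks the manifest you write, not the object read back, because the API server fills in `spec.clusterIP` after creation. Two things are assumptions: detecting "modified K8S" by searching the GitVersion string for `eks.` or `tke.`, and the sample GitVersion strings themselves.

```python
from typing import Any, Dict


def is_modified_k8s(git_version: str) -> bool:
    """Modified K8S, per the table: Server GitVersion has an "eks." or "tke." suffix."""
    return "eks." in git_version or "tke." in git_version


def service_gets_clb(service: Dict[str, Any], git_version: str) -> bool:
    """Apply the table's rules to a Service manifest as you would submit it."""
    spec = service.get("spec", {})
    svc_type = spec.get("type", "ClusterIP")  # K8S defaults spec.type to ClusterIP
    if svc_type == "LoadBalancer":
        return True
    if svc_type != "ClusterIP":
        return False
    cluster_ip = spec.get("clusterIP")  # Python None if the field is omitted
    if is_modified_k8s(git_version):
        return cluster_ip != "None"  # any non-headless ClusterIP Service
    return cluster_ip == ""  # unmodified K8S: must be explicitly ""


# The GitVersion strings below are hypothetical examples.
plain = {"spec": {"type": "ClusterIP"}}
print(service_gets_clb(plain, "v1.20.6-eks.1"))  # True
print(service_gets_clb(plain, "v1.18.4"))        # False
```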

Note:

When TKE Serverless successfully creates a CLB instance, it writes the following key-value pair to the Service's annotations:

`service.kubernetes.io/loadbalance-id: <CLB instance ID>`

How do I view the CLB instance that TKE Serverless created for a Service?

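When TKE Serverless successfully creates a CLB instance for a Service, it does two things. It writes the CLB instance's VIP to `status.loadBalancer.ingress` of the Service resource. It also writes the following key-value pair to the Service's annotations: `service.kubernetes.io/loadbalance-id: <CLB instance ID>`.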
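To check many Services at once, you can scan the JSON list that kubectl returns. Below is a minimal sketch that builds one row per Service that carries a CLB instance ID. It uses the `service.kubernetes.io/loadbalance-id` key from the Note on Service annotations above. The sample list is a made-up stand-in for `kubectl get services -A -o json`, and all names and IDs in it are placeholders.

```python
import json
from typing import Any, Dict, List

# Service annotation key given in the Note on Service annotations above.
SERVICE_CLB_ID_KEY = "service.kubernetes.io/loadbalance-id"


def summarize_service_clbs(service_list: Dict[str, Any]) -> List[Dict[str, Any]]:
    """One row per Service that has a CLB instance ID annotation."""
    rows = []
    for svc in service_list.get("items", []):
        meta = svc.get("metadata", {})
        lb_id = (meta.get("annotations") or {}).get(SERVICE_CLB_ID_KEY)
        if lb_id is None:
            continue  # no CLB recorded for this Service
        entries = svc.get("status", {}).get("loadBalancer", {}).get("ingress") or []
        rows.append({
            "namespace": meta.get("namespace"),
            "name": meta.get("name"),
            "clb_id": lb_id,
            "vips": [e["ip"] for e in entries if "ip" in e],
        })
    return rows


# Made-up stand-in for `kubectl get services -A -o json`.
sample = json.loads("""
{"items": [
  {"metadata": {"namespace": "demo", "name": "web",
                "annotations": {"service.kubernetes.io/loadbalance-id": "lb-xxxxxxxx"}},
   "status": {"loadBalancer": {"ingress": [{"ip": "10.0.0.8"}]}}},
  {"metadata": {"namespace": "demo", "name": "internal-only"}, "status": {}}
]}
""")
for row in summarize_service_clbs(sample):
    print(row["namespace"], row["name"], row["clb_id"], row["vips"])
# demo web lb-xxxxxxxx ['10.0.0.8']
```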

To view the CLB instance that TKE Serverless created for a Service, follow these steps.

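1. Log in to the TKE console and select Cluster in the left navigation bar.

2. On the cluster list page, click the cluster ID to open the cluster management page.

3. On the cluster management page, select Services and Routes > Service on the left.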

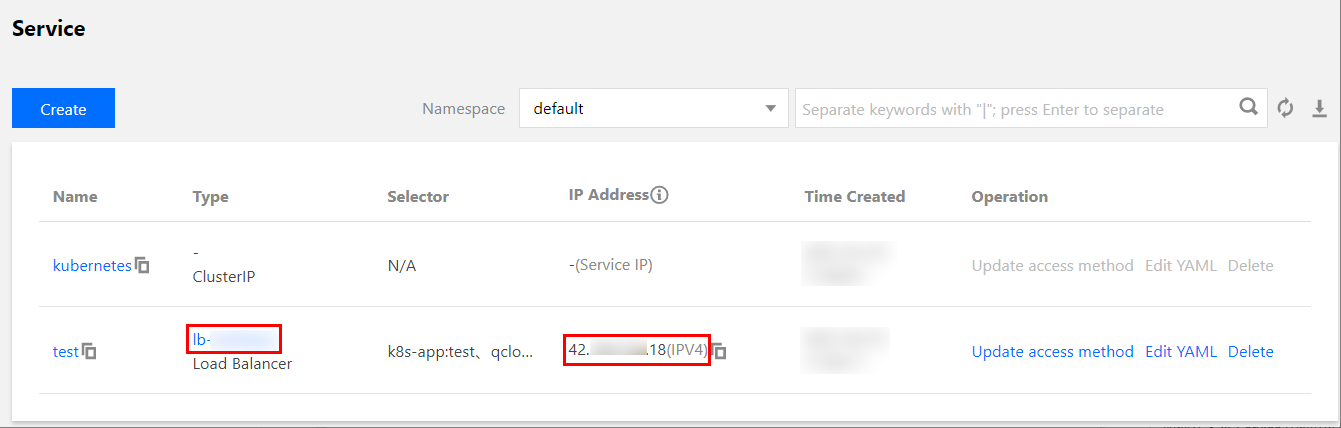

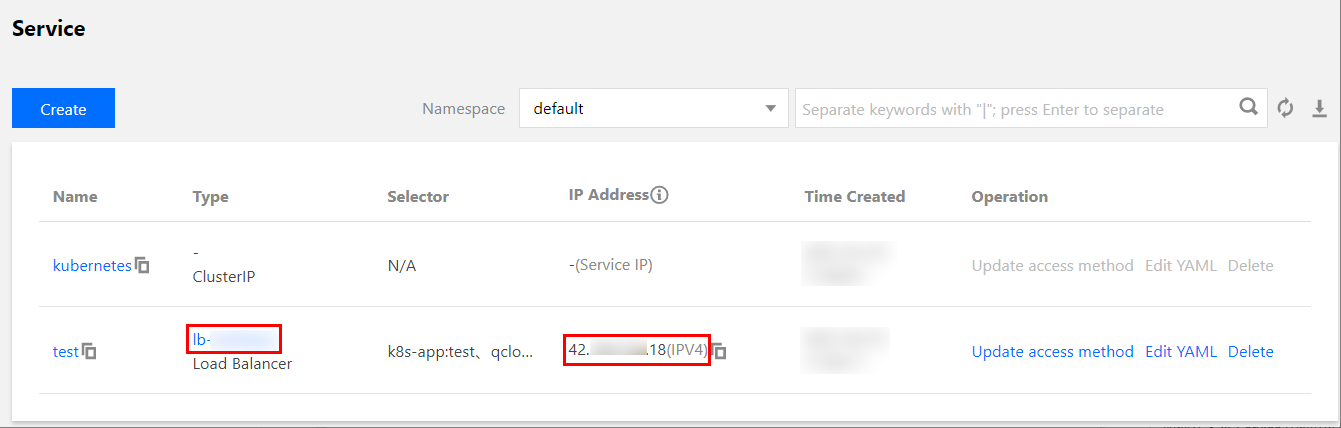

4. On the Service page, check the CLB instance ID and its VIP, as shown in the figure.

Why is a Service's ClusterIP invalid (not reachable), or why does the Service have no ClusterIP?

For a Service whose `spec.type` is `LoadBalancer`, TKE Serverless currently does one of two things by default: it assigns no ClusterIP, or it assigns a ClusterIP that does not work. If you also want to reach the Service through a ClusterIP, add the following key-value pair to its annotations. This tells TKE Serverless to implement the ClusterIP with a private-network CLB.

`service.kubernetes.io/qcloud-clusterip-loadbalancer-subnetid: <Service CIDR subnet ID>`

The Service CIDR subnet ID is specified when the cluster is created and takes the form `subnet-********`. You can find this subnet ID on the CLB Basic Information page.

Note:

Only TKE Serverless clusters that run modified K8S (the Server GitVersion returned by `kubectl version` has the suffix `eks.` or `tke.`) support this feature. Some early TKE Serverless clusters run unmodified K8S (no `eks.` or `tke.` suffix in the Server GitVersion). To use this feature on those clusters, you must first upgrade K8S.
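Below is a minimal sketch of a LoadBalancer Service manifest that carries the ClusterIP subnet annotation described in this answer. The helper name, the sample names, the port, and `subnet-xxxxxxxx` are hypothetical. The subnet check only confirms the `subnet-` prefix shown in the text. It cannot confirm that the ID is actually your cluster's Service CIDR subnet.

```python
import json
from typing import Any, Dict

# Annotation key from the text above; the helper itself is hypothetical.
CLUSTERIP_SUBNET_KEY = "service.kubernetes.io/qcloud-clusterip-loadbalancer-subnetid"


def lb_service_with_clusterip(name: str, namespace: str, port: int,
                              selector: Dict[str, str], subnet_id: str) -> Dict[str, Any]:
    """LoadBalancer Service that also asks for a CLB-backed ClusterIP."""
    # Loose format check only: the FAQ shows IDs as subnet-********.
    if not subnet_id.startswith("subnet-") or len(subnet_id) == len("subnet-"):
        raise ValueError(f"expected a subnet ID like subnet-********, got {subnet_id!r}")
    return {
        "apiVersion": "v1",
        "kind": "Service",
        "metadata": {
            "name": name,
            "namespace": namespace,
            "annotations": {CLUSTERIP_SUBNET_KEY: subnet_id},
        },
        "spec": {
            "type": "LoadBalancer",
            "selector": selector,
            "ports": [{"protocol": "TCP", "port": port, "targetPort": port}],
        },
    }


svc = lb_service_with_clusterip("web", "demo", 80, {"app": "web"}, "subnet-xxxxxxxx")
print(json.dumps(svc, indent=2))
```

kubectl accepts JSON manifests, so the printed output can be piped to `kubectl apply -f -`.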

How do I specify the CLB instance type (public network or private network)?

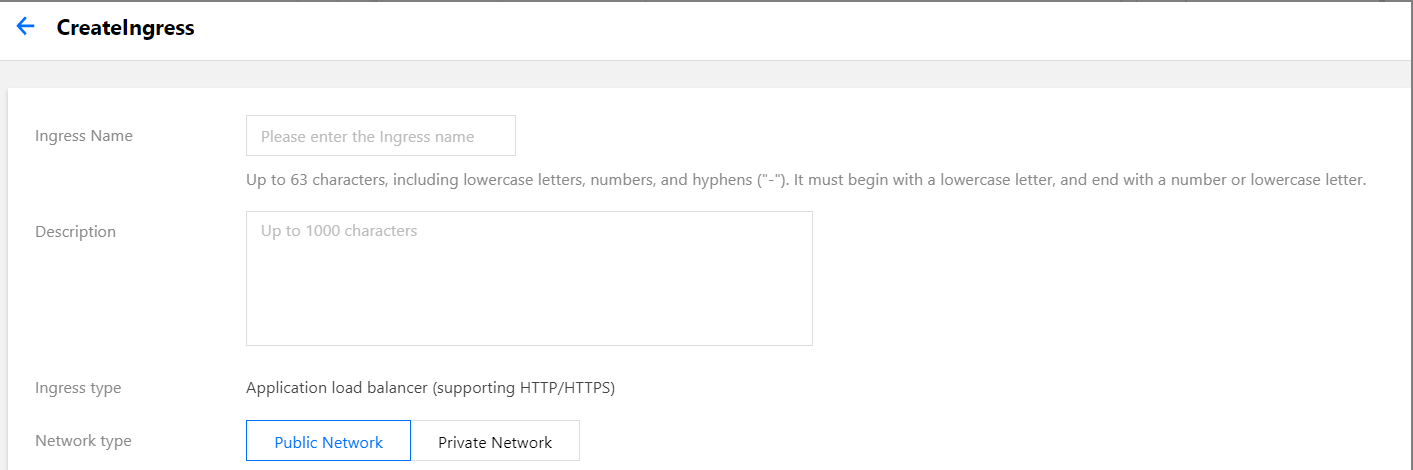

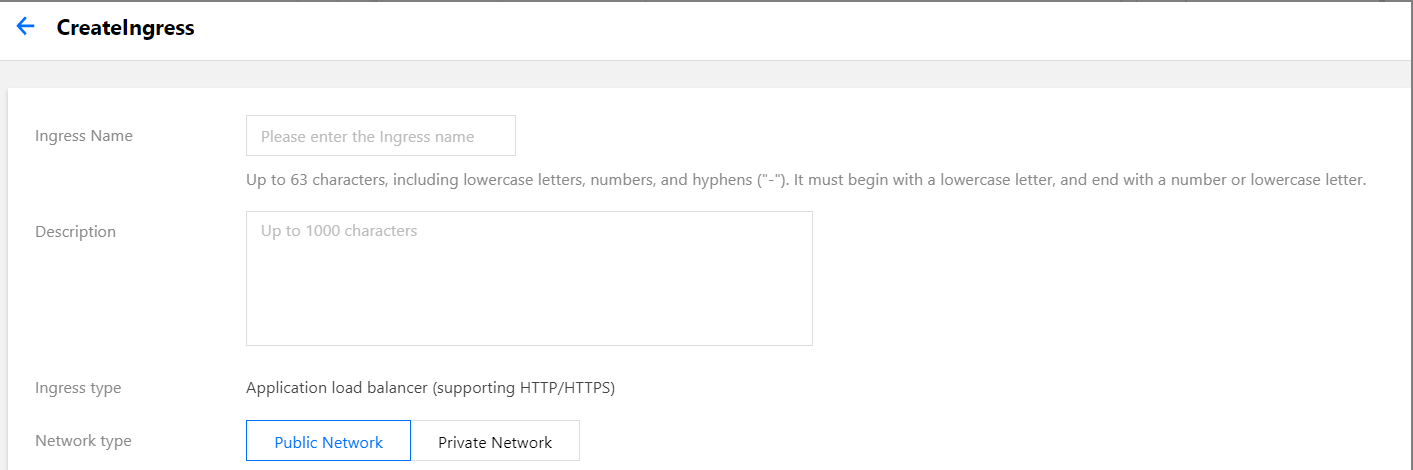

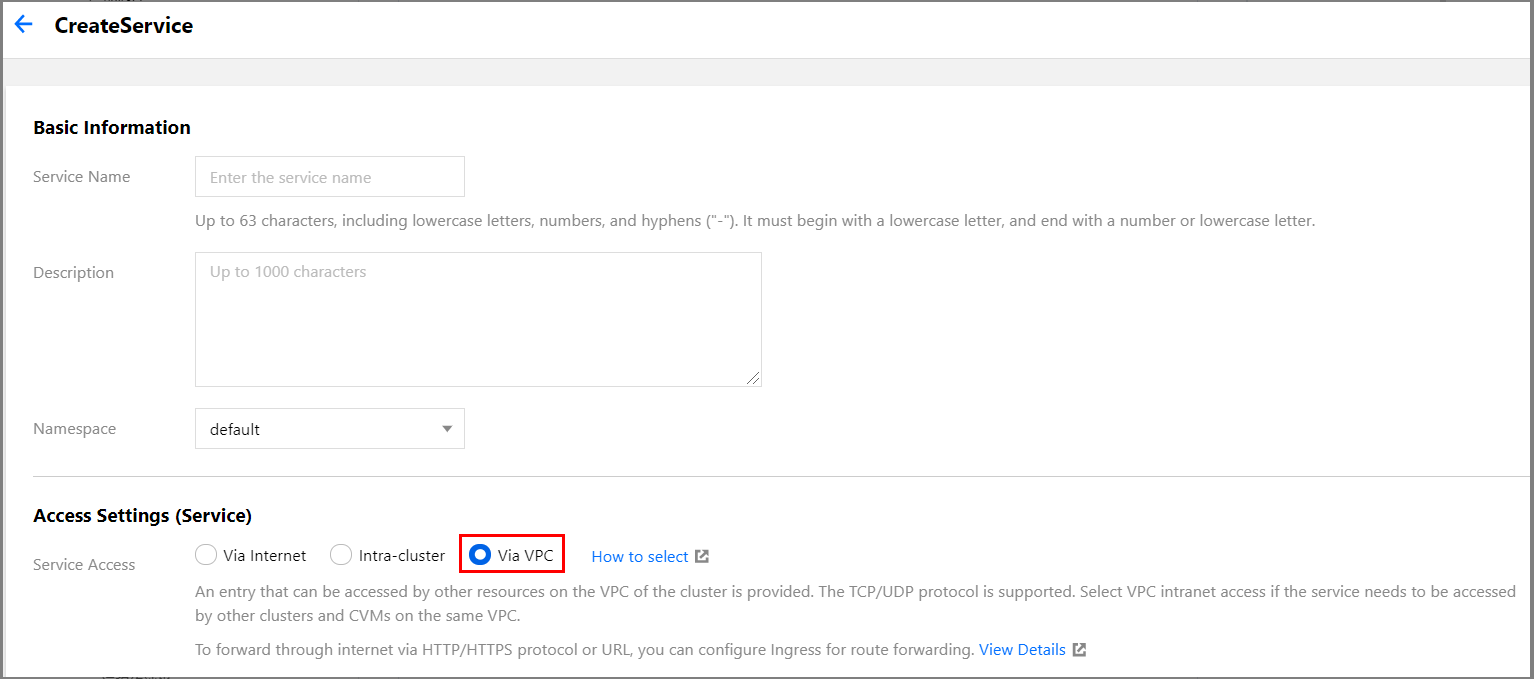

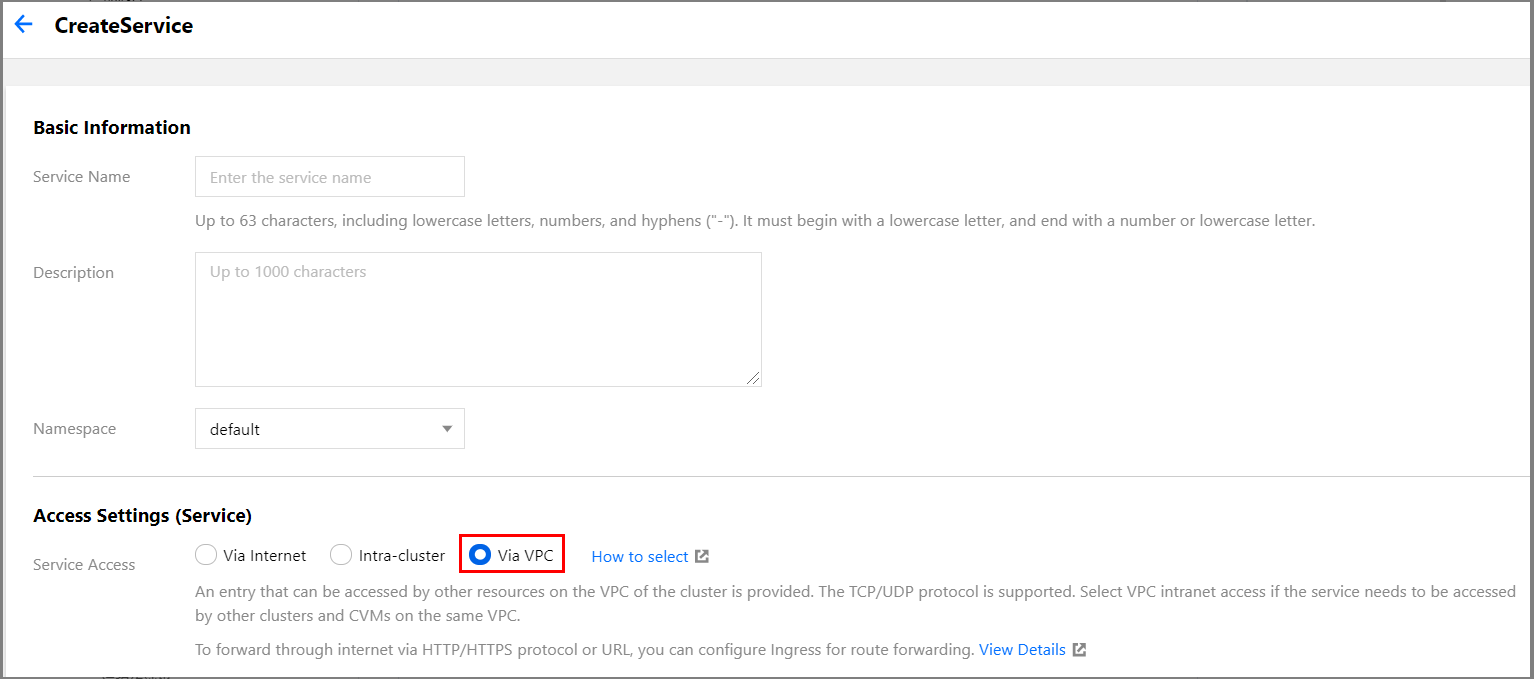

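You can specify the CLB instance type in the TKE console or with the kubectl command-line tool.

In the console, for an Ingress, select "Public network" or "Private network" under "Network type".

For a Service, the type is controlled by "Service access method". "VPC private network access" corresponds to a private-network CLB instance.

By default, the CLB instances that are created are of the "public network" type.

To create a "private network" CLB instance, add the corresponding annotation to the Service or Ingress.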

| Resource type | Key-value pair to add to `annotations` |
|---|---|
| Service | `service.kubernetes.io/qcloud-loadbalancer-internal-subnetid: <subnet ID>` |
| Ingress | `kubernetes.io/ingress.subnetId: <subnet ID>` |

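Note:

The subnet ID takes the form `subnet-********`. The subnet must be in the VPC that was specified as the "cluster network" when the cluster was created. You can look up this VPC under the cluster's "Basic Information" in the Tencent Cloud console.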
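The annotation key depends on the resource kind, so a small lookup table helps avoid mixing up the two keys. Below is a minimal sketch that returns a copy of a Service or Ingress manifest with the private-network annotation from the table above added. The function name and sample values are hypothetical. The sketch does not check that the subnet is in the cluster's VPC, because that requires querying Tencent Cloud.

```python
import copy
from typing import Any, Dict

# Annotation keys from the table above, keyed by resource kind.
INTERNAL_SUBNET_KEYS = {
    "Service": "service.kubernetes.io/qcloud-loadbalancer-internal-subnetid",
    "Ingress": "kubernetes.io/ingress.subnetId",
}


def with_private_clb(manifest: Dict[str, Any], subnet_id: str) -> Dict[str, Any]:
    """Return a copy of a Service/Ingress manifest that requests a private-network CLB."""
    kind = manifest.get("kind")
    if kind not in INTERNAL_SUBNET_KEYS:
        raise ValueError(f"unsupported kind: {kind!r}")
    if not subnet_id.startswith("subnet-"):
        raise ValueError(f"expected a subnet ID like subnet-********, got {subnet_id!r}")
    updated = copy.deepcopy(manifest)  # leave the caller's dict untouched
    meta = updated.setdefault("metadata", {})
    if meta.get("annotations") is None:
        meta["annotations"] = {}
    meta["annotations"][INTERNAL_SUBNET_KEYS[kind]] = subnet_id
    return updated


ing = with_private_clb({"kind": "Ingress", "metadata": {"name": "web"}}, "subnet-xxxxxxxx")
print(ing["metadata"]["annotations"])  # {'kubernetes.io/ingress.subnetId': 'subnet-xxxxxxxx'}
```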

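How do I specify an existing CLB instance?

You can specify an existing CLB instance in the TKE console or with the kubectl command-line tool.

In the console, select "Use existing CLB instance" when you create a Service or Ingress. For a Service, you can also switch to "Use existing CLB instance" after the Service is created, through "Update access method".

With kubectl, add the corresponding annotation to the Service or Ingress when you create the Service or Ingress, or when you modify the Service.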

| Resource type | Key-value pair to add to `annotations` |
|---|---|
| Service | `service.kubernetes.io/tke-existed-lbid: <CLB instance ID>` |
| Ingress | `kubernetes.io/ingress.existLbId: <CLB instance ID>` |

Note:

The "existing CLB instance" cannot be a CLB instance that TKE Serverless created for a Service or Ingress. In addition, TKE Serverless does not support multiple Services or Ingresses sharing the same existing CLB instance.
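Because one existing CLB instance should not be shared across resources, it can help to scan your manifests before applying them. Below is a minimal sketch that reads the existing-CLB annotation for each kind, using the keys in the table above. It then reports every CLB instance ID that more than one Service or Ingress references. The helper names and sample data are hypothetical.

```python
from collections import defaultdict
from typing import Any, Dict, List, Optional

# Annotation keys from the table above, keyed by resource kind.
EXISTING_LB_KEYS = {
    "Service": "service.kubernetes.io/tke-existed-lbid",
    "Ingress": "kubernetes.io/ingress.existLbId",
}


def existing_lb_id(manifest: Dict[str, Any]) -> Optional[str]:
    """CLB instance ID requested via the kind-specific annotation, if any."""
    key = EXISTING_LB_KEYS.get(manifest.get("kind", ""))
    annotations = manifest.get("metadata", {}).get("annotations") or {}
    return annotations.get(key) if key else None


def reused_lb_ids(manifests: List[Dict[str, Any]]) -> Dict[str, List[str]]:
    """Map each existing CLB ID referenced by more than one manifest to those manifests."""
    users: Dict[str, List[str]] = defaultdict(list)
    for m in manifests:
        lb_id = existing_lb_id(m)
        if lb_id:
            meta = m.get("metadata", {})
            users[lb_id].append(f"{m['kind']}/{meta.get('namespace', 'default')}/{meta.get('name')}")
    return {lb_id: refs for lb_id, refs in users.items() if len(refs) > 1}


manifests = [
    {"kind": "Service", "metadata": {"namespace": "demo", "name": "a",
     "annotations": {"service.kubernetes.io/tke-existed-lbid": "lb-aaaaaaaa"}}},
    {"kind": "Ingress", "metadata": {"namespace": "demo", "name": "b",
     "annotations": {"kubernetes.io/ingress.existLbId": "lb-aaaaaaaa"}}},
    {"kind": "Service", "metadata": {"namespace": "demo", "name": "c",
     "annotations": {"service.kubernetes.io/tke-existed-lbid": "lb-bbbbbbbb"}}},
]
print(reused_lb_ids(manifests))  # {'lb-aaaaaaaa': ['Service/demo/a', 'Ingress/demo/b']}
```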

How do I view the access logs of a CLB instance?

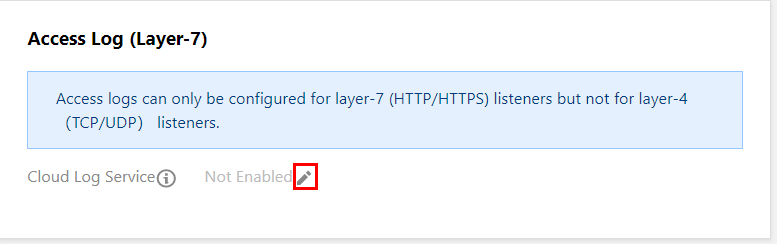

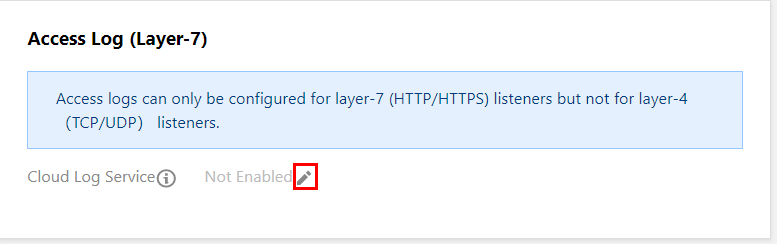

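Access logs can be configured only on layer-7 CLB instances. They are not enabled by default on the layer-7 CLB instances that TKE Serverless creates for Ingresses. To enable access logs for a CLB instance, go to the instance's details page and follow these steps.

1. Log in to the TKE console and select Cluster in the left navigation bar.

2. On the cluster list page, click the cluster ID to open the cluster management page.

3. On the cluster management page, select Services and Routes > Ingress on the left.

4. On the Ingress page, click the CLB instance ID to open the CLB Basic Information page, as shown in the figure.

5. On the CLB Basic Information page, under "Access Log (Layer 7)", …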

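Why can't TKE Serverless create a CLB instance for my Ingress or Service?

See the answers to "Which Ingresses can TKE Serverless create CLB instances for?" and "Which Services can TKE Serverless create CLB instances for?", and check whether the resource meets the corresponding conditions. If it does and the CLB instance is still not created, check the resource's events.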

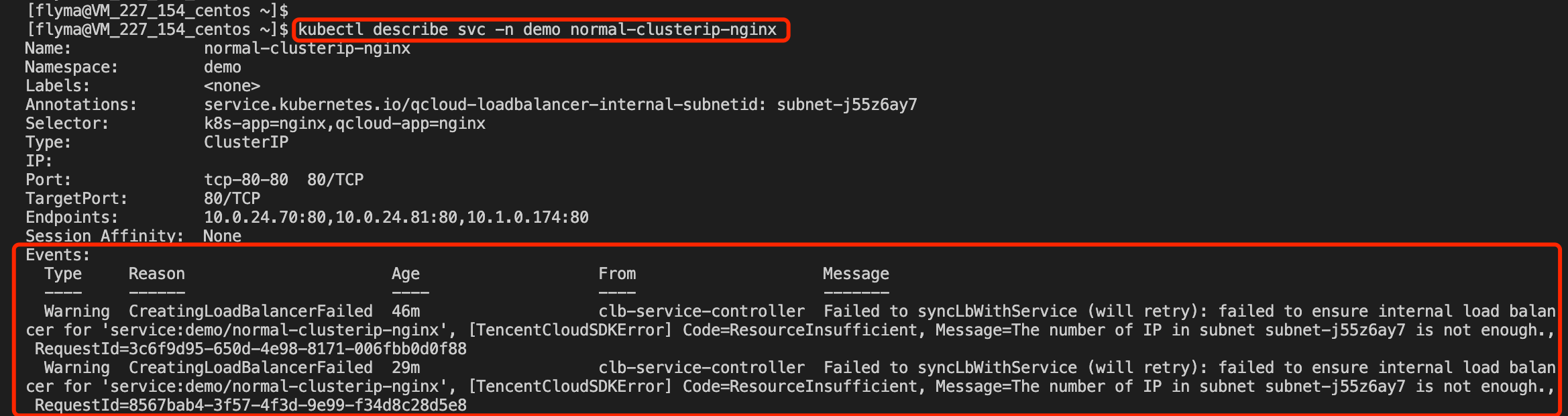

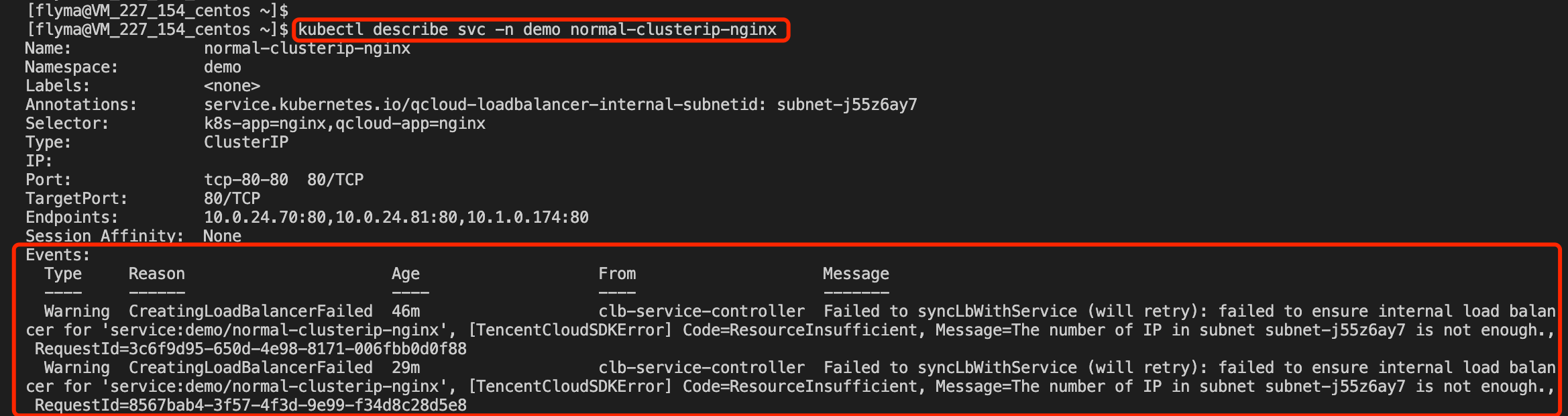

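Use the `kubectl describe` command to view the events related to the resource.

TKE Serverless usually emits related events of type Warning. In the example shown in the figure, the events show that creating the CLB instance failed because no IP resources were available in the subnet.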
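When a namespace has many events, it helps to keep only the Warning events for the resource you are debugging. Below is a minimal sketch that filters a parsed event list by `type`, `involvedObject.kind`, and `involvedObject.name`. The sample is a made-up stand-in for `kubectl get events -n demo -o json`. Its `reason` and `message` values are placeholders, not real TKE Serverless output.

```python
import json
from typing import Any, Dict, List


def warning_events(event_list: Dict[str, Any], kind: str, name: str) -> List[str]:
    """Return "reason: message" for Warning events about the given object."""
    found = []
    for ev in event_list.get("items", []):
        obj = ev.get("involvedObject", {})
        if ev.get("type") == "Warning" and obj.get("kind") == kind and obj.get("name") == name:
            found.append(f"{ev.get('reason')}: {ev.get('message')}")
    return found


# Stand-in for `kubectl get events -n demo -o json`; reason/message are placeholders.
sample = json.loads("""
{"items": [
  {"type": "Warning", "reason": "ExampleReason", "message": "placeholder",
   "involvedObject": {"kind": "Service", "name": "web"}},
  {"type": "Normal", "reason": "ExampleNormal", "message": "placeholder",
   "involvedObject": {"kind": "Service", "name": "web"}}
]}
""")
print(warning_events(sample, "Service", "web"))  # ['ExampleReason: placeholder']
```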

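How do I use the same CLB for multiple Services?

By default, a TKE Serverless cluster does not allow multiple Services to share the same CLB instance. If you want a Service to reuse a CLB that another Service already occupies, add the following annotation and set its value to "true":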

`service.kubernetes.io/qcloud-share-existed-lb: "true"`. For details on this annotation, see the Annotation description.

Why does access to a CLB VIP fail?

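Work through the following checks.

Check the CLB instance type

1. On the Service or Ingress page, click the CLB instance ID to open the CLB Basic Information page, as shown in the figure.

2. On the CLB Basic Information page, check the "Instance type" of the CLB instance.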

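Check whether the environment you access the CLB VIP from is suitable

If the CLB instance type is private network, its VIP is reachable only from within the VPC that the instance belongs to.

Pod IPs in a TKE Serverless cluster are ENI IPs in the VPC. As a result, from inside a Pod you can reach the VIP of the CLB instance of any Service or Ingress in the cluster.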

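Note:

Load balancer systems commonly have a loopback problem (see, for example, the Azure Load Balancer troubleshooting guide). A workload's Pods should not reach the service that the workload provides through the VIP it exposes externally (via a Service or Ingress). In other words, a Pod should not access its own service through a VIP, whether that VIP is of the private-network or public-network type. Doing so may increase latency. If the rule for the VIP has only one RS/Pod, the service may become unreachable.

If the CLB instance type is public network, its VIP is reachable from any environment that has public network access.

To access a public VIP from within the cluster, make sure the cluster has public network access, through a NAT Gateway or another method.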

Check that the RSs under the CLB include the expected Pod IP + port pairs (and only those)

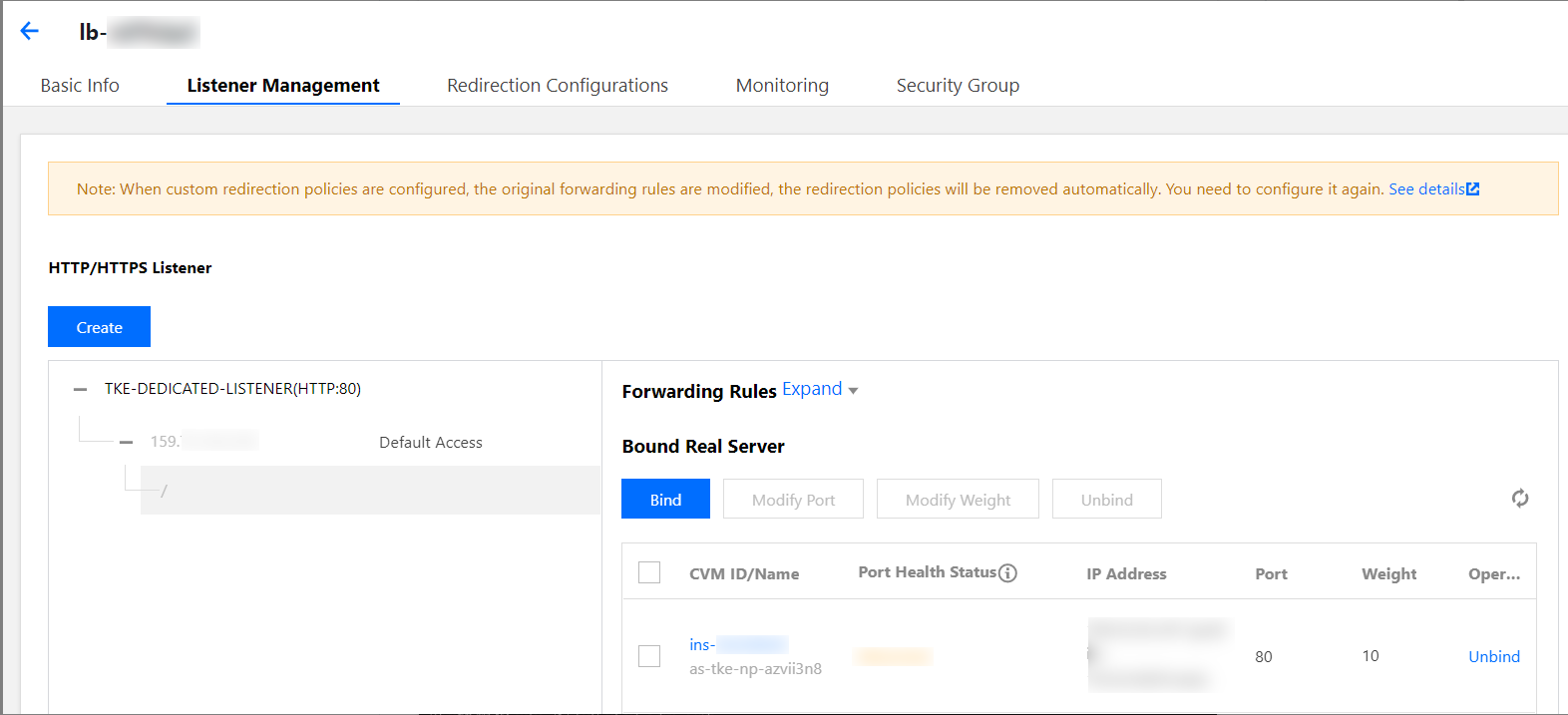

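On the CLB management page, open the listener management page. Check the forwarding rules (for layer-7 protocols) or the bound backend services (for layer-4 protocols). Each IP address there should be a Pod IP. Below is an example of a CLB that TKE Serverless created for an Ingress.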

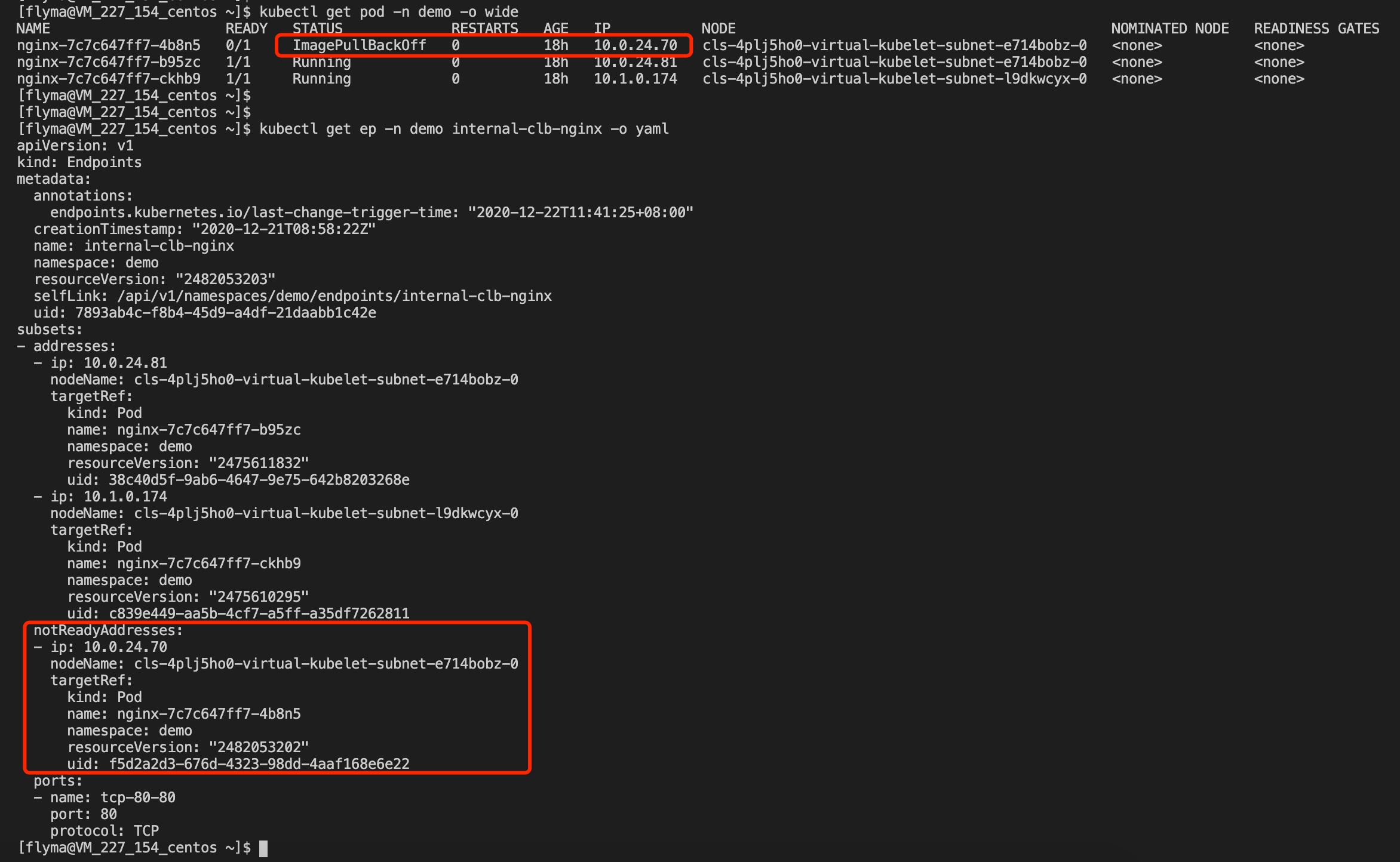
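Comparing the two lists by eye is error-prone when many Pods are involved. Below is a minimal sketch of a set comparison between the (IP, port) pairs bound on the CLB and the (IP, port) pairs you expect from the Pods. The sample values are hypothetical: in practice you would copy the backends from the listener page and the expected pairs from the Pods behind the Service.

```python
from typing import Iterable, List, Tuple

Backend = Tuple[str, int]  # (IP, port)


def diff_backends(clb_backends: Iterable[Backend],
                  expected: Iterable[Backend]) -> Tuple[List[Backend], List[Backend]]:
    """Return (missing, unexpected): expected Pod IP+ports not bound, and extra RSs."""
    actual, want = set(clb_backends), set(expected)
    return sorted(want - actual), sorted(actual - want)


# Hypothetical values: backends copied from the listener page, expected Pod IP+ports
# taken from the ready Pods behind the Service.
clb = [("10.0.0.11", 80), ("10.0.0.99", 80)]
pods = [("10.0.0.11", 80), ("10.0.0.12", 80)]
missing, unexpected = diff_backends(clb, pods)
print(missing)     # [('10.0.0.12', 80)]
print(unexpected)  # [('10.0.0.99', 80)]
```

Both lists should be empty when the CLB's RSs match the Pods exactly.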

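Check whether the corresponding Endpoints are normal

Suppose you have set Labels correctly on the workload and Selectors correctly on the Service resource. Once the workload's Pods are running normally, K8S lists them in the ready address list of the Service's Endpoints. You can view those Pods with the `kubectl get endpoints` command, as in the example shown in the figure.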
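In the Endpoints object, ready Pods appear under `subsets[].addresses` and not-ready Pods under `subsets[].notReadyAddresses`, each combined with `subsets[].ports`. Below is a minimal sketch that splits a parsed Endpoints object into ready and not-ready IP + port pairs. The sample is a made-up stand-in for `kubectl get endpoints web -n demo -o json`.

```python
import json
from typing import Any, Dict, List, Tuple

Addr = Tuple[str, int]


def endpoint_addresses(endpoints: Dict[str, Any]) -> Tuple[List[Addr], List[Addr]]:
    """Split an Endpoints object into (ready, not ready) IP+port pairs."""
    ready: List[Addr] = []
    not_ready: List[Addr] = []
    for subset in endpoints.get("subsets") or []:
        ports = [p["port"] for p in subset.get("ports") or []]
        for addr in subset.get("addresses") or []:
            ready.extend((addr["ip"], port) for port in ports)
        for addr in subset.get("notReadyAddresses") or []:
            not_ready.extend((addr["ip"], port) for port in ports)
    return ready, not_ready


# Made-up stand-in for `kubectl get endpoints web -n demo -o json`.
sample = json.loads("""
{"subsets": [{"addresses": [{"ip": "10.0.0.11"}],
              "notReadyAddresses": [{"ip": "10.0.0.12"}],
              "ports": [{"port": 80, "protocol": "TCP"}]}]}
""")
print(endpoint_addresses(sample))  # ([('10.0.0.11', 80)], [('10.0.0.12', 80)])
```

The ready pairs are the ones you would expect to see as RSs on the CLB. The not-ready ones point to Pods worth inspecting with `kubectl describe`.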

Note:

For abnormal Pods, you can use the `kubectl describe` command to find the cause. For example:

kubectl describe pod nginx-7c7c647ff7-4b8n5 -n demo

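Check whether the Pods can serve requests normally

A Pod whose status is Running may still fail to serve requests. Possible causes include the Pod not listening on the specified protocol + port, a logic error inside the Pod, or a stuck process.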
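A plain TCP connect attempt is a quick way to test the first cause, namely that nothing is listening on the port. Below is a minimal sketch, similar in spirit to using telnet. It assumes you run it somewhere inside the VPC that has Python, or inside the Pod if the image happens to include Python (not guaranteed). It only covers TCP. A successful connection does not rule out application-level errors or stuck request handling.

```python
import socket


def tcp_port_open(host: str, port: int, timeout: float = 3.0) -> bool:
    """True if a TCP connection to host:port succeeds within `timeout` seconds."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:  # refused, unreachable, or timed out (socket.timeout is an OSError)
        return False
```

For example, `tcp_port_open("10.0.0.11", 80)` checks a hypothetical Pod IP and port. A `False` result means nothing accepted the connection. That points at the Pod's listener or at network policy (such as the security group) rather than at the CLB.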

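Use the `kubectl exec` command to log in to the Pod. Then access the Pod IP + port directly with telnet, wget, curl, or a custom client tool. If direct access from inside the Pod fails, investigate further why the Pod cannot serve requests.

Check whether the security group bound to the Pods allows the protocol and port of the service they provide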

A security group controls the Pods' network access policy, much like IPTables rules on a Linux server. Check the rules against the traffic you expect to reach the Pods.
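The analogy with IPTables can be made concrete with a small rule evaluator. Below is a minimal sketch under stated assumptions. The `Rule` model is hypothetical and is not the Tencent Cloud API. It assumes inbound rules are matched in list order, with the first matching rule deciding the outcome, and that traffic matching no rule is denied. Confirm both behaviors in the Tencent Cloud security group documentation before relying on them.

```python
import ipaddress
from dataclasses import dataclass
from typing import List


@dataclass
class Rule:
    protocol: str  # "TCP", "UDP" or "ALL"
    ports: str     # "ALL", a single port "80", or a range "8000-8080"
    source: str    # source CIDR, e.g. "10.0.0.0/16"
    action: str    # "ACCEPT" or "DROP"

    def matches(self, protocol: str, port: int, src_ip: str) -> bool:
        if self.protocol != "ALL" and self.protocol != protocol:
            return False
        if self.ports != "ALL":
            lo, _, hi = self.ports.partition("-")
            if not int(lo) <= port <= int(hi or lo):
                return False
        return ipaddress.ip_address(src_ip) in ipaddress.ip_network(self.source)


def inbound_allowed(rules: List[Rule], protocol: str, port: int, src_ip: str) -> bool:
    """First matching rule wins (assumption); no match means denied (assumption)."""
    for rule in rules:
        if rule.matches(protocol, port, src_ip):
            return rule.action == "ACCEPT"
    return False


rules = [Rule("TCP", "80", "0.0.0.0/0", "ACCEPT"), Rule("ALL", "ALL", "0.0.0.0/0", "DROP")]
print(inbound_allowed(rules, "TCP", 80, "198.51.100.7"))    # True
print(inbound_allowed(rules, "TCP", 8080, "198.51.100.7"))  # False
```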

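When you create a workload through the console's interactive flow, you must specify a security group. TKE Serverless uses this security group to control the Pods' network access policy. The security group you select is saved in the workload's `spec.template.metadata.annotations` and is ultimately added to the Pods' annotations. For example: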

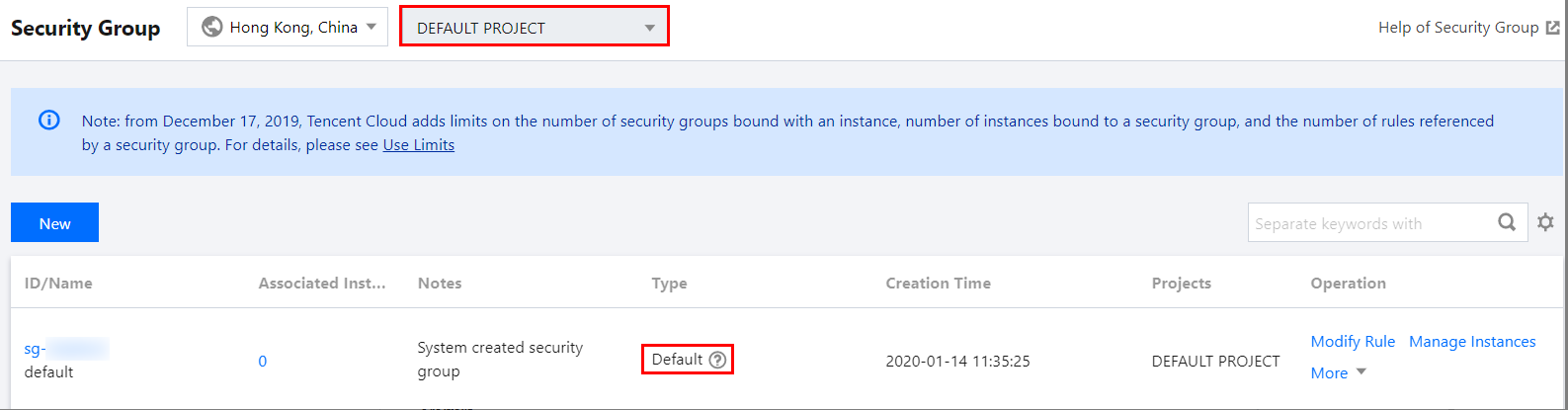

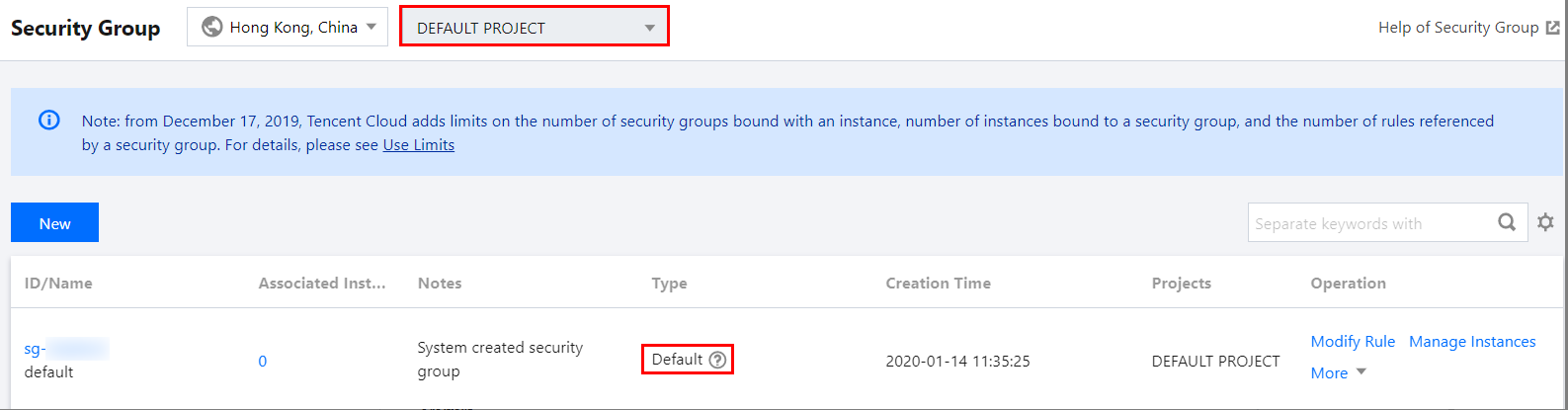

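Suppose you create a workload with kubectl and do not specify a security group for its Pods (through annotations). In that case, TKE Serverless uses the `default` security group of the default project in the same region under your account. To check it:

1. Log in to the VPC console and select Security Group in the left navigation bar.

2. At the top of the Security Group page, select the same region and the default project.

3. Find the `default` security group in the list and click Modify Rules to view its details, as shown in the figure.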
